Introduction: Database as a Service (DBaaS)

Cloud databases offer managed infrastructure, automatic backups, scaling, and high availability—letting teams focus on application logic rather than database administration.

Master cloud databases and managed services. Learn AWS RDS, Aurora, DynamoDB, Azure SQL, Cosmos DB, Google Cloud SQL, Spanner, and how to choose between managed and self-hosted databases.

Cloud databases offer managed infrastructure, automatic backups, scaling, and high availability—letting teams focus on application logic rather than database administration.

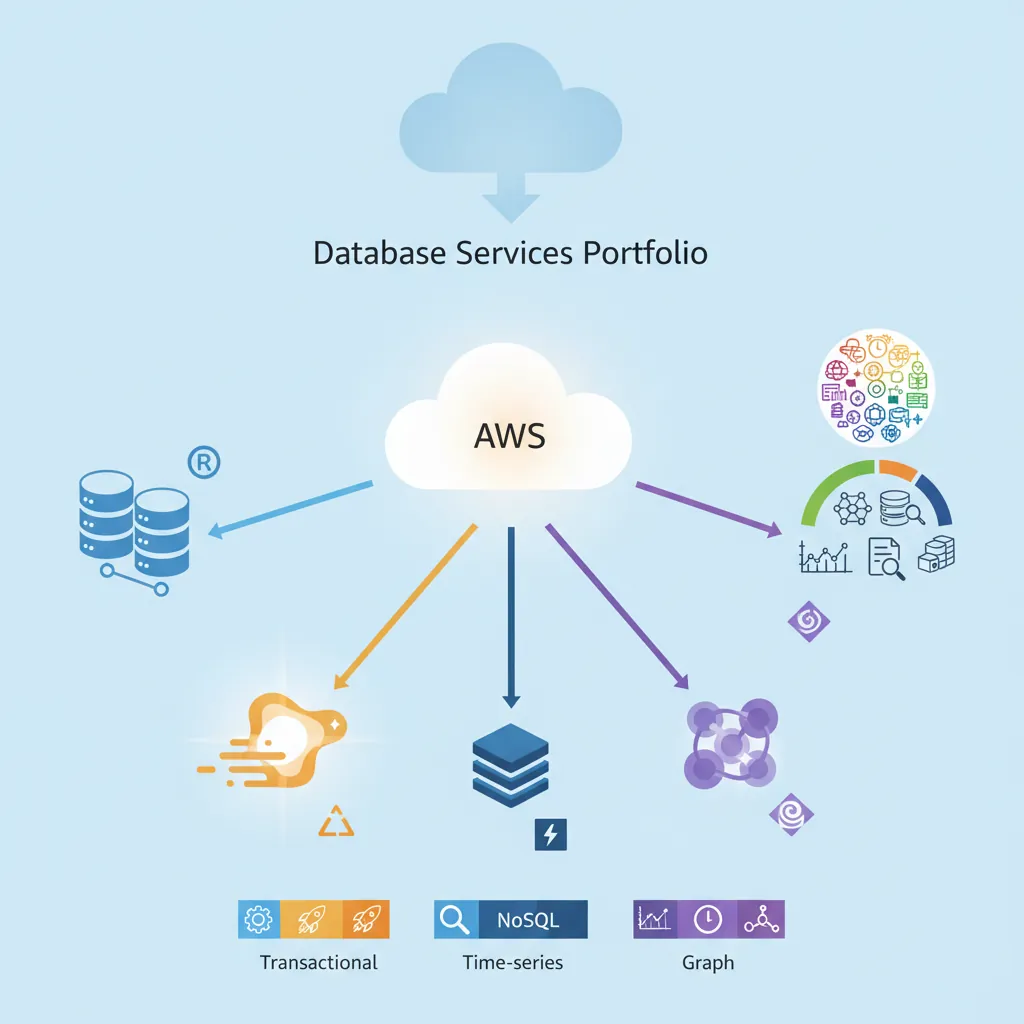

Amazon Web Services offers the most comprehensive database portfolio in the cloud. From fully managed relational databases to serverless NoSQL, AWS provides options for every workload.

RDS provides managed MySQL, PostgreSQL, MariaDB, Oracle, and SQL Server instances. AWS handles backups, patching, monitoring, and high availability—you focus on queries and schemas.

# Create a PostgreSQL RDS instance via AWS CLI

aws rds create-db-instance \

--db-instance-identifier mydb \

--db-instance-class db.t3.medium \

--engine postgres \

--engine-version 15.3 \

--master-username admin \

--master-user-password MySecurePass123! \

--allocated-storage 100 \

--storage-type gp3 \

--backup-retention-period 7 \

--multi-az \

--publicly-accessible

Aurora is AWS's cloud-native relational database, compatible with MySQL and PostgreSQL but with 5x MySQL and 3x PostgreSQL performance. Unlike RDS, Aurora separates compute from storage, enabling instant scaling.

-- Aurora automatically handles replication across 3 AZs

-- This is a standard PostgreSQL-compatible connection

CREATE TABLE orders (

order_id BIGSERIAL PRIMARY KEY,

customer_id INT NOT NULL,

total_amount DECIMAL(10, 2) NOT NULL,

order_date TIMESTAMP DEFAULT CURRENT_TIMESTAMP,

status VARCHAR(20) DEFAULT 'pending'

);

-- Aurora Global Database: replicate across regions with <1 second lag

-- Primary region handles writes, secondary regions serve reads

| Feature | RDS | Aurora |

|---|---|---|

| Storage | EBS-backed, manual scaling | Distributed storage, auto-scales to 128 TB |

| Read Replicas | Up to 15 (async) | Up to 15 (shared storage, <10ms lag) |

| Failover Time | 1-2 minutes | < 30 seconds |

| Cost | Lower (starting ~$0.04/hour) | Higher (~2x), but better performance |

| Global Databases | Cross-region read replicas (lag minutes) | < 1 second global replication |

DynamoDB is AWS's serverless NoSQL database—fully managed, single-digit millisecond latency, and scales to handle 10 trillion requests per day. No servers to provision, no patching, automatic backups.

// Node.js DynamoDB example

const { DynamoDBClient, PutItemCommand, GetItemCommand } = require("@aws-sdk/client-dynamodb");

const client = new DynamoDBClient({ region: "us-east-1" });

// Insert an item

const putParams = {

TableName: "Users",

Item: {

userId: { S: "user123" },

email: { S: "alice@example.com" },

createdAt: { N: Date.now().toString() },

profile: { M: {

name: { S: "Alice Chen" },

age: { N: "28" }

}}

}

};

await client.send(new PutItemCommand(putParams));

// Retrieve by primary key (always single-digit milliseconds)

const getParams = {

TableName: "Users",

Key: { userId: { S: "user123" } }

};

const result = await client.send(new GetItemCommand(getParams));

Provisioned Mode: Set read/write capacity units (RCU/WCU). Predictable performance, lower cost at consistent load. Use auto-scaling to adjust capacity automatically.

On-Demand Mode: Pay per request, zero capacity planning. Instantly handles spikes to 2x previous peak. Perfect for unpredictable workloads (new apps, seasonal spikes).

Cost Example: 1 million reads + 1 million writes per month:

ElastiCache provides managed Redis and Memcached clusters. Use it to cache database queries, session data, or serve as a high-speed data store for real-time applications.

# Python Redis caching pattern with ElastiCache

import redis

import json

import psycopg2

redis_client = redis.Redis(

host='myredis.abc123.0001.use1.cache.amazonaws.com',

port=6379,

decode_responses=True

)

def get_user(user_id):

# Try cache first

cache_key = f"user:{user_id}"

cached = redis_client.get(cache_key)

if cached:

return json.loads(cached)

# Cache miss - query database

conn = psycopg2.connect("postgresql://rds-endpoint/mydb")

cursor = conn.cursor()

cursor.execute("SELECT * FROM users WHERE id = %s", (user_id,))

user = cursor.fetchone()

# Store in cache for 1 hour

redis_client.setex(cache_key, 3600, json.dumps(user))

return user

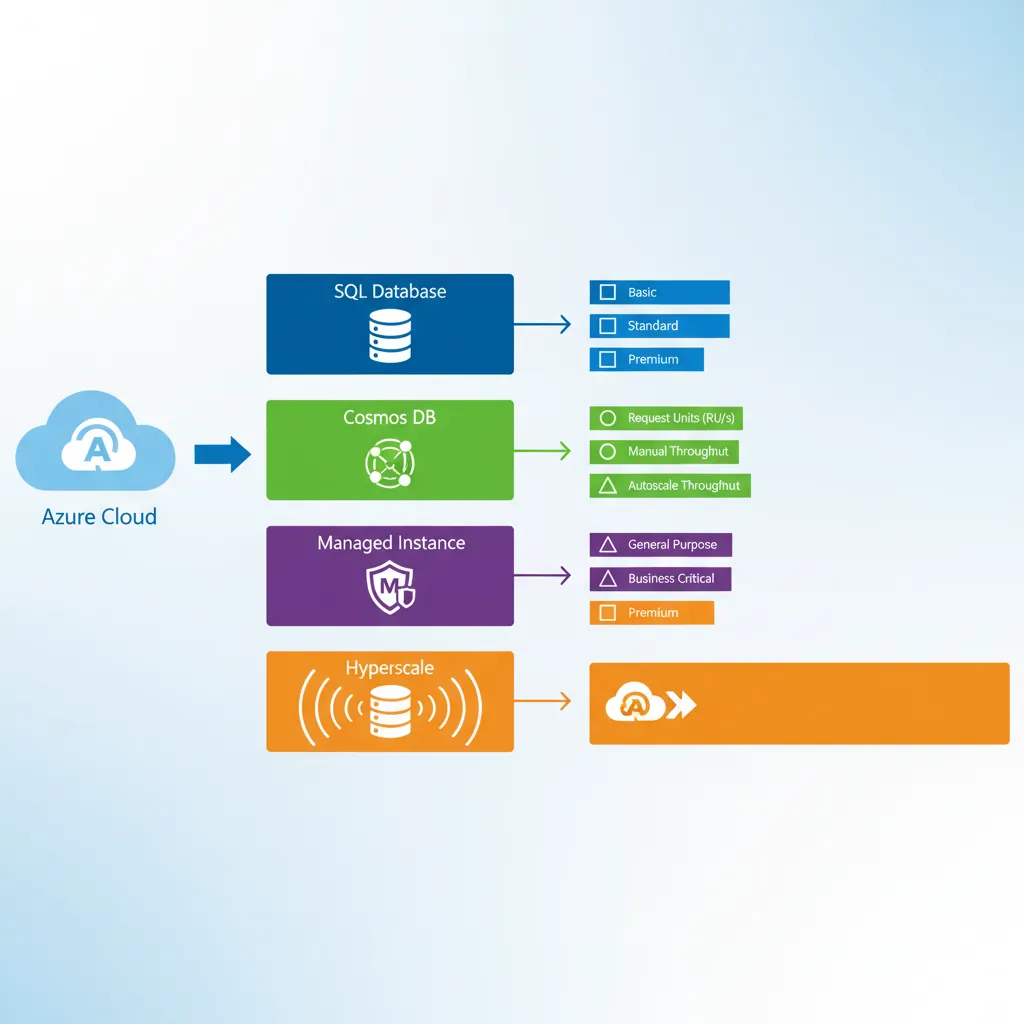

Microsoft Azure provides integrated database services optimized for .NET applications and enterprise workloads. Strong hybrid cloud capabilities allow seamless migration from on-premises SQL Server.

Azure SQL Database is a fully managed SQL Server database engine. It provides 99.995% availability SLA, intelligent query optimization, and automatic tuning via AI.

-- Azure SQL Database automatically handles:

-- - Index tuning (automatic index creation/removal)

-- - Query plan regression detection

-- - Adaptive query processing

-- Create a table with built-in temporal tables for history tracking

CREATE TABLE Employees (

EmployeeID INT PRIMARY KEY,

Name NVARCHAR(100),

Department NVARCHAR(50),

Salary DECIMAL(10, 2),

-- Temporal table columns for automatic versioning

ValidFrom DATETIME2 GENERATED ALWAYS AS ROW START HIDDEN,

ValidTo DATETIME2 GENERATED ALWAYS AS ROW END HIDDEN,

PERIOD FOR SYSTEM_TIME (ValidFrom, ValidTo)

) WITH (SYSTEM_VERSIONING = ON);

-- Query historical data (time travel)

SELECT * FROM Employees

FOR SYSTEM_TIME AS OF '2025-01-15 10:00:00';

Cosmos DB is Microsoft's globally distributed, multi-model NoSQL database. It supports document, key-value, graph, and column-family data models with multiple APIs (SQL, MongoDB, Cassandra, Gremlin, Table).

// Cosmos DB with MongoDB API (drop-in replacement)

const { MongoClient } = require('mongodb');

const client = new MongoClient(

'mongodb://mycosmosdb:key@mycosmosdb.mongo.cosmos.azure.com:10255/?ssl=true'

);

await client.connect();

const db = client.db('ecommerce');

// Automatic global replication across regions

await db.collection('products').insertOne({

productId: 'p-12345',

name: 'Wireless Headphones',

price: 79.99,

inventory: 150,

// Cosmos DB partitions by this key for horizontal scaling

category: 'electronics' // partition key

});

// Reads served from nearest region with tunable consistency

const product = await db.collection('products').findOne({

productId: 'p-12345'

});

Provisioned Throughput: Reserve Request Units (RUs) per second. 1 RU = cost to read 1 KB document. Predictable cost, guaranteed performance.

Serverless: Pay per request, no capacity planning. Up to 5000 RU/s, 50 GB storage. Great for dev/test and low-traffic apps.

Autoscale: Automatically scales between 10% and 100% of max RU/s. Ideal for variable workloads.

Azure PostgreSQL offers managed PostgreSQL with built-in high availability, automatic backups, and intelligent performance recommendations.

# Connect to Azure PostgreSQL

psql "host=myserver.postgres.database.azure.com port=5432 \

dbname=mydb user=admin@myserver sslmode=require"

# Azure provides pglogical extension for logical replication

CREATE EXTENSION IF NOT EXISTS pglogical;

# Enable read replicas for read scaling

az postgres server replica create \

--name myserver-replica \

--source-server myserver \

--resource-group myResourceGroup

Google Cloud brings database innovation from powering Google's own services (Gmail, YouTube, Google Search) to the public cloud. Focus on analytics, machine learning integration, and global scale.

Cloud SQL provides fully managed MySQL, PostgreSQL, and SQL Server. Deep integration with other GCP services like BigQuery, Dataproc, and Vertex AI.

# Create Cloud SQL PostgreSQL instance with high availability

gcloud sql instances create mydb \

--database-version=POSTGRES_15 \

--tier=db-n1-standard-2 \

--region=us-central1 \

--availability-type=REGIONAL \

--backup-start-time=03:00

# Enable automatic storage increase

gcloud sql instances patch mydb \

--storage-auto-increase \

--storage-auto-increase-limit=500

# Create read replica for scaling reads

gcloud sql instances create mydb-replica \

--master-instance-name=mydb \

--tier=db-n1-standard-1

Cloud Spanner is Google's globally distributed, horizontally scalable relational database. It's the only database that combines SQL, strong consistency, and global scale—powering Google Ads and Google Play.

-- Spanner uses standard SQL with extensions for interleaved tables

CREATE TABLE Users (

UserId INT64 NOT NULL,

Email STRING(256),

CreatedAt TIMESTAMP NOT NULL OPTIONS (allow_commit_timestamp=true)

) PRIMARY KEY (UserId);

-- Interleaved tables: child rows stored with parent for efficiency

CREATE TABLE Orders (

UserId INT64 NOT NULL,

OrderId INT64 NOT NULL,

TotalAmount NUMERIC,

OrderDate DATE

) PRIMARY KEY (UserId, OrderId),

INTERLEAVE IN PARENT Users ON DELETE CASCADE;

-- Global transactions with external consistency

BEGIN TRANSACTION;

UPDATE Users SET LastOrderDate = CURRENT_TIMESTAMP()

WHERE UserId = 12345;

INSERT INTO Orders (UserId, OrderId, TotalAmount)

VALUES (12345, 99999, 149.99);

COMMIT TIMESTAMP;

Spanner uses TrueTime—Google's distributed time API backed by GPS and atomic clocks—to guarantee global ordering of transactions. This enables:

Firestore is Google's serverless document database, optimized for mobile and web apps. Real-time synchronization, offline support, and automatic scaling make it ideal for collaborative applications.

// JavaScript Firestore SDK

import { initializeApp } from 'firebase/app';

import { getFirestore, collection, addDoc, onSnapshot } from 'firebase/firestore';

const app = initializeApp({ /* config */ });

const db = getFirestore(app);

// Add a document

const docRef = await addDoc(collection(db, 'tasks'), {

title: 'Complete database tutorial',

completed: false,

createdAt: new Date(),

userId: 'user123'

});

// Real-time listener - updates UI instantly when data changes

const unsubscribe = onSnapshot(

collection(db, 'tasks'),

(snapshot) => {

snapshot.docChanges().forEach((change) => {

if (change.type === 'added') {

console.log('New task:', change.doc.data());

}

if (change.type === 'modified') {

console.log('Modified task:', change.doc.data());

}

});

}

);

Choosing the right cloud for your database depends on your existing infrastructure, team expertise, and specific workload requirements.

| Category | AWS | Azure | GCP |

|---|---|---|---|

| Managed PostgreSQL | RDS, Aurora | Azure Database for PostgreSQL | Cloud SQL |

| Serverless SQL | Aurora Serverless v2 | Azure SQL Serverless | - |

| Global NoSQL | DynamoDB Global Tables | Cosmos DB | Firestore |

| Global SQL | Aurora Global | Cosmos DB SQL API | Cloud Spanner |

| Caching | ElastiCache | Azure Cache for Redis | Memory Store |

| Data Warehouse | Redshift | Synapse Analytics | BigQuery |

| Market Share | ~33% | ~22% | ~10% |

| Best For | Widest service catalog, mature ecosystem | Hybrid cloud, .NET workloads | Analytics, ML integration |

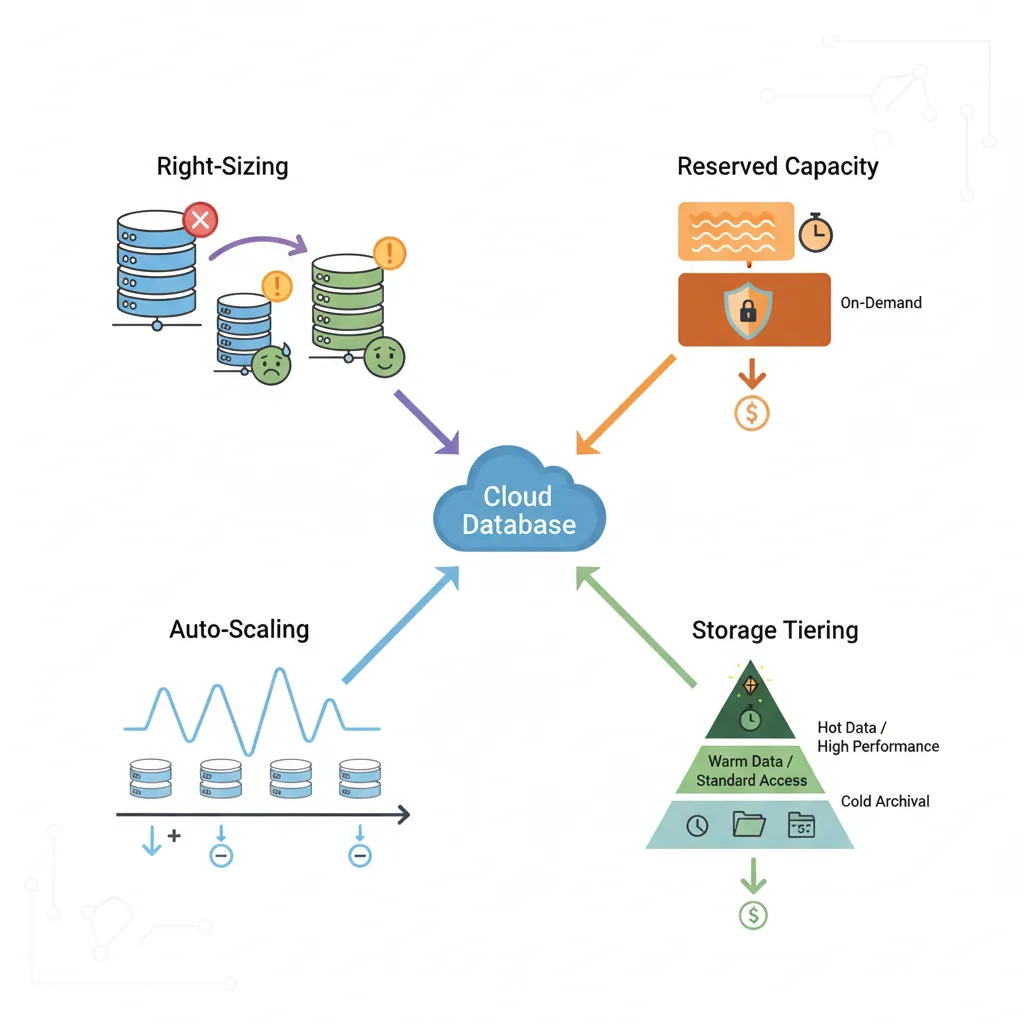

Cloud database costs can spiral quickly without proper management. Apply these strategies to reduce spending by 40-60%.

# AWS CloudWatch query to analyze RDS CPU utilization

aws cloudwatch get-metric-statistics \

--namespace AWS/RDS \

--metric-name CPUUtilization \

--dimensions Name=DBInstanceIdentifier,Value=mydb \

--start-time 2026-01-01T00:00:00Z \

--end-time 2026-02-01T00:00:00Z \

--period 86400 \

--statistics Average,Maximum

# If average < 40% and max < 70%, consider downsizing

Commit to 1-3 years for 40-60% discount compared to on-demand. Break-even is typically 6-9 months.

Scenario: Traffic peaks 8 hours/day, idle 16 hours/day

-- Archive old data to cheaper storage tiers

-- Move completed orders older than 2 years to Glacier

-- Step 1: Export to S3/Blob Storage

SELECT * FROM orders

WHERE created_at < NOW() - INTERVAL '2 years'

INTO OUTFILE 's3://my-bucket/archive_2024/orders.csv';

-- Step 2: Delete from primary database

DELETE FROM orders

WHERE created_at < NOW() - INTERVAL '2 years';

-- Step 3: Use S3 Select or BigQuery for rare queries on archives

# CloudWatch/Azure Monitor alert for unexpected cost spike

AnomalyCostAlert:

Metric: EstimatedCharges

Threshold: $500/day

EvaluationPeriods: 1

Actions:

- SendNotification: ops-team@company.com

- LambdaFunction: auto-scale-down

The "lift and shift" vs. managed service debate is nuanced. Here's when each makes sense.

Many organizations use a hybrid strategy:

Cloud databases simplify operations and enable rapid scaling. Understanding each cloud provider's offerings helps you make informed decisions for your workloads and budget.