Introduction to NLP Evaluation

Proper evaluation is essential for understanding model capabilities and limitations. Beyond accuracy, we must consider fairness, bias, and societal impact of NLP systems.

Key Insight

Evaluation goes beyond metrics—responsible NLP requires considering who benefits, who is harmed, and how systems can be made more fair and transparent.

NLP Mastery

NLP Fundamentals & Linguistic Basics

Phonology, morphology, syntax, semantics, pragmaticsTokenization & Text Cleaning

Subword tokenization, BPE, stopwords, normalizationText Representation & Feature Engineering

Bag-of-words, TF-IDF, feature extraction, vectorizationWord Embeddings

Word2Vec, GloVe, FastText, embedding spaces, analogiesStatistical Language Models & N-grams

Probability chains, smoothing, perplexity, Markov modelsNeural Networks for NLP

Feedforward nets, backpropagation, activation functionsRNNs, LSTMs & GRUs

Sequence modeling, vanishing gradients, gated architecturesTransformers & Attention Mechanism

Self-attention, multi-head attention, positional encodingPretrained Language Models & Transfer Learning

BERT, RoBERTa, fine-tuning, feature extractionGPT Models & Text Generation

Autoregressive generation, prompting, GPT architectureCore NLP Tasks

NER, POS tagging, sentiment analysis, text classificationAdvanced NLP Tasks

Question answering, summarization, machine translationMultilingual & Cross-lingual NLP

mBERT, XLM-R, zero-shot transfer, language diversityEvaluation, Ethics & Responsible NLP

BLEU, ROUGE, bias detection, fairness, responsible AINLP Systems, Optimization & Production

Model serving, quantization, distillation, deploymentCutting-Edge & Research Topics

LLMs, multimodal NLP, reasoning, emerging researchEvaluation Metrics

Classification Metrics

Classification metrics form the backbone of evaluating NLP systems for tasks like sentiment analysis, spam detection, named entity recognition, and text categorization. Understanding these metrics helps you choose the right one for your specific use case, as accuracy alone can be misleading when dealing with imbalanced datasets common in real-world NLP applications.

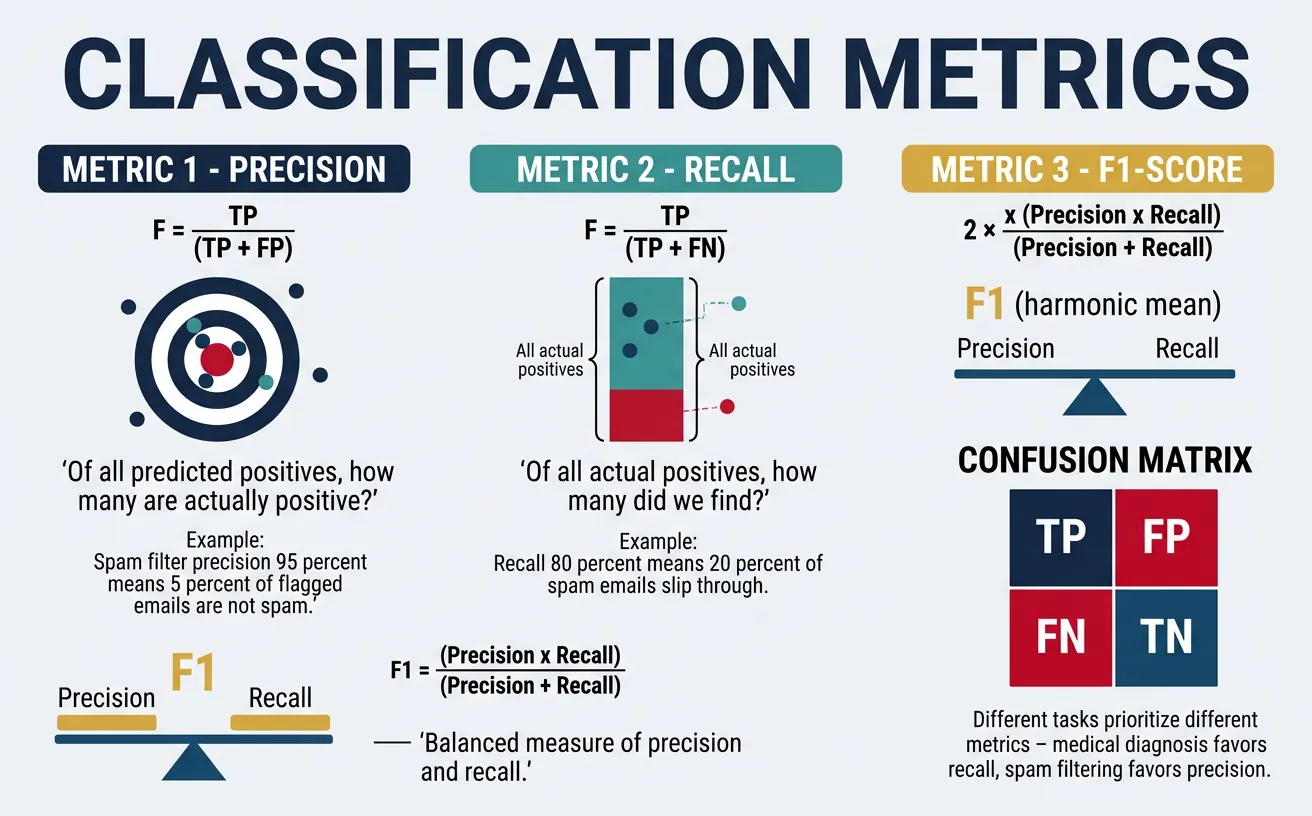

The fundamental metrics—precision, recall, and F1-score—each capture different aspects of model performance. Precision measures how many of your positive predictions were correct, while recall measures how many actual positives you found. The F1-score provides a harmonic mean of both, useful when you need a single balanced metric.

Core Classification Metrics:

$$\text{Precision} = \frac{TP}{TP + FP} \qquad \text{Recall} = \frac{TP}{TP + FN}$$

$$F_1 = 2 \cdot \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} = \frac{2 \cdot TP}{2 \cdot TP + FP + FN}$$

TP = True Positives · FP = False Positives · FN = False Negatives

Metric Selection Guide

Choose Precision when false positives are costly (spam detection—don't lose important emails). Choose Recall when false negatives are costly (disease detection—don't miss cases). Choose F1 when you need balance or have class imbalance.

import numpy as np

from sklearn.metrics import (

accuracy_score, precision_score, recall_score, f1_score,

classification_report, confusion_matrix, precision_recall_fscore_support

)

import matplotlib.pyplot as plt

import seaborn as sns

# Simulated sentiment analysis predictions

np.random.seed(42)

# True labels: 0 = Negative, 1 = Positive

y_true = np.array([1, 0, 1, 1, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 0, 1, 1, 1, 0, 1])

y_pred = np.array([1, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 1, 1, 0, 1])

# Calculate individual metrics

accuracy = accuracy_score(y_true, y_pred)

precision = precision_score(y_true, y_pred)

recall = recall_score(y_true, y_pred)

f1 = f1_score(y_true, y_pred)

print("="*50)

print("Classification Metrics for Sentiment Analysis")

print("="*50)

print(f"Accuracy: {accuracy:.4f} (Correct predictions / Total)")

print(f"Precision: {precision:.4f} (True Pos / Predicted Pos)")

print(f"Recall: {recall:.4f} (True Pos / Actual Pos)")

print(f"F1-Score: {f1:.4f} (Harmonic mean of P and R)")

# Detailed classification report

print("\nDetailed Classification Report:")

print(classification_report(y_true, y_pred,

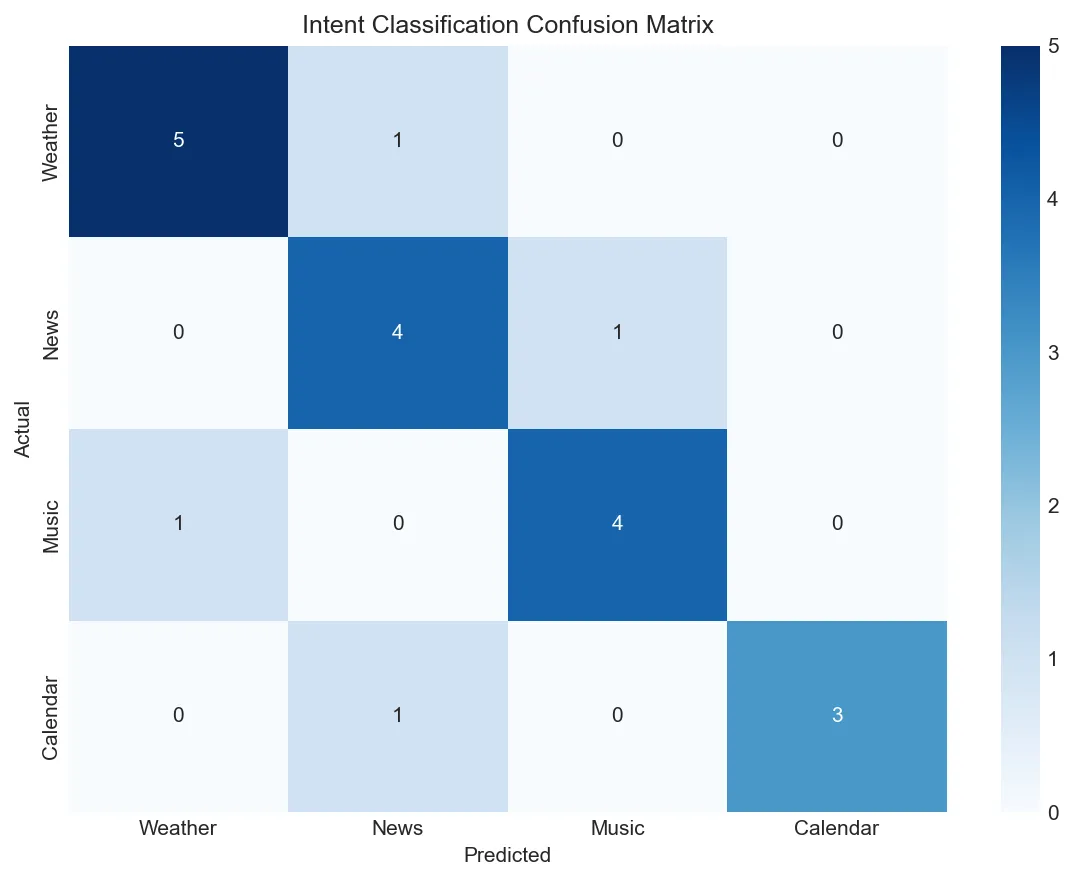

target_names=['Negative', 'Positive']))When dealing with multi-class classification common in NLP (topic classification, intent detection), you need to consider averaging strategies. Macro averaging treats all classes equally, micro averaging gives equal weight to each sample, and weighted averaging accounts for class imbalance.

import numpy as np

from sklearn.metrics import precision_recall_fscore_support, confusion_matrix

import matplotlib.pyplot as plt

import seaborn as sns

# Multi-class intent classification example

# Classes: 0=weather, 1=news, 2=music, 3=calendar

y_true_multi = np.array([0, 1, 2, 0, 3, 1, 2, 0, 1, 3, 2, 0, 1, 2, 3, 0, 1, 2, 0, 3])

y_pred_multi = np.array([0, 1, 2, 0, 3, 2, 2, 0, 1, 1, 2, 0, 1, 0, 3, 0, 1, 2, 1, 3])

class_names = ['Weather', 'News', 'Music', 'Calendar']

# Different averaging strategies

for avg in ['micro', 'macro', 'weighted']:

p, r, f1, _ = precision_recall_fscore_support(y_true_multi, y_pred_multi, average=avg)

print(f"{avg.capitalize():8s} - Precision: {p:.3f}, Recall: {r:.3f}, F1: {f1:.3f}")

# Per-class metrics

print("\nPer-Class Metrics:")

p, r, f1, support = precision_recall_fscore_support(y_true_multi, y_pred_multi, average=None)

for i, name in enumerate(class_names):

print(f" {name:10s}: P={p[i]:.3f}, R={r[i]:.3f}, F1={f1[i]:.3f}, Support={support[i]}")

# Confusion matrix visualization

cm = confusion_matrix(y_true_multi, y_pred_multi)

plt.figure(figsize=(8, 6))

sns.heatmap(cm, annot=True, fmt='d', cmap='Blues',

xticklabels=class_names, yticklabels=class_names)

plt.xlabel('Predicted')

plt.ylabel('Actual')

plt.title('Intent Classification Confusion Matrix')

plt.tight_layout()

plt.show()

Matthews Correlation Coefficient (MCC)

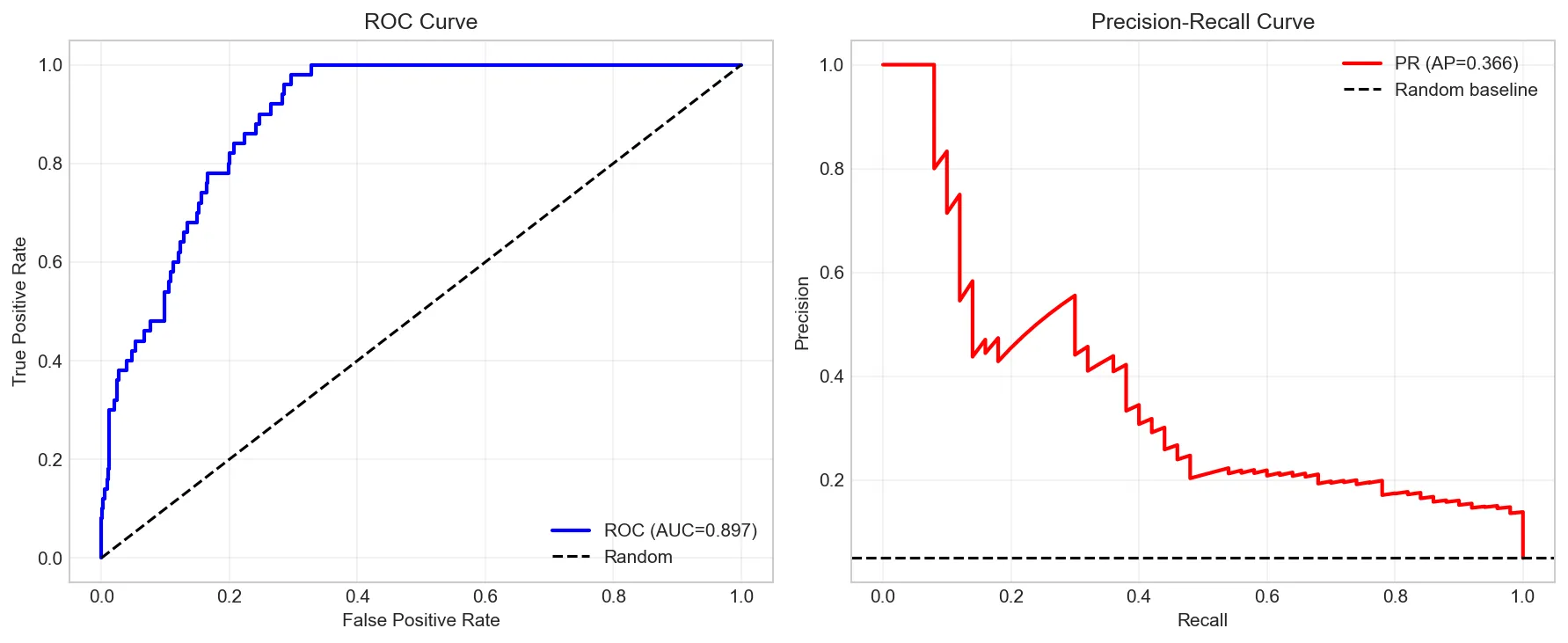

MCC is considered one of the most reliable metrics for binary classification, especially with imbalanced datasets. It ranges from -1 (total disagreement) to +1 (perfect prediction), with 0 indicating random prediction.

import numpy as np

from sklearn.metrics import matthews_corrcoef, roc_auc_score, average_precision_score

from sklearn.metrics import roc_curve, precision_recall_curve

import matplotlib.pyplot as plt

# Highly imbalanced dataset (spam detection: 5% spam)

np.random.seed(42)

n_samples = 1000

y_true_imb = np.array([1] * 50 + [0] * 950) # 5% positive class

# Simulated prediction probabilities

y_proba = np.random.beta(2, 5, n_samples) # More false than true

y_proba[:50] += 0.3 # Boost spam probabilities

y_proba = np.clip(y_proba, 0, 1)

y_pred_imb = (y_proba > 0.5).astype(int)

# Compare metrics on imbalanced data

from sklearn.metrics import accuracy_score, f1_score

print("Evaluation on Imbalanced Data (5% positive class):")

print(f" Accuracy: {accuracy_score(y_true_imb, y_pred_imb):.4f} (misleading!)")

print(f" F1-Score: {f1_score(y_true_imb, y_pred_imb):.4f}")

print(f" MCC: {matthews_corrcoef(y_true_imb, y_pred_imb):.4f}")

print(f" ROC-AUC: {roc_auc_score(y_true_imb, y_proba):.4f}")

print(f" PR-AUC (AP): {average_precision_score(y_true_imb, y_proba):.4f}")

# Plot ROC and PR curves

fig, axes = plt.subplots(1, 2, figsize=(12, 5))

# ROC Curve

fpr, tpr, _ = roc_curve(y_true_imb, y_proba)

axes[0].plot(fpr, tpr, 'b-', linewidth=2, label=f'ROC (AUC={roc_auc_score(y_true_imb, y_proba):.3f})')

axes[0].plot([0, 1], [0, 1], 'k--', label='Random')

axes[0].set_xlabel('False Positive Rate')

axes[0].set_ylabel('True Positive Rate')

axes[0].set_title('ROC Curve')

axes[0].legend()

axes[0].grid(True, alpha=0.3)

# PR Curve

precision_curve, recall_curve, _ = precision_recall_curve(y_true_imb, y_proba)

axes[1].plot(recall_curve, precision_curve, 'r-', linewidth=2,

label=f'PR (AP={average_precision_score(y_true_imb, y_proba):.3f})')

axes[1].axhline(y=0.05, color='k', linestyle='--', label='Random baseline')

axes[1].set_xlabel('Recall')

axes[1].set_ylabel('Precision')

axes[1].set_title('Precision-Recall Curve')

axes[1].legend()

axes[1].grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

Text Generation Metrics

Evaluating text generation quality is fundamentally harder than classification because there's no single "correct" answer. A good translation or summary can be expressed in many valid ways. Generation metrics attempt to measure how similar generated text is to reference human-written text, each capturing different aspects of quality.

BLEU (Bilingual Evaluation Understudy) measures n-gram precision—how many n-grams in the generated text appear in the reference. It's the most widely used metric for machine translation and was revolutionary when introduced, though it has known limitations with morphologically rich languages and doesn't consider recall.

BLEU Score Formula:

$$\text{BLEU} = BP \cdot \exp\!\left(\sum_{n=1}^{N} w_n \log p_n\right)$$

$BP = \min\!\left(1,\, \exp\!\left(1 - \tfrac{r}{c}\right)\right)$ (brevity penalty) · $p_n$ = $n$-gram precision · $w_n = \tfrac{1}{N}$ (equal weights)

import numpy as np

from collections import Counter

def calculate_bleu(reference, candidate, max_n=4):

"""

Calculate BLEU score from scratch to understand the algorithm.

BLEU = BP * exp(sum(w_n * log(p_n)))

where BP is brevity penalty and p_n is n-gram precision

"""

def get_ngrams(tokens, n):

return [tuple(tokens[i:i+n]) for i in range(len(tokens)-n+1)]

def ngram_precision(ref_tokens, cand_tokens, n):

cand_ngrams = get_ngrams(cand_tokens, n)

ref_ngrams = get_ngrams(ref_tokens, n)

if not cand_ngrams:

return 0

ref_counts = Counter(ref_ngrams)

cand_counts = Counter(cand_ngrams)

# Clipped counts

clipped = sum(min(cand_counts[ng], ref_counts[ng]) for ng in cand_counts)

return clipped / len(cand_ngrams)

ref_tokens = reference.lower().split()

cand_tokens = candidate.lower().split()

# Calculate brevity penalty

ref_len = len(ref_tokens)

cand_len = len(cand_tokens)

if cand_len <= ref_len:

bp = np.exp(1 - ref_len/cand_len) if cand_len > 0 else 0

else:

bp = 1.0

# Calculate n-gram precisions

precisions = []

for n in range(1, max_n + 1):

p_n = ngram_precision(ref_tokens, cand_tokens, n)

precisions.append(p_n)

print(f" {n}-gram precision: {p_n:.4f}")

# Geometric mean with equal weights

if 0 in precisions:

bleu = 0

else:

log_precisions = [np.log(p) for p in precisions]

bleu = bp * np.exp(np.mean(log_precisions))

print(f" Brevity Penalty: {bp:.4f}")

return bleu

# Example: Machine translation evaluation

reference = "The cat sat on the mat"

candidates = [

"The cat sat on the mat", # Perfect match

"The cat is sitting on the mat", # Good paraphrase

"A cat was on the mat", # Acceptable

"The dog ran in the park", # Wrong content

]

print("BLEU Score Calculation Examples:")

print("="*50)

for i, cand in enumerate(candidates, 1):

print(f"\nCandidate {i}: '{cand}'")

bleu = calculate_bleu(reference, cand)

print(f" BLEU Score: {bleu:.4f}")ROUGE (Recall-Oriented Understudy for Gisting Evaluation) is the standard metric for summarization. Unlike BLEU's precision focus, ROUGE emphasizes recall—what fraction of the reference n-grams appear in the candidate. ROUGE-L measures longest common subsequence, capturing sentence-level structure.

# Using the rouge-score library for production use

# pip install rouge-score

from collections import Counter

import numpy as np

def calculate_rouge_scores(reference, candidate):

"""

Calculate ROUGE-1, ROUGE-2, and ROUGE-L scores.

"""

def get_ngrams(tokens, n):

return [tuple(tokens[i:i+n]) for i in range(len(tokens)-n+1)]

def rouge_n(ref_tokens, cand_tokens, n):

ref_ngrams = Counter(get_ngrams(ref_tokens, n))

cand_ngrams = Counter(get_ngrams(cand_tokens, n))

overlap = sum((ref_ngrams & cand_ngrams).values())

precision = overlap / sum(cand_ngrams.values()) if cand_ngrams else 0

recall = overlap / sum(ref_ngrams.values()) if ref_ngrams else 0

f1 = 2 * precision * recall / (precision + recall) if (precision + recall) > 0 else 0

return {'precision': precision, 'recall': recall, 'f1': f1}

def lcs_length(x, y):

"""Longest Common Subsequence length using dynamic programming."""

m, n = len(x), len(y)

dp = [[0] * (n + 1) for _ in range(m + 1)]

for i in range(1, m + 1):

for j in range(1, n + 1):

if x[i-1] == y[j-1]:

dp[i][j] = dp[i-1][j-1] + 1

else:

dp[i][j] = max(dp[i-1][j], dp[i][j-1])

return dp[m][n]

def rouge_l(ref_tokens, cand_tokens):

lcs = lcs_length(ref_tokens, cand_tokens)

precision = lcs / len(cand_tokens) if cand_tokens else 0

recall = lcs / len(ref_tokens) if ref_tokens else 0

f1 = 2 * precision * recall / (precision + recall) if (precision + recall) > 0 else 0

return {'precision': precision, 'recall': recall, 'f1': f1}

ref_tokens = reference.lower().split()

cand_tokens = candidate.lower().split()

return {

'rouge-1': rouge_n(ref_tokens, cand_tokens, 1),

'rouge-2': rouge_n(ref_tokens, cand_tokens, 2),

'rouge-l': rouge_l(ref_tokens, cand_tokens)

}

# Example: Summarization evaluation

reference_summary = "The quick brown fox jumps over the lazy dog near the river"

candidate_summaries = [

"The quick brown fox jumps over the lazy dog",

"A fast brown fox leaps over a sleepy dog",

"The fox jumped over the dog",

]

print("ROUGE Score Comparison for Summarization:")

print("="*60)

print(f"Reference: '{reference_summary}'\n")

for i, cand in enumerate(candidate_summaries, 1):

print(f"Candidate {i}: '{cand}'")

scores = calculate_rouge_scores(reference_summary, cand)

for metric, values in scores.items():

print(f" {metric.upper()}: P={values['precision']:.3f}, "

f"R={values['recall']:.3f}, F1={values['f1']:.3f}")

print()BERTScore represents a paradigm shift in generation evaluation by using contextual embeddings instead of exact n-gram matching. It computes cosine similarity between BERT embeddings of tokens, allowing it to recognize semantic equivalence even when words differ. This makes it much more aligned with human judgment.

# pip install bert-score

# Note: This requires transformers and torch

import numpy as np

# Simulated BERTScore calculation concept

# In practice, use: from bert_score import score

def explain_bertscore_concept():

"""

BERTScore computes similarity using BERT embeddings.

For each token in candidate:

1. Get BERT embedding

2. Find maximum cosine similarity with any reference token

Precision = avg(max similarity for each candidate token)

Recall = avg(max similarity for each reference token)

F1 = harmonic mean

"""

# Simulated token embeddings (in reality, from BERT)

# Representing: ["fast", "quick", "dog", "cat", "animal"]

embeddings = {

'fast': np.array([0.9, 0.1, 0.0, 0.0]),

'quick': np.array([0.85, 0.15, 0.0, 0.0]), # Similar to 'fast'

'dog': np.array([0.0, 0.0, 0.9, 0.1]),

'cat': np.array([0.0, 0.0, 0.7, 0.3]), # Similar to 'dog'

'animal': np.array([0.0, 0.0, 0.5, 0.5]), # General

'runs': np.array([0.3, 0.3, 0.2, 0.2]),

'jumps': np.array([0.35, 0.3, 0.2, 0.15]), # Similar to 'runs'

}

def cosine_similarity(a, b):

return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

reference_tokens = ['quick', 'dog', 'runs']

candidate_tokens = ['fast', 'cat', 'jumps']

print("BERTScore Concept Demonstration:")

print("="*50)

print(f"Reference: {reference_tokens}")

print(f"Candidate: {candidate_tokens}")

print()

# Calculate precision (from candidate perspective)

precision_scores = []

print("Precision calculation (candidate ? reference):")

for cand_tok in candidate_tokens:

cand_emb = embeddings[cand_tok]

max_sim = max(cosine_similarity(cand_emb, embeddings[ref_tok])

for ref_tok in reference_tokens)

precision_scores.append(max_sim)

print(f" '{cand_tok}' best match: {max_sim:.3f}")

# Calculate recall (from reference perspective)

recall_scores = []

print("\nRecall calculation (reference ? candidate):")

for ref_tok in reference_tokens:

ref_emb = embeddings[ref_tok]

max_sim = max(cosine_similarity(ref_emb, embeddings[cand_tok])

for cand_tok in candidate_tokens)

recall_scores.append(max_sim)

print(f" '{ref_tok}' best match: {max_sim:.3f}")

precision = np.mean(precision_scores)

recall = np.mean(recall_scores)

f1 = 2 * precision * recall / (precision + recall)

print(f"\nBERTScore Results:")

print(f" Precision: {precision:.4f}")

print(f" Recall: {recall:.4f}")

print(f" F1: {f1:.4f}")

return precision, recall, f1

explain_bertscore_concept()Perplexity for Language Models

Perplexity is the fundamental metric for evaluating language models. Intuitively, it measures how "surprised" the model is by the test data—lower perplexity means the model assigns higher probability to the actual text. Mathematically, perplexity is the exponentiation of the average negative log-likelihood per token.

A perplexity of 100 means the model is as uncertain as if it were choosing uniformly among 100 options at each step. State-of-the-art language models achieve perplexity in the low teens on standard benchmarks like Penn Treebank, while GPT-3 and similar models can achieve single-digit perplexity on certain datasets.

import numpy as np

def calculate_perplexity(log_probs):

"""

Calculate perplexity from log probabilities.

Perplexity = exp(-1/N * sum(log P(w_i | context)))

Lower perplexity = model assigns higher probability to text = better

"""

avg_neg_log_prob = -np.mean(log_probs)

perplexity = np.exp(avg_neg_log_prob)

return perplexity

# Simulate language model predictions

np.random.seed(42)

# Test sentences with their (simulated) log probabilities

test_cases = [

{

'name': 'Common phrase',

'text': 'The cat sat on the mat',

'log_probs': np.array([-2.3, -1.8, -2.1, -1.5, -1.9, -2.0]) # High prob

},

{

'name': 'Unusual phrase',

'text': 'The cat computed the eigenvalues',

'log_probs': np.array([-2.3, -1.8, -5.2, -4.8, -4.5]) # Lower prob for rare combo

},

{

'name': 'Nonsense',

'text': 'Colorless green ideas sleep furiously',

'log_probs': np.array([-4.5, -5.2, -6.1, -5.8, -5.5]) # Grammatical but semantic nonsense

}

]

print("Perplexity Calculation Examples:")

print("="*60)

for case in test_cases:

ppl = calculate_perplexity(case['log_probs'])

avg_log_prob = np.mean(case['log_probs'])

print(f"\n{case['name']}:")

print(f" Text: '{case['text']}'")

print(f" Avg log probability: {avg_log_prob:.3f}")

print(f" Perplexity: {ppl:.2f}")

print(f" Interpretation: Model is as uncertain as choosing from ~{int(ppl)} options")

# Compare model quality

print("\n" + "="*60)

print("Model Quality Comparison (same test set):")

print("="*60)

models = [

('Baseline n-gram', 150.0),

('LSTM', 65.0),

('Transformer', 25.0),

('GPT-2 Small', 18.5),

('GPT-2 Large', 12.3),

]

for model_name, ppl in models:

bar = '¦' * int(50 - ppl/3)

print(f"{model_name:20s} PPL: {ppl:6.1f} {bar}")NLP Benchmarks

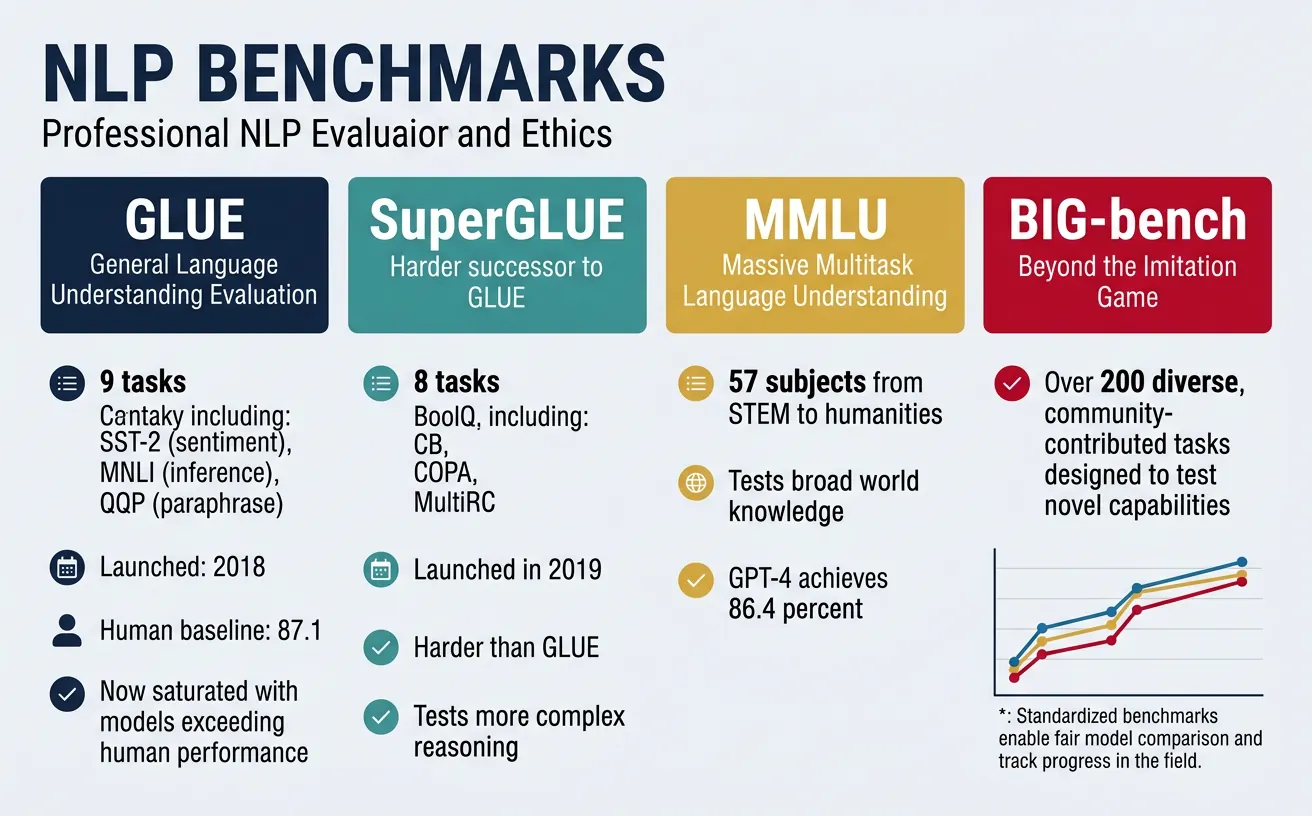

Standardized benchmarks enable fair comparison between models and track progress in the field. The landscape of NLP benchmarks has evolved rapidly, from single-task datasets to comprehensive multi-task evaluations. Understanding these benchmarks helps you contextualize model claims and choose appropriate evaluation setups for your own work.

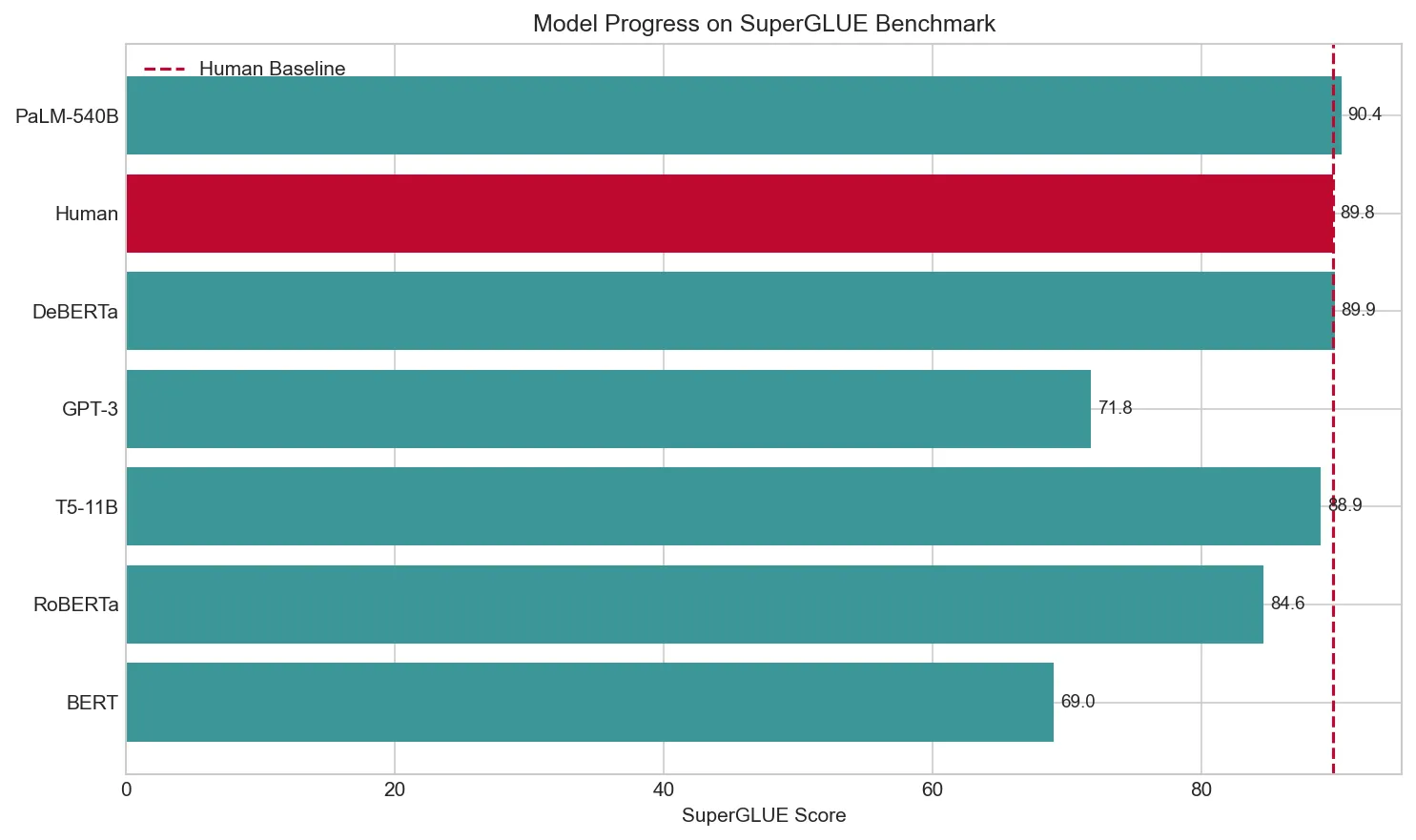

GLUE (General Language Understanding Evaluation) and its harder successor SuperGLUE have become the standard benchmarks for natural language understanding. They include tasks like sentiment analysis, textual entailment, and question answering. When BERT-based models saturated GLUE, SuperGLUE introduced more challenging tasks requiring reasoning and common sense.

Benchmark Saturation Warning

Be cautious of benchmark scores near human performance. Models may achieve high scores through shortcuts or dataset artifacts rather than true understanding. Always evaluate on your target domain, not just standard benchmarks.

import numpy as np

import matplotlib.pyplot as plt

# Major NLP benchmarks and their characteristics

benchmarks = {

'GLUE': {

'description': 'General Language Understanding Evaluation',

'tasks': ['CoLA', 'SST-2', 'MRPC', 'QQP', 'STS-B', 'MNLI', 'QNLI', 'RTE', 'WNLI'],

'metrics': ['Matthews Corr', 'Accuracy', 'F1/Acc', 'F1/Acc', 'Pearson/Spearman',

'Matched/Mismatched Acc', 'Accuracy', 'Accuracy', 'Accuracy'],

'human_baseline': 87.1,

'best_model_2024': 91.3,

'saturation': 'Yes'

},

'SuperGLUE': {

'description': 'Harder NLU benchmark',

'tasks': ['BoolQ', 'CB', 'COPA', 'MultiRC', 'ReCoRD', 'RTE', 'WiC', 'WSC'],

'metrics': ['Accuracy', 'F1/Accuracy', 'Accuracy', 'F1a/EM', 'F1/EM',

'Accuracy', 'Accuracy', 'Accuracy'],

'human_baseline': 89.8,

'best_model_2024': 90.4,

'saturation': 'Near'

},

'SQuAD 2.0': {

'description': 'Question Answering with unanswerable questions',

'tasks': ['Extractive QA'],

'metrics': ['EM', 'F1'],

'human_baseline': 89.5,

'best_model_2024': 93.2,

'saturation': 'Yes'

},

'MMLU': {

'description': 'Massive Multitask Language Understanding',

'tasks': ['57 subjects from STEM, humanities, social sciences, etc.'],

'metrics': ['Accuracy'],

'human_baseline': 89.8,

'best_model_2024': 86.4,

'saturation': 'No'

}

}

print("Major NLP Benchmarks Overview:")

print("="*70)

for name, info in benchmarks.items():

print(f"\n?? {name}: {info['description']}")

print(f" Tasks: {len(info['tasks'])} - {', '.join(info['tasks'][:3])}{'...' if len(info['tasks']) > 3 else ''}")

print(f" Human Baseline: {info['human_baseline']}")

print(f" Best Model: {info['best_model_2024']}")

print(f" Saturated: {info['saturation']}")

# Visualize model progress on SuperGLUE

models_progress = [

('BERT', 69.0, '2018'),

('RoBERTa', 84.6, '2019'),

('T5-11B', 88.9, '2020'),

('GPT-3', 71.8, '2020'),

('DeBERTa', 89.9, '2021'),

('Human', 89.8, '-'),

('PaLM-540B', 90.4, '2022'),

]

plt.figure(figsize=(10, 6))

names = [m[0] for m in models_progress]

scores = [m[1] for m in models_progress]

colors = ['#3B9797' if m[0] != 'Human' else '#BF092F' for m in models_progress]

bars = plt.barh(names, scores, color=colors)

plt.axvline(x=89.8, color='#BF092F', linestyle='--', label='Human Baseline')

plt.xlabel('SuperGLUE Score')

plt.title('Model Progress on SuperGLUE Benchmark')

plt.legend()

for bar, score in zip(bars, scores):

plt.text(score + 0.5, bar.get_y() + bar.get_height()/2, f'{score}',

va='center', fontsize=9)

plt.tight_layout()

plt.show()

Beyond Accuracy: Behavioral Testing

CheckList proposes testing NLP models like software, with specific test cases for capabilities (vocabulary, negation, coreference), and invariances (typos, synonyms). This reveals failures that aggregate metrics miss.

import numpy as np

# Behavioral testing framework concept

class NLPBehavioralTest:

"""Framework for systematic NLP model testing."""

def __init__(self, model_predict_fn):

self.model = model_predict_fn

self.results = {'passed': 0, 'failed': 0, 'failures': []}

def minimum_functionality_test(self, test_cases):

"""Test basic capabilities with clear expected outputs."""

print("\n?? Minimum Functionality Tests:")

for text, expected in test_cases:

pred = self.model(text)

passed = pred == expected

status = '?' if passed else '?'

if passed:

self.results['passed'] += 1

else:

self.results['failed'] += 1

self.results['failures'].append((text, expected, pred))

print(f" {status} '{text}' ? Expected: {expected}, Got: {pred}")

def invariance_test(self, original, perturbations):

"""Test that model prediction doesn't change with irrelevant perturbations."""

print("\n?? Invariance Tests (should give same prediction):")

original_pred = self.model(original)

print(f" Original: '{original}' ? {original_pred}")

for perturbed in perturbations:

perturbed_pred = self.model(perturbed)

passed = perturbed_pred == original_pred

status = '?' if passed else '?'

if passed:

self.results['passed'] += 1

else:

self.results['failed'] += 1

self.results['failures'].append((perturbed, original_pred, perturbed_pred))

print(f" {status} '{perturbed}' ? {perturbed_pred}")

def directional_test(self, original, modifications, expected_change):

"""Test that specific changes affect prediction in expected direction."""

print(f"\n?? Directional Tests (prediction should become {expected_change}):")

original_pred = self.model(original)

print(f" Original: '{original}' ? {original_pred}")

for modified in modifications:

modified_pred = self.model(modified)

passed = modified_pred == expected_change

status = '?' if passed else '?'

if passed:

self.results['passed'] += 1

else:

self.results['failed'] += 1

print(f" {status} '{modified}' ? {modified_pred}")

# Simulated sentiment classifier

def mock_sentiment_model(text):

text_lower = text.lower()

# Simplistic rule-based (represents model weaknesses)

if 'not' in text_lower and 'great' in text_lower:

return 'positive' # Bug: doesn't handle negation properly

elif any(w in text_lower for w in ['great', 'excellent', 'amazing', 'love']):

return 'positive'

elif any(w in text_lower for w in ['terrible', 'awful', 'hate', 'bad']):

return 'negative'

return 'neutral'

# Run behavioral tests

tester = NLPBehavioralTest(mock_sentiment_model)

# Test 1: Basic functionality

tester.minimum_functionality_test([

('I love this product!', 'positive'),

('This is terrible.', 'negative'),

('The movie was okay.', 'neutral'),

])

# Test 2: Invariance to typos/formatting

tester.invariance_test(

'I love this product!',

[

'I love this product.', # Punctuation change

'I LOVE this product!', # Case change

'I love this product!', # Extra space

]

)

# Test 3: Negation handling

tester.directional_test(

'The movie was great!',

['The movie was not great!', 'The movie was not great at all.'],

'negative'

)

print(f"\n?? Test Summary: {tester.results['passed']} passed, {tester.results['failed']} failed")

if tester.results['failures']:

print("Failures reveal model weaknesses in negation handling!")LLM-as-Judge & Auto-Raters

Traditional metrics like BLEU and ROUGE compare generated text to reference answers using surface-level n-gram overlap. But for open-ended generation tasks — creative writing, conversational responses, code explanations, brainstorming — there is no single correct reference. Human evaluation has long been the gold standard, but it is slow (days), expensive ($5-20 per example), and difficult to reproduce.

LLM-as-Judge (also called auto-raters) solves this by using a powerful language model to evaluate the output of another model. The judge LLM receives the prompt, the generated response, and a rubric (scoring criteria), then produces a score and justification. Research shows that strong LLMs (GPT-4, Claude) achieve >80% agreement with human annotators on many evaluation tasks — comparable to inter-annotator agreement between humans themselves.

| Evaluation Method | Cost per 1K Items | Latency | Reproducibility | Best For |

|---|---|---|---|---|

| Human Evaluation | $5,000–20,000 | Days–weeks | Moderate (inter-annotator variance) | Nuanced judgment, safety, creativity |

| Reference-Based Metrics (BLEU/ROUGE) | ~$0 (compute only) | Seconds | Perfect | Translation, summarization with references |

| LLM-as-Judge (Single) | $2–50 (API cost) | Minutes | High (with temperature=0) | Open-ended generation, instruction following |

| LLM-as-Judge (Pairwise) | $4–100 (API cost) | Minutes | High | Model comparison, A/B testing |

| Model-Based Metrics (BERTScore) | ~$0 (local GPU) | Seconds | Perfect | Semantic similarity to references |

Common LLM-as-Judge Patterns

- Pointwise Scoring: Judge rates a single response on a 1-5 scale against a rubric. Fast but can suffer from position bias and inconsistent calibration.

- Pairwise Comparison: Judge chooses the better response between two candidates. More reliable than pointwise; used by Chatbot Arena and AlpacaEval.

- Reference-Guided: Judge compares a response against a gold-standard reference answer. Combines benefits of reference metrics and LLM judgment.

- Multi-Aspect: Judge scores separately on dimensions like helpfulness, harmlessness, factuality, and coherence, then aggregates. Provides richer signal for model improvement.

import numpy as np

def llm_judge_demo():

"""Demonstrate the LLM-as-Judge evaluation pattern.

In production, the 'judge' function would call an LLM API.

Here we simulate the scoring to show the framework.

"""

# Evaluation rubric for helpfulness (1-5 scale)

rubric = """

Score the response on helpfulness (1-5):

5: Comprehensive, accurate, well-structured, directly addresses the question

4: Good answer with minor gaps, mostly accurate

3: Partially helpful, some relevant information but incomplete

2: Marginally relevant, significant inaccuracies or missing key points

1: Unhelpful, irrelevant, or factually wrong

"""

# Simulated evaluation examples

evaluations = [

{

"prompt": "Explain what a neural network is.",

"response_a": "A neural network is a computational model inspired by "

"biological neurons. It consists of layers of interconnected "

"nodes that learn patterns from data through backpropagation.",

"response_b": "Neural networks are AI things that learn stuff.",

"human_score_a": 4,

"human_score_b": 1,

},

{

"prompt": "What are the benefits of exercise?",

"response_a": "Exercise improves cardiovascular health, strengthens muscles, "

"boosts mental health through endorphin release, aids weight "

"management, and reduces risk of chronic diseases like diabetes.",

"response_b": "Exercise makes you stronger and healthier. It's good for "

"your heart and can help you lose weight. Doctors recommend "

"at least 30 minutes per day.",

"human_score_a": 5,

"human_score_b": 4,

},

]

def simulate_judge(response, rubric_text):

"""Simulate LLM judge scoring (in production: API call to GPT-4/Claude).

Uses simple heuristics as a stand-in for actual LLM judgment."""

word_count = len(response.split())

has_specifics = any(w in response.lower() for w in

['specifically', 'example', 'such as', 'including',

'consists', 'through', 'layers', 'reduces', 'improves'])

score = min(5, max(1, word_count // 8 + (2 if has_specifics else 0)))

return score

print("LLM-as-Judge Evaluation Framework")

print("=" * 60)

print(f"\nRubric: {rubric.strip()}\n")

agreements = 0

total = 0

for i, ev in enumerate(evaluations, 1):

print(f"--- Example {i} ---")

print(f"Prompt: {ev['prompt']}")

for label in ['a', 'b']:

response = ev[f'response_{label}']

human = ev[f'human_score_{label}']

auto = simulate_judge(response, rubric)

agreed = abs(human - auto) <= 1 # within 1 point = agreement

agreements += int(agreed)

total += 1

print(f"\n Response {label.upper()}: \"{response[:60]}...\"")

print(f" Human score: {human}/5")

print(f" Auto-rater: {auto}/5 "

f"{'[AGREE]' if agreed else '[DISAGREE]'}")

agreement_rate = agreements / total * 100

print(f"\n{'=' * 60}")

print(f"Agreement rate (within ±1): {agreement_rate:.0f}% "

f"({agreements}/{total})")

print(f"\nIn practice, GPT-4 as judge achieves ~80% agreement")

print("with human annotators — comparable to human-human agreement.")

llm_judge_demo()

import numpy as np

def pairwise_comparison_demo():

"""Demonstrate pairwise LLM-as-Judge comparison.

This is the method used by Chatbot Arena (LMSYS) and AlpacaEval.

"""

comparisons = [

{

"prompt": "Write a haiku about machine learning.",

"model_a": "Data flows like streams\n"

"Patterns emerge from the noise\n"

"Silicon dreams grow",

"model_b": "Machine learning is\n"

"A way to train computers\n"

"Using lots of data",

"expected_winner": "A",

},

{

"prompt": "What is the capital of France?",

"model_a": "The capital of France is Paris.",

"model_b": "Paris is the capital of France. It has been the capital "

"since the 10th century and is home to landmarks like "

"the Eiffel Tower and the Louvre.",

"expected_winner": "B",

}

]

def simulate_pairwise_judge(prompt, resp_a, resp_b):

"""Simulate pairwise comparison. Returns 'A', 'B', or 'Tie'."""

score_a = len(resp_a.split()) + resp_a.count('\n') * 3

score_b = len(resp_b.split()) + resp_b.count('\n') * 3

if abs(score_a - score_b) < 3:

return "Tie"

return "A" if score_a > score_b else "B"

print("Pairwise LLM-as-Judge Comparison")

print("=" * 60)

print("Method: Show both responses to a judge LLM, ask which is better.\n")

for i, comp in enumerate(comparisons, 1):

winner = simulate_pairwise_judge(comp["prompt"],

comp["model_a"],

comp["model_b"])

print(f"--- Comparison {i} ---")

print(f"Prompt: {comp['prompt']}")

print(f" Model A: {comp['model_a'][:50]}...")

print(f" Model B: {comp['model_b'][:50]}...")

print(f" Judge verdict: Model {winner}")

print(f" Expected: Model {comp['expected_winner']}")

print()

# Elo rating from pairwise comparisons (Chatbot Arena method)

print("=" * 60)

print("\nElo Rating System (Chatbot Arena style):")

print("Each pairwise comparison updates model Elo ratings.")

models = {"GPT-4o": 1250, "Claude-3.5-Sonnet": 1245,

"Gemini-1.5-Pro": 1230, "Llama-3.1-405B": 1190,

"Mixtral-8x7B": 1140}

print(f"\n{'Model':<25} {'Elo Rating':<12}")

print("-" * 37)

for model, elo in sorted(models.items(), key=lambda x: -x[1]):

bar = "█" * int((elo - 1100) / 5)

print(f"{model:<25} {elo:<12} {bar}")

print(f"\nElo computed from {'>300K' if True else ''} pairwise human votes.")

print("Models within ~20 Elo points are statistically indistinguishable.")

pairwise_comparison_demo()

Evaluation Frameworks for Open-Ended Tasks

| Framework | Method | Scale | Use Case |

|---|---|---|---|

| Chatbot Arena (LMSYS) | Crowd-sourced pairwise human votes → Elo ratings | 300K+ votes | General chatbot ranking |

| AlpacaEval 2.0 | GPT-4 pairwise comparison vs. reference model | 805 instructions | Fast automated model comparison |

| MT-Bench | GPT-4 multi-turn scoring on 80 prompts | 80 questions × 8 categories | Multi-turn conversation quality |

| WildBench | GPT-4 scoring on real user queries | 1,024 tasks | Real-world instruction following |

| Arena-Hard-Auto | LLM auto-rater on hard prompts from Chatbot Arena | 500 prompts | Discriminating top-tier models |

Key insight: No single evaluation captures model quality comprehensively. Best practice is to combine automated benchmarks (MMLU, HumanEval), LLM-as-judge scoring (MT-Bench, AlpacaEval), and targeted human evaluation on your specific use case.

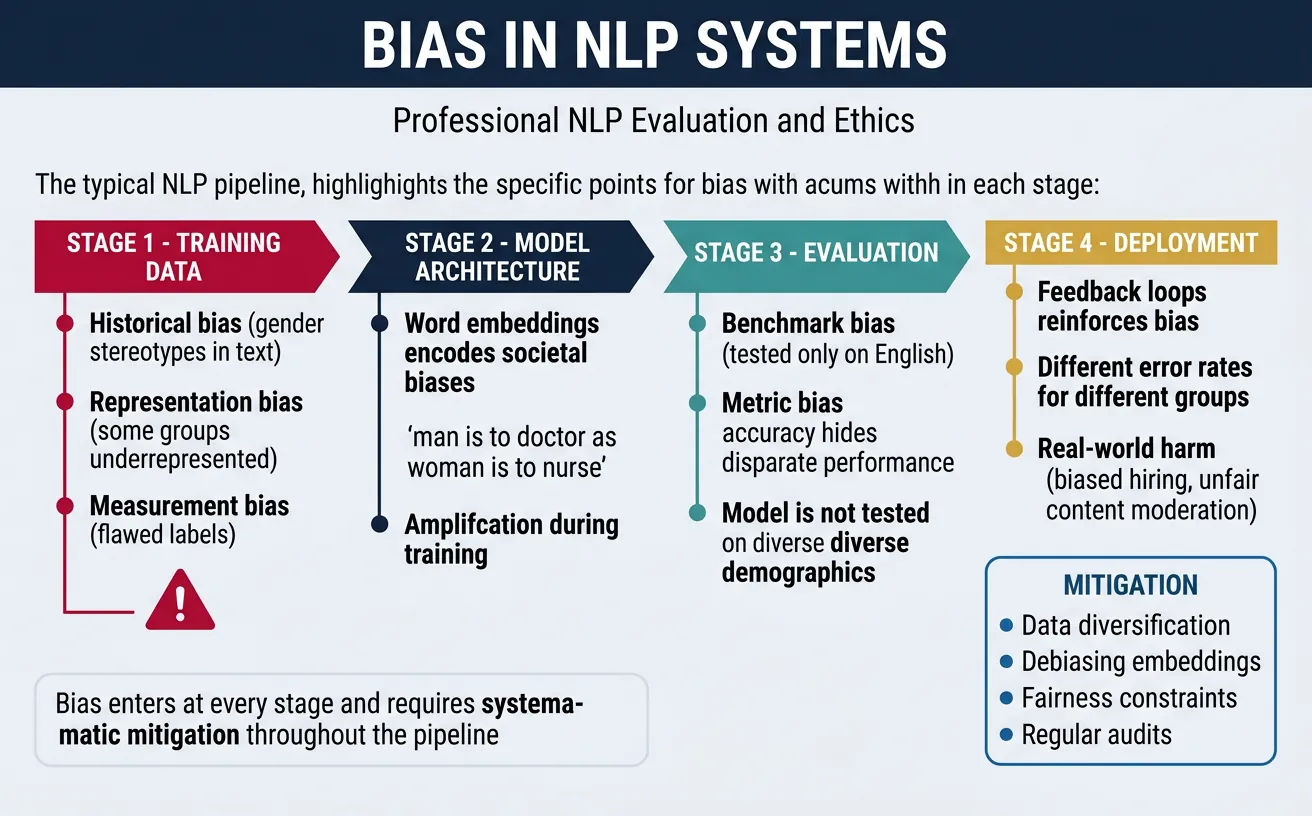

Bias in NLP

Bias in NLP systems is one of the most critical challenges facing the field. Language models learn from human-generated text, which reflects societal biases—stereotypes about gender, race, religion, and other attributes. These biases can cause real harm when NLP systems make decisions about hiring, lending, healthcare, or criminal justice.

Understanding bias requires recognizing its multiple sources: historical bias (prejudices in society reflected in training data), representation bias (underrepresentation of certain groups), measurement bias (flawed data collection), and aggregation bias (one-size-fits-all models that ignore group differences).

Real-World Bias Impact

Documented cases of bias harm include: Resume screening systems penalizing women, sentiment analyzers rating African American English more negatively, translation systems defaulting to male pronouns for "doctor" and female for "nurse", and toxicity detectors disproportionately flagging text mentioning minority groups.

Detecting Bias

Bias detection involves systematically probing models for differential treatment of demographic groups. The Word Embedding Association Test (WEAT) measures bias in word embeddings by comparing associations between concept words and attribute words, similar to the human Implicit Association Test.

import numpy as np

from collections import defaultdict

# Simulated word embeddings for bias demonstration

# In practice, use gensim, fastText, or transformer embeddings

np.random.seed(42)

# Create embeddings with built-in gender bias (for demonstration)

embedding_dim = 50

def create_biased_embeddings():

"""Create word embeddings with known biases for demonstration."""

embeddings = {}

# Gender direction (simplified)

gender_direction = np.random.randn(embedding_dim)

gender_direction = gender_direction / np.linalg.norm(gender_direction)

# Male words - positive on gender direction

male_words = ['man', 'male', 'boy', 'he', 'his', 'father', 'son']

for word in male_words:

base = np.random.randn(embedding_dim) * 0.3

embeddings[word] = base + gender_direction * 0.5

# Female words - negative on gender direction

female_words = ['woman', 'female', 'girl', 'she', 'her', 'mother', 'daughter']

for word in female_words:

base = np.random.randn(embedding_dim) * 0.3

embeddings[word] = base - gender_direction * 0.5

# Career words - slightly biased toward male

career_words = ['engineer', 'scientist', 'doctor', 'lawyer', 'executive', 'programmer']

for word in career_words:

base = np.random.randn(embedding_dim) * 0.3

embeddings[word] = base + gender_direction * 0.15 # Bias!

# Family words - slightly biased toward female

family_words = ['nurse', 'homemaker', 'teacher', 'secretary', 'receptionist']

for word in family_words:

base = np.random.randn(embedding_dim) * 0.3

embeddings[word] = base - gender_direction * 0.15 # Bias!

# Normalize all embeddings

for word in embeddings:

embeddings[word] = embeddings[word] / np.linalg.norm(embeddings[word])

return embeddings, gender_direction

def cosine_similarity(a, b):

return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

def weat_effect_size(X, Y, A, B, embeddings):

"""

Calculate WEAT effect size.

X, Y: Target word sets (e.g., career vs family)

A, B: Attribute word sets (e.g., male vs female)

Measures how much more X is associated with A (vs B)

compared to Y with A (vs B).

"""

def association(word, A, B):

"""Mean similarity to A minus mean similarity to B."""

sim_A = np.mean([cosine_similarity(embeddings[word], embeddings[a]) for a in A])

sim_B = np.mean([cosine_similarity(embeddings[word], embeddings[b]) for b in B])

return sim_A - sim_B

# Association difference between X and Y

X_assoc = [association(x, A, B) for x in X]

Y_assoc = [association(y, A, B) for y in Y]

effect_size = (np.mean(X_assoc) - np.mean(Y_assoc)) / np.std(X_assoc + Y_assoc)

return effect_size, X_assoc, Y_assoc

# Create biased embeddings

embeddings, gender_dir = create_biased_embeddings()

# WEAT Test: Career vs Family, Male vs Female

male_attrs = ['man', 'male', 'he', 'his']

female_attrs = ['woman', 'female', 'she', 'her']

career_words = ['engineer', 'scientist', 'doctor', 'programmer']

family_words = ['nurse', 'homemaker', 'teacher', 'receptionist']

effect, career_assoc, family_assoc = weat_effect_size(

career_words, family_words, male_attrs, female_attrs, embeddings

)

print("Word Embedding Association Test (WEAT) Results:")

print("="*60)

print(f"\nTarget sets: Career vs Family")

print(f"Attribute sets: Male vs Female")

print(f"\nEffect size: {effect:.3f}")

print(" (Positive = Career more associated with Male)")

print(" (Effect size > 0.8 is considered large)")

print("\nPer-word associations (Male - Female):")

for word, assoc in zip(career_words, career_assoc):

direction = '? Male bias' if assoc > 0 else '? Female bias'

print(f" {word:15s}: {assoc:+.3f} {direction}")

for word, assoc in zip(family_words, family_assoc):

direction = '? Male bias' if assoc > 0 else '? Female bias'

print(f" {word:15s}: {assoc:+.3f} {direction}")Beyond word embeddings, we must test bias in downstream applications. This involves creating counterfactual test sets where only demographic information changes, and measuring whether predictions differ.

import numpy as np

from typing import List, Dict, Tuple

class BiasAuditor:

"""

Audit NLP models for demographic bias using counterfactual testing.

"""

def __init__(self, model_predict_fn):

self.model = model_predict_fn

def counterfactual_test(self, templates: List[str],

substitutions: Dict[str, List[str]]) -> Dict:

"""

Test if model predictions change when swapping demographic terms.

templates: Sentences with {placeholder} for demographic terms

substitutions: {placeholder: [term1, term2, ...]}

"""

results = []

for template in templates:

predictions = {}

for placeholder, terms in substitutions.items():

for term in terms:

sentence = template.format(**{placeholder: term})

pred = self.model(sentence)

predictions[term] = pred

results.append({

'template': template,

'predictions': predictions,

'consistent': len(set(predictions.values())) == 1

})

return results

def calculate_demographic_parity(self, results: List[Dict]) -> Dict:

"""

Calculate demographic parity - whether positive rates are equal.

"""

group_positive_rates = {}

for result in results:

for group, pred in result['predictions'].items():

if group not in group_positive_rates:

group_positive_rates[group] = []

group_positive_rates[group].append(1 if pred == 'positive' else 0)

rates = {group: np.mean(preds) for group, preds in group_positive_rates.items()}

max_diff = max(rates.values()) - min(rates.values())

return {'rates': rates, 'max_difference': max_diff}

# Simulated sentiment/toxicity model with bias

def biased_toxicity_model(text: str) -> str:

"""Simulated model that has bias against certain groups."""

text_lower = text.lower()

# Simulate bias: certain groups flagged more often

bias_terms = ['muslim', 'gay', 'black', 'immigrant']

bias_boost = 0.3 if any(term in text_lower for term in bias_terms) else 0

# Base toxicity score

toxic_words = ['hate', 'kill', 'stupid', 'idiot']

base_score = 0.2 + 0.3 * sum(w in text_lower for w in toxic_words)

final_score = base_score + bias_boost

return 'toxic' if final_score > 0.5 else 'not_toxic'

# Audit the model

auditor = BiasAuditor(biased_toxicity_model)

# Test templates

templates = [

"I saw a {identity} person at the store.",

"My neighbor is {identity}.",

"The {identity} community is growing.",

"I talked to a {identity} friend.",

]

identity_terms = {

'identity': ['Christian', 'Muslim', 'Jewish', 'atheist',

'white', 'black', 'Asian', 'Hispanic',

'straight', 'gay', 'American', 'immigrant']

}

results = auditor.counterfactual_test(templates, identity_terms)

print("Bias Audit Results:")

print("="*60)

# Show inconsistent cases (bias detected)

inconsistent = [r for r in results if not r['consistent']]

print(f"\n?? Found {len(inconsistent)} biased templates out of {len(templates)}")

for result in inconsistent:

print(f"\nTemplate: '{result['template']}'")

for term, pred in result['predictions'].items():

flag = '??' if pred == 'toxic' else ' '

print(f" {flag} {term:12s} ? {pred}")

# Calculate demographic parity

parity = auditor.calculate_demographic_parity(results)

print(f"\n?? Demographic Parity Analysis:")

print(f"Max difference in positive rates: {parity['max_difference']:.3f}")

print("(Should be close to 0 for fair model)")

print("\nPositive (toxic) rates by group:")

for group, rate in sorted(parity['rates'].items(), key=lambda x: -x[1]):

bar = '¦' * int(rate * 20)

print(f" {group:12s}: {rate:.2f} {bar}")Mitigating Bias

Bias mitigation strategies operate at different stages: pre-processing (debiasing training data), in-processing (modifying the learning algorithm), and post-processing (adjusting model outputs). Each approach has trade-offs between effectiveness, fairness definitions, and potential performance degradation.

For word embeddings, debiasing techniques like gender-neutral embeddings project out the gender direction while preserving other semantic information. For language models, counterfactual data augmentation balances the training data, while adversarial debiasing trains the model to be unable to predict protected attributes.

import numpy as np

def debias_word_embeddings(embeddings, gender_direction,

definitional_pairs, equalize_pairs):

"""

Debias word embeddings using the method from Bolukbasi et al. (2016).

Steps:

1. Identify gender direction from definitional pairs

2. Neutralize: Remove gender component from non-gendered words

3. Equalize: Make gendered pairs equidistant from neutral words

"""

debiased = {word: emb.copy() for word, emb in embeddings.items()}

# Normalize gender direction

gender_dir = gender_direction / np.linalg.norm(gender_direction)

# Step 1: Identify words to neutralize (non-gendered words)

gendered_words = set()

for pair in definitional_pairs + equalize_pairs:

gendered_words.update(pair)

neutral_words = [w for w in embeddings if w not in gendered_words]

# Step 2: Neutralize - project out gender component

print("Neutralizing non-gendered words...")

for word in neutral_words:

# Remove gender direction component

gender_component = np.dot(debiased[word], gender_dir) * gender_dir

debiased[word] = debiased[word] - gender_component

debiased[word] = debiased[word] / np.linalg.norm(debiased[word])

# Step 3: Equalize - make pairs equidistant from neutral words

print("Equalizing gendered pairs...")

for word1, word2 in equalize_pairs:

if word1 in debiased and word2 in debiased:

# Find midpoint

midpoint = (debiased[word1] + debiased[word2]) / 2

# Remove gender from midpoint

midpoint = midpoint - np.dot(midpoint, gender_dir) * gender_dir

# Set both words to be on opposite sides of midpoint along gender direction

debiased[word1] = midpoint + gender_dir * 0.5

debiased[word2] = midpoint - gender_dir * 0.5

# Normalize

debiased[word1] = debiased[word1] / np.linalg.norm(debiased[word1])

debiased[word2] = debiased[word2] / np.linalg.norm(debiased[word2])

return debiased

# Demonstrate debiasing

print("Word Embedding Debiasing Demonstration:")

print("="*60)

# Using embeddings from earlier

embeddings, gender_dir = create_biased_embeddings()

definitional_pairs = [('man', 'woman'), ('male', 'female'), ('he', 'she')]

equalize_pairs = [('father', 'mother'), ('son', 'daughter')]

debiased_embeddings = debias_word_embeddings(

embeddings, gender_dir, definitional_pairs, equalize_pairs

)

# Compare bias before and after

print("\nBias comparison (male - female association):")

male_attrs = ['man', 'male', 'he']

female_attrs = ['woman', 'female', 'she']

test_words = ['engineer', 'scientist', 'nurse', 'teacher']

print(f"{'Word':15s} | {'Before':10s} | {'After':10s} | {'Change':10s}")

print("-" * 50)

for word in test_words:

# Before debiasing

before_male = np.mean([cosine_similarity(embeddings[word], embeddings[a]) for a in male_attrs])

before_female = np.mean([cosine_similarity(embeddings[word], embeddings[a]) for a in female_attrs])

before_bias = before_male - before_female

# After debiasing

after_male = np.mean([cosine_similarity(debiased_embeddings[word], debiased_embeddings[a]) for a in male_attrs])

after_female = np.mean([cosine_similarity(debiased_embeddings[word], debiased_embeddings[a]) for a in female_attrs])

after_bias = after_male - after_female

change = after_bias - before_bias

print(f"{word:15s} | {before_bias:+.4f} | {after_bias:+.4f} | {change:+.4f}")Counterfactual Data Augmentation

Create balanced training data by generating counterfactual examples where demographic terms are swapped. For every "The doctor finished his shift", add "The doctor finished her shift". This forces the model to learn that occupations aren't inherently gendered.

import re

from typing import List, Tuple

class CounterfactualAugmenter:

"""

Augment training data with counterfactual examples for bias mitigation.

"""

def __init__(self):

# Define swap pairs

self.gender_swaps = {

'he': 'she', 'she': 'he',

'him': 'her', 'her': 'him',

'his': 'her', 'hers': 'his',

'man': 'woman', 'woman': 'man',

'men': 'women', 'women': 'men',

'male': 'female', 'female': 'male',

'father': 'mother', 'mother': 'father',

'son': 'daughter', 'daughter': 'son',

'brother': 'sister', 'sister': 'brother',

'husband': 'wife', 'wife': 'husband',

'boy': 'girl', 'girl': 'boy',

'mr': 'ms', 'ms': 'mr',

'himself': 'herself', 'herself': 'himself',

}

self.name_swaps = {

'john': 'mary', 'mary': 'john',

'james': 'jennifer', 'jennifer': 'james',

'michael': 'sarah', 'sarah': 'michael',

'david': 'emily', 'emily': 'david',

}

def swap_gender(self, text: str) -> str:

"""Swap gender terms in text."""

words = text.split()

swapped_words = []

for word in words:

lower_word = word.lower()

# Check for swap

if lower_word in self.gender_swaps:

new_word = self.gender_swaps[lower_word]

# Preserve capitalization

if word[0].isupper():

new_word = new_word.capitalize()

swapped_words.append(new_word)

elif lower_word in self.name_swaps:

new_word = self.name_swaps[lower_word]

if word[0].isupper():

new_word = new_word.capitalize()

swapped_words.append(new_word)

else:

swapped_words.append(word)

return ' '.join(swapped_words)

def augment_dataset(self, texts: List[str], labels: List[int]) -> Tuple[List[str], List[int]]:

"""Augment dataset with counterfactual examples."""

augmented_texts = []

augmented_labels = []

for text, label in zip(texts, labels):

# Add original

augmented_texts.append(text)

augmented_labels.append(label)

# Add counterfactual if different

counterfactual = self.swap_gender(text)

if counterfactual != text:

augmented_texts.append(counterfactual)

augmented_labels.append(label) # Same label!

return augmented_texts, augmented_labels

# Demonstrate counterfactual augmentation

augmenter = CounterfactualAugmenter()

# Example biased training data

original_texts = [

"The doctor finished his surgery.",

"The nurse helped her patient.",

"John is a great engineer.",

"Mary loves cooking for her family.",

"He became CEO after years of hard work.",

"She was promoted to senior nurse.",

]

original_labels = [1, 1, 1, 1, 1, 1] # All positive sentiment

print("Counterfactual Data Augmentation:")

print("="*60)

for text in original_texts:

counterfactual = augmenter.swap_gender(text)

print(f"Original: {text}")

print(f"Counterfactual: {counterfactual}")

print()

# Full dataset augmentation

aug_texts, aug_labels = augmenter.augment_dataset(original_texts, original_labels)

print(f"Original dataset size: {len(original_texts)}")

print(f"Augmented dataset size: {len(aug_texts)}")

print(f"\nBy training on both versions with the same label,")

print("the model learns that gender shouldn't affect predictions.")Fairness & Equity

Fairness in machine learning is a multifaceted concept with multiple competing definitions that often cannot all be satisfied simultaneously. Understanding these definitions helps you choose appropriate fairness criteria for your specific application context. The choice depends on the domain, stakeholders, and the consequences of different types of errors.

Key fairness definitions include: Demographic Parity (equal positive rates across groups), Equalized Odds (equal true positive and false positive rates), Equal Opportunity (equal true positive rates for those who deserve positive outcomes), and Individual Fairness (similar individuals get similar predictions). Research has shown these definitions can be mutually exclusive.

Fairness Trade-offs

Impossibility Theorem: It's mathematically proven that you cannot simultaneously satisfy demographic parity, equalized odds, and calibration (except in degenerate cases). You must choose which fairness criterion matters most for your application.

import numpy as np

import matplotlib.pyplot as plt

from typing import Dict

class FairnessMetrics:

"""

Calculate various fairness metrics for binary classification.

"""

def __init__(self, y_true, y_pred, sensitive_attr):

"""

y_true: Ground truth labels (0 or 1)

y_pred: Predicted labels (0 or 1)

sensitive_attr: Group membership (0 or 1 for binary groups)

"""

self.y_true = np.array(y_true)

self.y_pred = np.array(y_pred)

self.groups = np.array(sensitive_attr)

def _group_metrics(self, group_mask):

"""Calculate metrics for a specific group."""

y_t = self.y_true[group_mask]

y_p = self.y_pred[group_mask]

tp = np.sum((y_t == 1) & (y_p == 1))

fp = np.sum((y_t == 0) & (y_p == 1))

fn = np.sum((y_t == 1) & (y_p == 0))

tn = np.sum((y_t == 0) & (y_p == 0))

tpr = tp / (tp + fn) if (tp + fn) > 0 else 0 # True Positive Rate

fpr = fp / (fp + tn) if (fp + tn) > 0 else 0 # False Positive Rate

positive_rate = (tp + fp) / len(y_t) if len(y_t) > 0 else 0

return {'tpr': tpr, 'fpr': fpr, 'positive_rate': positive_rate,

'tp': tp, 'fp': fp, 'fn': fn, 'tn': tn, 'n': len(y_t)}

def demographic_parity(self) -> Dict:

"""

Demographic Parity: P(Y_hat=1 | A=0) = P(Y_hat=1 | A=1)

Positive prediction rates should be equal across groups.

"""

metrics_0 = self._group_metrics(self.groups == 0)

metrics_1 = self._group_metrics(self.groups == 1)

gap = abs(metrics_0['positive_rate'] - metrics_1['positive_rate'])

ratio = min(metrics_0['positive_rate'], metrics_1['positive_rate']) / \

max(metrics_0['positive_rate'], metrics_1['positive_rate']) \

if max(metrics_0['positive_rate'], metrics_1['positive_rate']) > 0 else 0

return {

'group_0_rate': metrics_0['positive_rate'],

'group_1_rate': metrics_1['positive_rate'],

'gap': gap,

'ratio': ratio, # 80% rule: ratio >= 0.8 is often used as threshold

'satisfied': gap < 0.1 # Threshold for "fair enough"

}

def equalized_odds(self) -> Dict:

"""

Equalized Odds: Equal TPR and FPR across groups.

P(Y_hat=1 | Y=1, A=a) and P(Y_hat=1 | Y=0, A=a) equal for all a.

"""

metrics_0 = self._group_metrics(self.groups == 0)

metrics_1 = self._group_metrics(self.groups == 1)

tpr_gap = abs(metrics_0['tpr'] - metrics_1['tpr'])

fpr_gap = abs(metrics_0['fpr'] - metrics_1['fpr'])

return {

'group_0_tpr': metrics_0['tpr'],

'group_1_tpr': metrics_1['tpr'],

'group_0_fpr': metrics_0['fpr'],

'group_1_fpr': metrics_1['fpr'],

'tpr_gap': tpr_gap,

'fpr_gap': fpr_gap,

'satisfied': tpr_gap < 0.1 and fpr_gap < 0.1

}

def equal_opportunity(self) -> Dict:

"""

Equal Opportunity: Equal TPR across groups.

Among those who deserve positive outcome, equal chance of getting it.

"""

metrics_0 = self._group_metrics(self.groups == 0)

metrics_1 = self._group_metrics(self.groups == 1)

tpr_gap = abs(metrics_0['tpr'] - metrics_1['tpr'])

return {

'group_0_tpr': metrics_0['tpr'],

'group_1_tpr': metrics_1['tpr'],

'gap': tpr_gap,

'satisfied': tpr_gap < 0.1

}

# Simulate a hiring model with fairness issues

np.random.seed(42)

n_samples = 1000

# Generate data

group = np.random.binomial(1, 0.4, n_samples) # 40% minority group

# True qualification (what hiring SHOULD be based on)

qualification = np.random.binomial(1, 0.5, n_samples)

# Biased model: lower positive rate for minority group

bias_factor = np.where(group == 1, 0.7, 1.0) # Minority gets 70% of their fair chance

y_pred = np.random.binomial(1, qualification * 0.8 * bias_factor)

# For demonstration, true label is qualification

y_true = qualification

# Calculate fairness metrics

fairness = FairnessMetrics(y_true, y_pred, group)

print("Fairness Audit for Hiring Model:")

print("="*60)

print("Groups: 0 = Majority, 1 = Minority")

# Demographic Parity

dp = fairness.demographic_parity()

print(f"\n1. Demographic Parity (equal hiring rates):")

print(f" Majority hiring rate: {dp['group_0_rate']:.3f}")

print(f" Minority hiring rate: {dp['group_1_rate']:.3f}")

print(f" Gap: {dp['gap']:.3f}")

print(f" 80% Rule Ratio: {dp['ratio']:.3f} {'?' if dp['ratio'] >= 0.8 else '? VIOLATION'}")

# Equalized Odds

eo = fairness.equalized_odds()

print(f"\n2. Equalized Odds (equal TPR and FPR):")

print(f" Majority TPR: {eo['group_0_tpr']:.3f}, FPR: {eo['group_0_fpr']:.3f}")

print(f" Minority TPR: {eo['group_1_tpr']:.3f}, FPR: {eo['group_1_fpr']:.3f}")

print(f" TPR Gap: {eo['tpr_gap']:.3f}, FPR Gap: {eo['fpr_gap']:.3f}")

print(f" Satisfied: {'?' if eo['satisfied'] else '?'}")

# Equal Opportunity

eop = fairness.equal_opportunity()

print(f"\n3. Equal Opportunity (equal TPR):")

print(f" Among qualified candidates:")

print(f" Majority hired: {eop['group_0_tpr']:.1%}")

print(f" Minority hired: {eop['group_1_tpr']:.1%}")

print(f" Gap: {eop['gap']:.3f}")

print(f" Satisfied: {'?' if eop['satisfied'] else '?'}")Fairness-Accuracy Trade-off

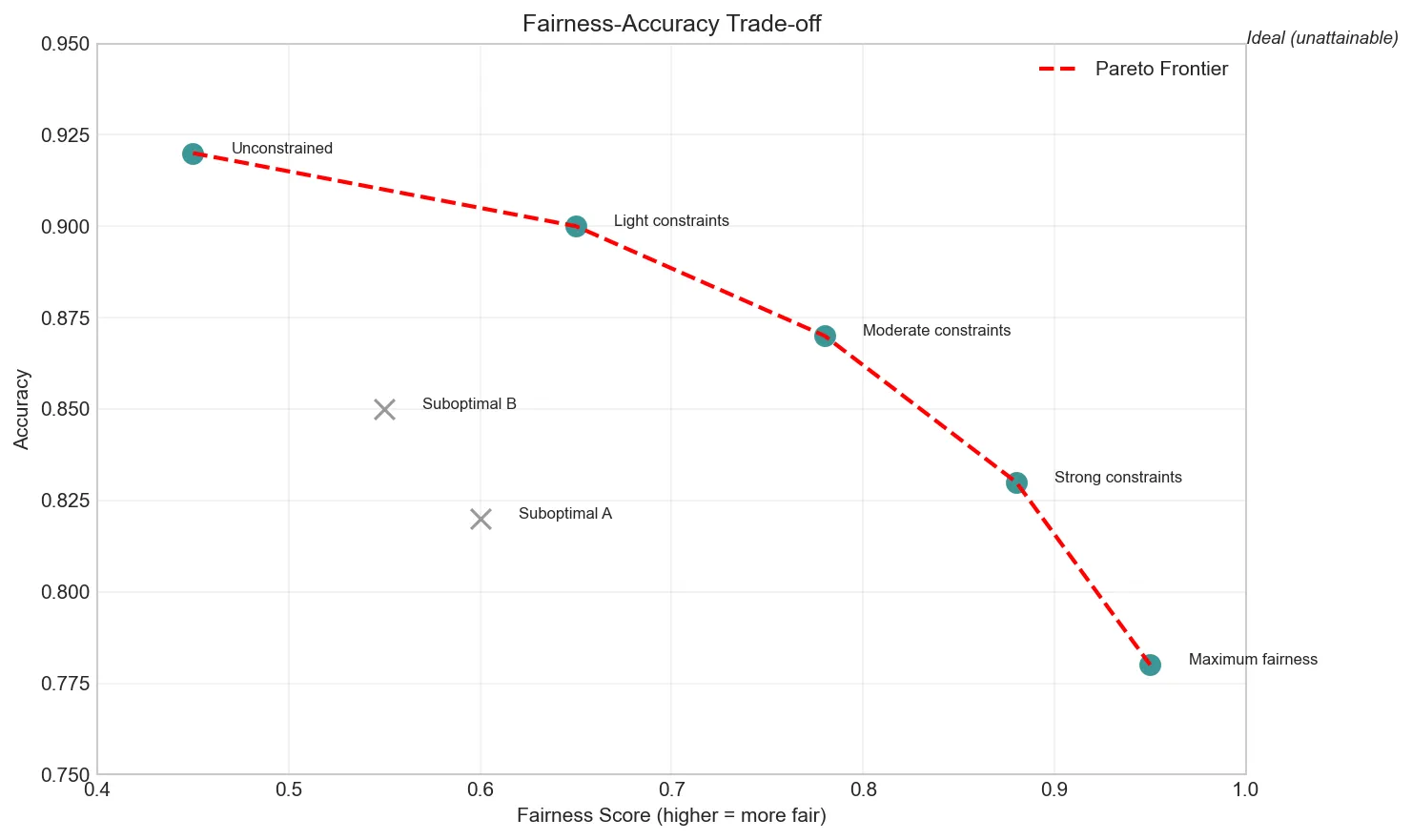

Enforcing fairness constraints often reduces model accuracy. The Pareto frontier represents optimal trade-offs—no model can be better on both fairness AND accuracy than models on this frontier. Stakeholders must decide the acceptable accuracy cost for fairness gains.

import numpy as np

import matplotlib.pyplot as plt

# Simulate fairness-accuracy trade-off

np.random.seed(42)

# Different model configurations with varying fairness constraints

models = [

{'name': 'Unconstrained', 'accuracy': 0.92, 'fairness': 0.45},

{'name': 'Light constraints', 'accuracy': 0.90, 'fairness': 0.65},

{'name': 'Moderate constraints', 'accuracy': 0.87, 'fairness': 0.78},

{'name': 'Strong constraints', 'accuracy': 0.83, 'fairness': 0.88},

{'name': 'Maximum fairness', 'accuracy': 0.78, 'fairness': 0.95},

# Some suboptimal models

{'name': 'Suboptimal A', 'accuracy': 0.82, 'fairness': 0.60},

{'name': 'Suboptimal B', 'accuracy': 0.85, 'fairness': 0.55},

]

# Identify Pareto frontier

def is_pareto_optimal(point, all_points):

"""Check if point is on Pareto frontier."""

for other in all_points:

if other['accuracy'] >= point['accuracy'] and other['fairness'] >= point['fairness']:

if other['accuracy'] > point['accuracy'] or other['fairness'] > point['fairness']:

return False

return True

pareto_models = [m for m in models if is_pareto_optimal(m, models)]

print("Fairness-Accuracy Trade-off Analysis:")

print("="*60)

print(f"{'Model':<25} {'Accuracy':<12} {'Fairness':<12} {'Pareto?':<10}")

print("-" * 60)

for m in models:

is_pareto = '? Optimal' if m in pareto_models else '?'

print(f"{m['name']:<25} {m['accuracy']:<12.2f} {m['fairness']:<12.2f} {is_pareto}")

# Visualization

plt.figure(figsize=(10, 6))

# Plot all models

for m in models:

color = '#3B9797' if m in pareto_models else '#999999'

marker = 'o' if m in pareto_models else 'x'

plt.scatter(m['fairness'], m['accuracy'], c=color, s=100, marker=marker)

plt.annotate(m['name'], (m['fairness'] + 0.02, m['accuracy']), fontsize=8)

# Draw Pareto frontier

pareto_sorted = sorted(pareto_models, key=lambda x: x['fairness'])

fairness_vals = [m['fairness'] for m in pareto_sorted]

accuracy_vals = [m['accuracy'] for m in pareto_sorted]

plt.plot(fairness_vals, accuracy_vals, 'r--', linewidth=2, label='Pareto Frontier')

plt.xlabel('Fairness Score (higher = more fair)')

plt.ylabel('Accuracy')

plt.title('Fairness-Accuracy Trade-off')

plt.legend()

plt.grid(True, alpha=0.3)

plt.xlim(0.4, 1.0)

plt.ylim(0.75, 0.95)

# Add annotation

plt.annotate('Ideal (unattainable)', xy=(1.0, 0.95), fontsize=9, fontstyle='italic')

plt.tight_layout()

plt.show()

print("\n?? Key Insight: Models on the Pareto frontier represent")

print(" optimal trade-offs. Choosing among them is a value judgment.")

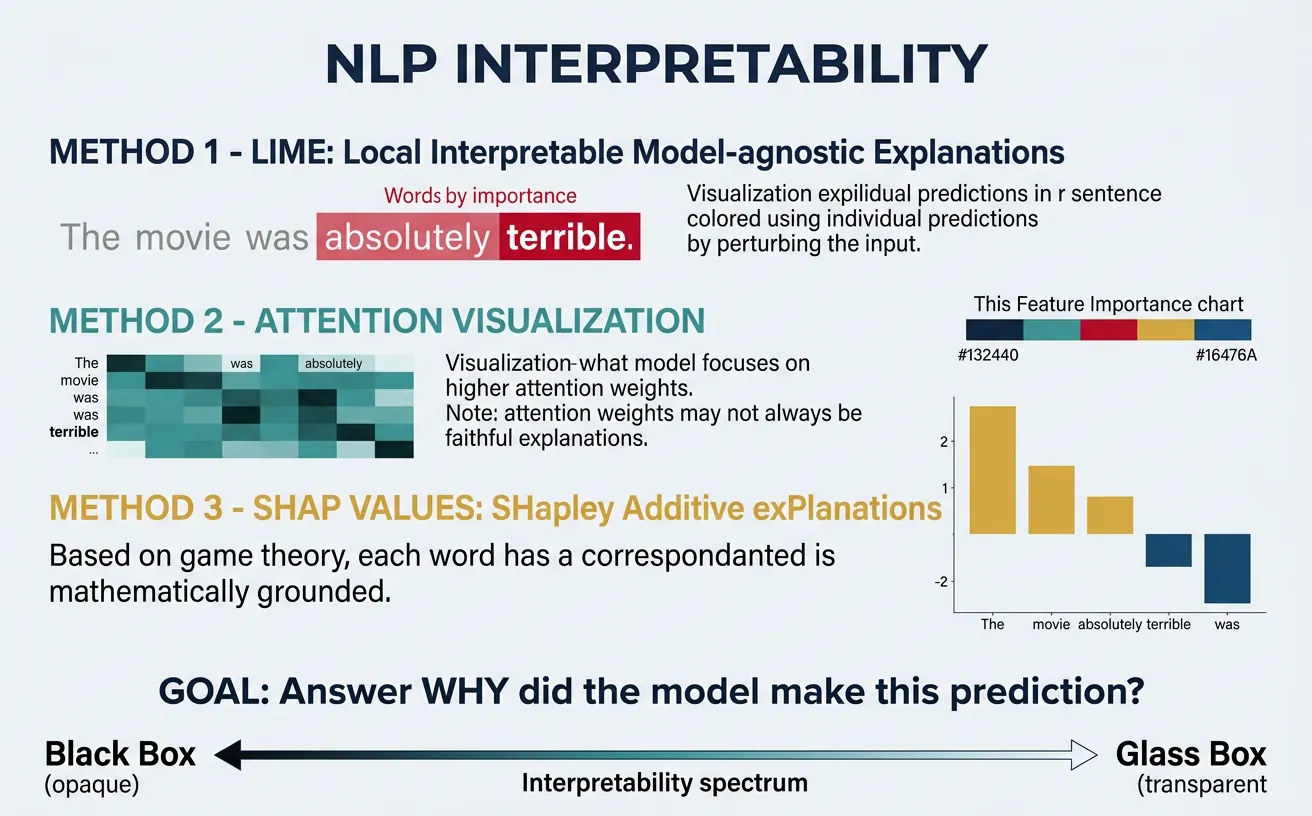

Interpretability & Explainability

As NLP models become more complex—from simple logistic regression to billion-parameter transformers—understanding why they make specific predictions becomes crucial. Interpretability helps debug models, build trust with users, satisfy regulatory requirements, and catch biased or incorrect reasoning before deployment.

We distinguish between intrinsically interpretable models (simple enough to understand directly, like decision trees or attention weights) and post-hoc explanations for black-box models (LIME, SHAP, integrated gradients). Each approach has trade-offs between fidelity to the actual model behavior and human comprehensibility.

Attention ? Explanation

Caution: While attention weights show what the model "looks at," research shows they don't reliably explain why predictions are made. Attention can be manipulated without changing outputs. Use attention as one signal among many, not as definitive explanation.

import numpy as np

import matplotlib.pyplot as plt

class LIME_TextExplainer:

"""

Simplified LIME (Local Interpretable Model-agnostic Explanations)

for text classification.

LIME works by:

1. Perturbing the input (removing words)

2. Getting model predictions for perturbed inputs

3. Fitting a simple linear model locally

4. Using linear coefficients as word importance

"""

def __init__(self, model_predict_proba, num_samples=500):

self.model = model_predict_proba

self.num_samples = num_samples

def explain(self, text, class_idx=1):

"""Explain prediction for a single text."""

words = text.split()

n_words = len(words)

# Generate perturbed samples

perturbations = []

predictions = []

for _ in range(self.num_samples):

# Randomly mask words

mask = np.random.binomial(1, 0.7, n_words) # Keep 70% on average

perturbed_words = [w for w, m in zip(words, mask) if m]

perturbed_text = ' '.join(perturbed_words) if perturbed_words else text

perturbations.append(mask)

predictions.append(self.model(perturbed_text)[class_idx])

# Fit weighted linear regression

X = np.array(perturbations)

y = np.array(predictions)

# Add intercept

X_with_intercept = np.column_stack([np.ones(len(X)), X])

# Solve linear regression (simplified - no weighting)

try:

coefficients = np.linalg.lstsq(X_with_intercept, y, rcond=None)[0]

word_importance = coefficients[1:] # Exclude intercept

except:

word_importance = np.zeros(n_words)

return dict(zip(words, word_importance))

# Simulated sentiment model

def mock_sentiment_model(text):

"""Simulated model that returns [neg_prob, pos_prob]."""

text_lower = text.lower()

positive_words = ['great', 'excellent', 'amazing', 'love', 'wonderful', 'best']

negative_words = ['terrible', 'awful', 'hate', 'worst', 'bad', 'horrible']

pos_count = sum(w in text_lower for w in positive_words)

neg_count = sum(w in text_lower for w in negative_words)

# Softmax-like transformation

pos_score = np.exp(pos_count - neg_count)

total = pos_score + 1

pos_prob = pos_score / total

return [1 - pos_prob, pos_prob]

# Explain a prediction

explainer = LIME_TextExplainer(mock_sentiment_model, num_samples=300)

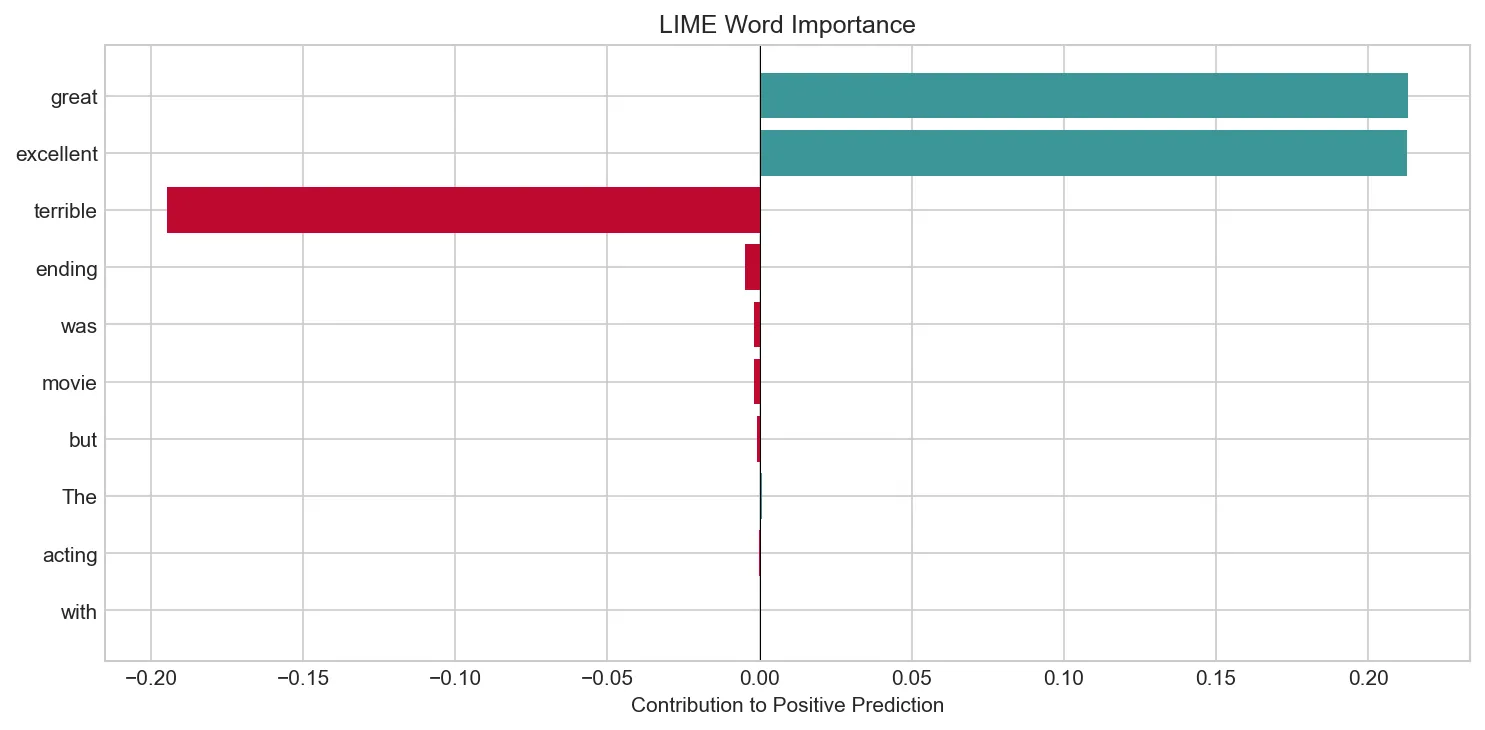

test_text = "The movie was great with excellent acting but terrible ending"

print("LIME Explanation for Text Classification:")

print("="*60)

print(f"Text: '{test_text}'")

probs = mock_sentiment_model(test_text)

print(f"Prediction: Negative={probs[0]:.3f}, Positive={probs[1]:.3f}")

importances = explainer.explain(test_text, class_idx=1) # Explain positive class

print(f"\nWord Importance for POSITIVE class:")

sorted_importance = sorted(importances.items(), key=lambda x: abs(x[1]), reverse=True)

for word, imp in sorted_importance:

direction = '+' if imp > 0 else '-'

bar = '¦' * int(abs(imp) * 50)

print(f" {word:15s}: {direction}{abs(imp):.3f} {bar}")

# Visualize

words = [w for w, _ in sorted_importance]

imps = [i for _, i in sorted_importance]

colors = ['#3B9797' if i > 0 else '#BF092F' for i in imps]

plt.figure(figsize=(10, 5))

plt.barh(words[::-1], imps[::-1], color=colors[::-1])

plt.axvline(x=0, color='black', linewidth=0.5)

plt.xlabel('Contribution to Positive Prediction')

plt.title('LIME Word Importance')

plt.tight_layout()

plt.show()

Integrated Gradients is a principled method for explaining neural network predictions. It computes the gradient of the output with respect to input features, integrated along a path from a baseline (usually all zeros) to the actual input. This satisfies desirable axiomatic properties including completeness (attributions sum to the prediction).

import numpy as np

import matplotlib.pyplot as plt

class IntegratedGradientsExplainer:

"""

Simplified Integrated Gradients for text.

IG computes: (x - baseline) * integral(gradient(x) from baseline to x)

For text, we approximate by:

1. Creating a path from baseline (zeros/padding) to input embeddings

2. Computing gradients at points along the path

3. Summing gradients and multiplying by (input - baseline)

"""

def __init__(self, embedding_fn, model_fn, n_steps=50):

self.embed = embedding_fn

self.model = model_fn

self.n_steps = n_steps

def compute_attributions(self, tokens, target_class=1):

"""Compute IG attributions for each token."""

# Get input embeddings

input_embeds = self.embed(tokens) # shape: (n_tokens, embed_dim)

baseline = np.zeros_like(input_embeds) # Zero baseline

# Compute gradients along path

scaled_inputs = []

for alpha in np.linspace(0, 1, self.n_steps):

scaled = baseline + alpha * (input_embeds - baseline)

scaled_inputs.append(scaled)

# Compute gradients (simplified - in practice use autograd)

gradients = []

for scaled in scaled_inputs:

# Numerical gradient approximation

grad = np.zeros_like(scaled)

epsilon = 1e-4

for i in range(scaled.shape[0]):

for j in range(scaled.shape[1]):

scaled_plus = scaled.copy()

scaled_plus[i, j] += epsilon

scaled_minus = scaled.copy()

scaled_minus[i, j] -= epsilon

out_plus = self.model(scaled_plus)[target_class]

out_minus = self.model(scaled_minus)[target_class]

grad[i, j] = (out_plus - out_minus) / (2 * epsilon)

gradients.append(grad)

# Average gradients

avg_gradients = np.mean(gradients, axis=0)

# Integrated gradients formula

ig = (input_embeds - baseline) * avg_gradients

# Sum over embedding dimension to get per-token attribution

token_attributions = ig.sum(axis=1)

return token_attributions

# Simulated embedding and model for demonstration

np.random.seed(42)

def simple_embedding(tokens):

"""Map tokens to embeddings."""

embed_dim = 10

# Consistent embeddings based on token hash

embeddings = []

for token in tokens:

np.random.seed(hash(token) % 2**32)

embeddings.append(np.random.randn(embed_dim) * 0.1)

return np.array(embeddings)

def simple_model(embeddings):

"""Classify based on embeddings."""

# Sum embeddings and apply simple linear model

pooled = embeddings.mean(axis=0)

# Learned weights (fixed for demo)

np.random.seed(0)

weights = np.random.randn(len(pooled))

logit = np.dot(pooled, weights)

prob = 1 / (1 + np.exp(-logit))

return [1 - prob, prob]

# Note: Full IG computation is slow for demo, showing concept instead

print("Integrated Gradients Concept:")

print("="*60)

print("""

Integrated Gradients computes attribution by:

1. Start from baseline (e.g., zero embedding)

2. Interpolate: x(a) = baseline + a(input - baseline), a ? [0,1]

3. Compute gradients: ?F/?x at each interpolation point

4. Integrate: IG(x) = (x - baseline) × ?0¹ ?F/?x(a) da

Properties:

- Completeness: S IG(x?) = F(x) - F(baseline)

- Sensitivity: If feature matters, attribution ? 0

- Implementation Invariance: Same attributions for equivalent models

Advantage over LIME:

- No approximation with surrogate model

- Mathematically grounded

- Considers feature interactions via gradients

""")

# Visualize concept with toy example

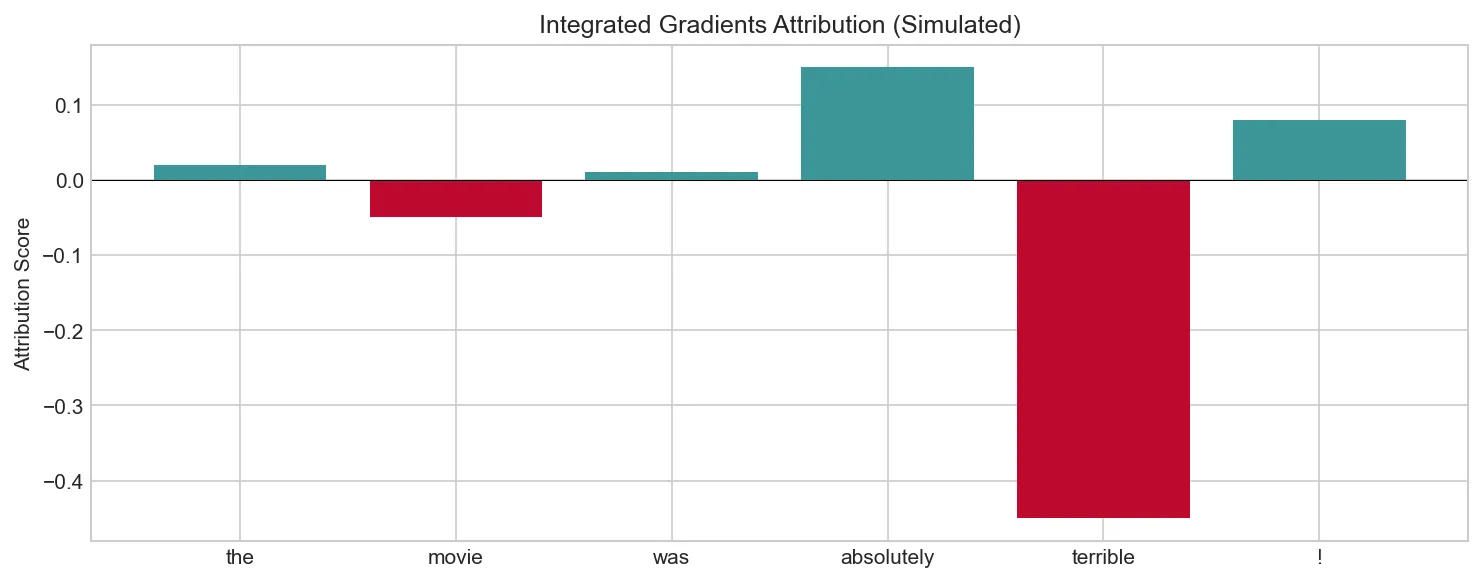

tokens = ['the', 'movie', 'was', 'absolutely', 'terrible', '!']

# Simulated attributions (in practice computed via backprop)

attributions = np.array([0.02, -0.05, 0.01, 0.15, -0.45, 0.08])

plt.figure(figsize=(10, 4))

colors = ['#3B9797' if a > 0 else '#BF092F' for a in attributions]

plt.bar(tokens, attributions, color=colors)

plt.axhline(y=0, color='black', linewidth=0.5)

plt.ylabel('Attribution Score')

plt.title('Integrated Gradients Attribution (Simulated)')

plt.tight_layout()

plt.show()

print("\nInterpretation: 'terrible' has strong negative attribution,")

print("indicating it pushes prediction toward negative class.")

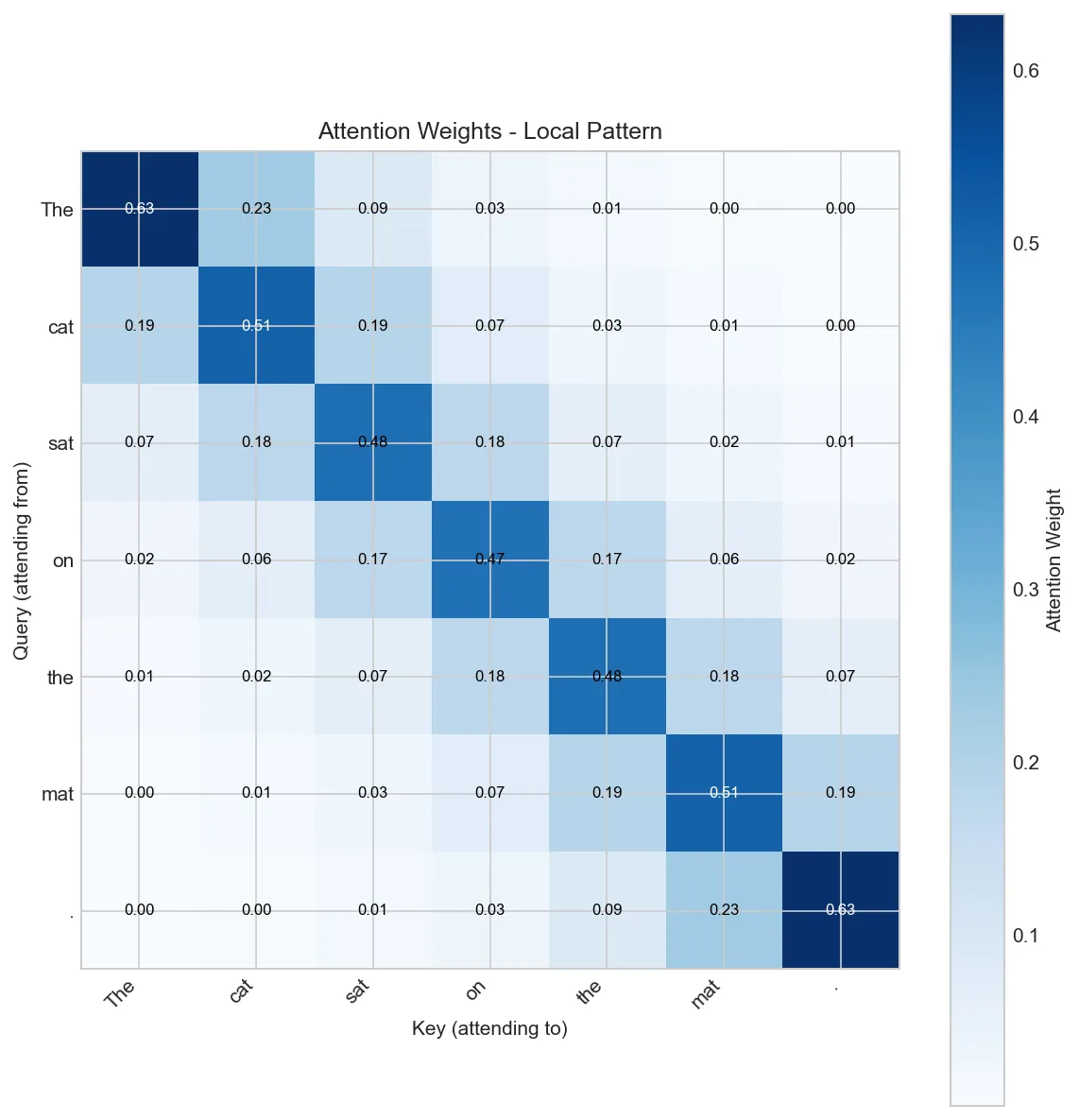

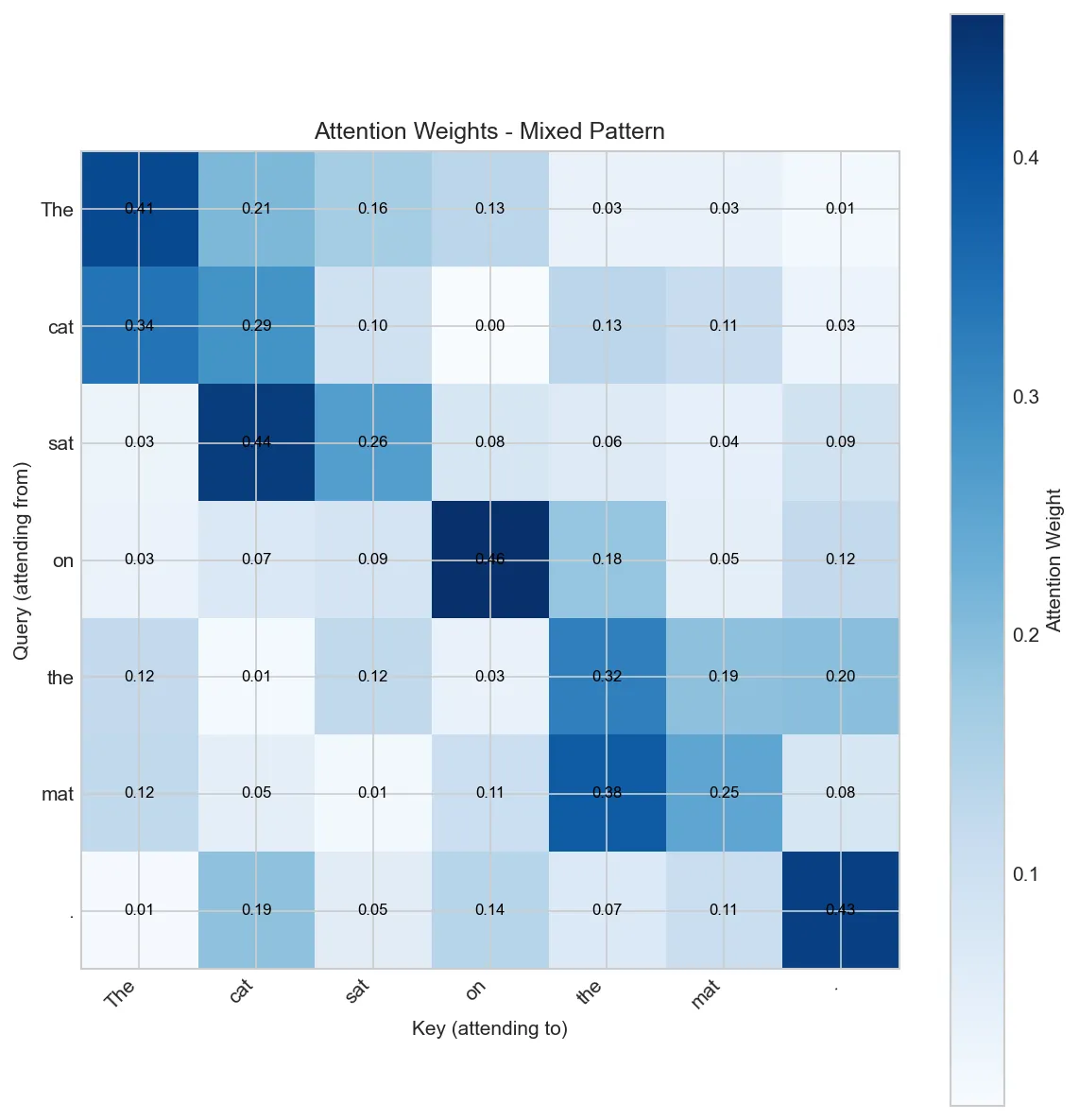

Attention Visualization

While attention weights have limitations as explanations, they remain useful for understanding what patterns the model learns. Visualizing attention can reveal if the model attends to relevant tokens, learns syntactic patterns, or exhibits concerning behaviors like always attending to punctuation.

import numpy as np

import matplotlib.pyplot as plt

def visualize_attention(tokens, attention_matrix, layer_name="Layer 1, Head 1"):

"""

Visualize attention weights as a heatmap.

attention_matrix: (seq_len, seq_len) attention weights

"""

fig, ax = plt.subplots(figsize=(8, 8))

im = ax.imshow(attention_matrix, cmap='Blues')

# Set tick labels

ax.set_xticks(np.arange(len(tokens)))

ax.set_yticks(np.arange(len(tokens)))

ax.set_xticklabels(tokens, rotation=45, ha='right')

ax.set_yticklabels(tokens)

# Add colorbar

cbar = ax.figure.colorbar(im, ax=ax)

cbar.set_label('Attention Weight')

# Add value annotations

for i in range(len(tokens)):

for j in range(len(tokens)):

text = ax.text(j, i, f'{attention_matrix[i, j]:.2f}',

ha='center', va='center', fontsize=8,

color='white' if attention_matrix[i, j] > 0.5 else 'black')

ax.set_xlabel('Key (attending to)')

ax.set_ylabel('Query (attending from)')

ax.set_title(f'Attention Weights - {layer_name}')

plt.tight_layout()

plt.show()

# Simulate attention patterns

np.random.seed(42)

tokens = ['The', 'cat', 'sat', 'on', 'the', 'mat', '.']

n = len(tokens)

# Create realistic attention patterns

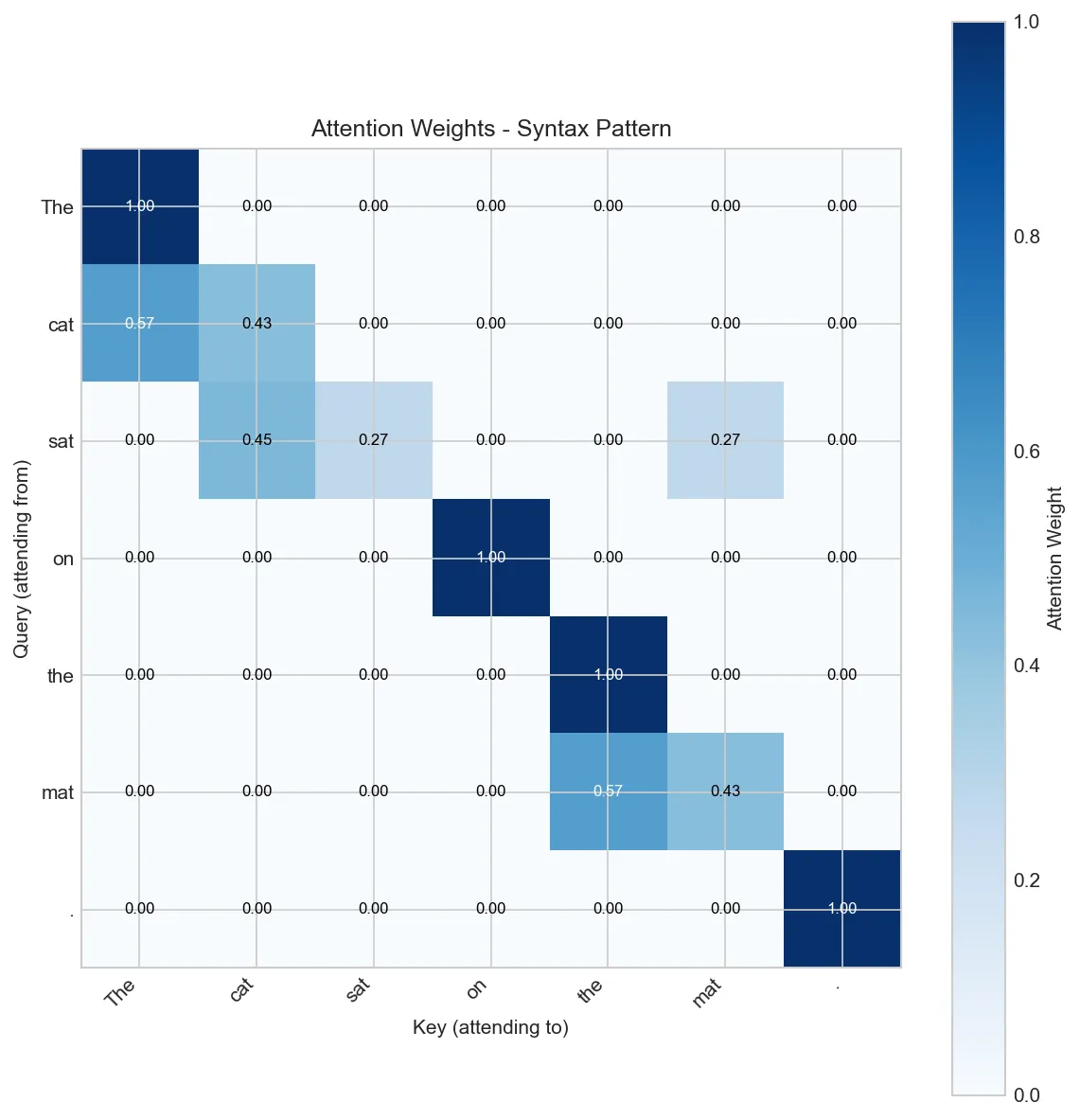

def create_attention_pattern(tokens, pattern_type='mixed'):

"""Create different attention patterns."""

n = len(tokens)

if pattern_type == 'local':

# Attend to nearby tokens

attn = np.zeros((n, n))

for i in range(n):

for j in range(n):

attn[i, j] = np.exp(-abs(i - j))

elif pattern_type == 'syntax':

# Subject-verb, determiner-noun patterns

attn = np.eye(n) * 0.3 # Self-attention base

attn[1, 0] = 0.4 # cat -> The

attn[2, 1] = 0.5 # sat -> cat (subject-verb)

attn[5, 4] = 0.4 # mat -> the

attn[2, 5] = 0.3 # sat -> mat (verb-object)

elif pattern_type == 'mixed':

# Combination of patterns

attn = np.random.rand(n, n) * 0.2

attn += np.eye(n) * 0.3

# Subject-verb connection

attn[2, 1] = 0.6 # sat -> cat

# Determiner-noun

attn[1, 0] = 0.5 # cat -> The

attn[5, 4] = 0.5 # mat -> the

# Normalize rows to sum to 1 (softmax-like)

attn = attn / attn.sum(axis=1, keepdims=True)

return attn

# Show different attention patterns

for pattern in ['local', 'syntax', 'mixed']:

print(f"\nAttention Pattern: {pattern.upper()}")

attn = create_attention_pattern(tokens, pattern)

visualize_attention(tokens, attn, f"{pattern.capitalize()} Pattern")

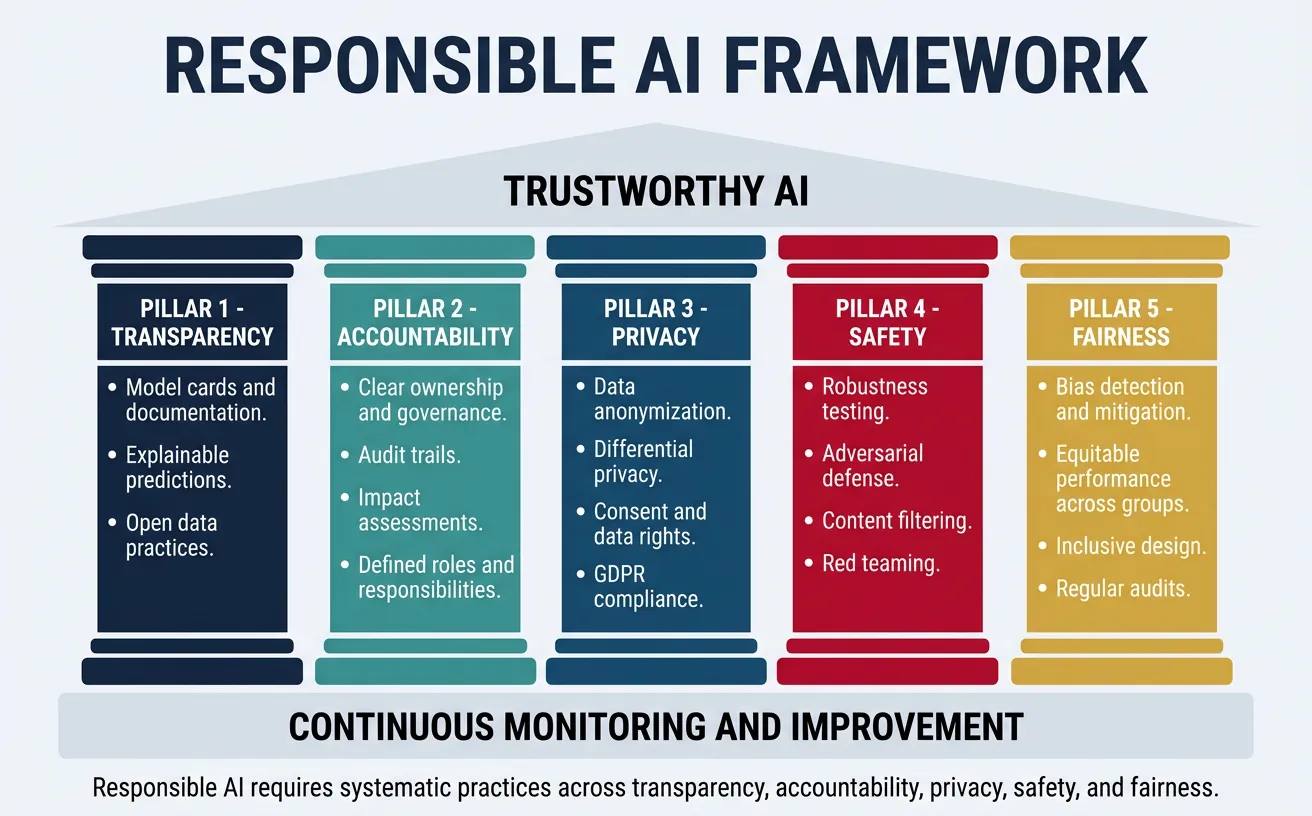

Responsible AI Practices

Responsible AI encompasses the entire lifecycle of NLP systems—from problem formulation through deployment and monitoring. It requires interdisciplinary thinking, combining technical skills with understanding of ethics, law, sociology, and the specific domain where the system will be used. Building responsible NLP systems is not just an ethical imperative but increasingly a legal and business requirement.

Key principles include: Transparency (documenting capabilities and limitations), Accountability (clear ownership of decisions), Privacy (protecting user data), Safety (preventing harmful outputs), and Continuous Monitoring (detecting drift and emerging issues). These principles must be operationalized through concrete practices and tools.

Model Cards & Datasheets

Model Cards document intended uses, limitations, and evaluation metrics. Datasheets for Datasets describe data collection, composition, and known biases. These standardized documentation practices increase transparency and help users make informed decisions about whether a model is appropriate for their use case.

"""

Model Card Template for NLP Models

Based on: Mitchell et al. 2019, "Model Cards for Model Reporting"

"""

class ModelCard:

"""Structured documentation for responsible ML deployment."""

def __init__(self):

self.sections = {}

def set_model_details(self, name, version, type_, developers, license_):

self.sections['Model Details'] = {

'Name': name,

'Version': version,

'Type': type_,

'Developers': developers,

'License': license_,

'Date': 'January 2026',

}

def set_intended_use(self, primary_uses, out_of_scope, users):

self.sections['Intended Use'] = {

'Primary intended uses': primary_uses,

'Out-of-scope uses': out_of_scope,

'Intended users': users,

}

def set_factors(self, relevant_factors, evaluation_factors):

self.sections['Factors'] = {

'Relevant factors': relevant_factors,

'Evaluation factors': evaluation_factors,

}

def set_metrics(self, performance_metrics, decision_thresholds):

self.sections['Metrics'] = {