Observability

System Design Mastery

Introduction to System Design

Fundamentals, why it matters, key conceptsScalability Fundamentals

Horizontal vs vertical scaling, stateless designLoad Balancing & Caching

Algorithms, Redis, CDN patternsDatabase Design & Sharding

SQL vs NoSQL, replication, partitioningMicroservices Architecture

Decomposition, discovery, testing, resilienceAPI Design & REST/GraphQL

RESTful principles, GraphQL, gRPCMessage Queues & Event-Driven

Kafka, outbox, event sourcing, idempotent consumersCAP Theorem & Consistency

Distributed trade-offs, eventual consistencyRate Limiting & Security

Throttling algorithms, DDoS protectionMonitoring & Observability

Logging, metrics, distributed tracingReal-World Case Studies

URL shortener, chat, feed, video streamingData Modeling & Schema Design

Data modeling, schema design, indexingDistributed Systems Deep Dive

Consensus, Paxos, Raft, coordinationAuthentication & Security

OAuth, JWT, zero trust, complianceQuestions & Trade-offs

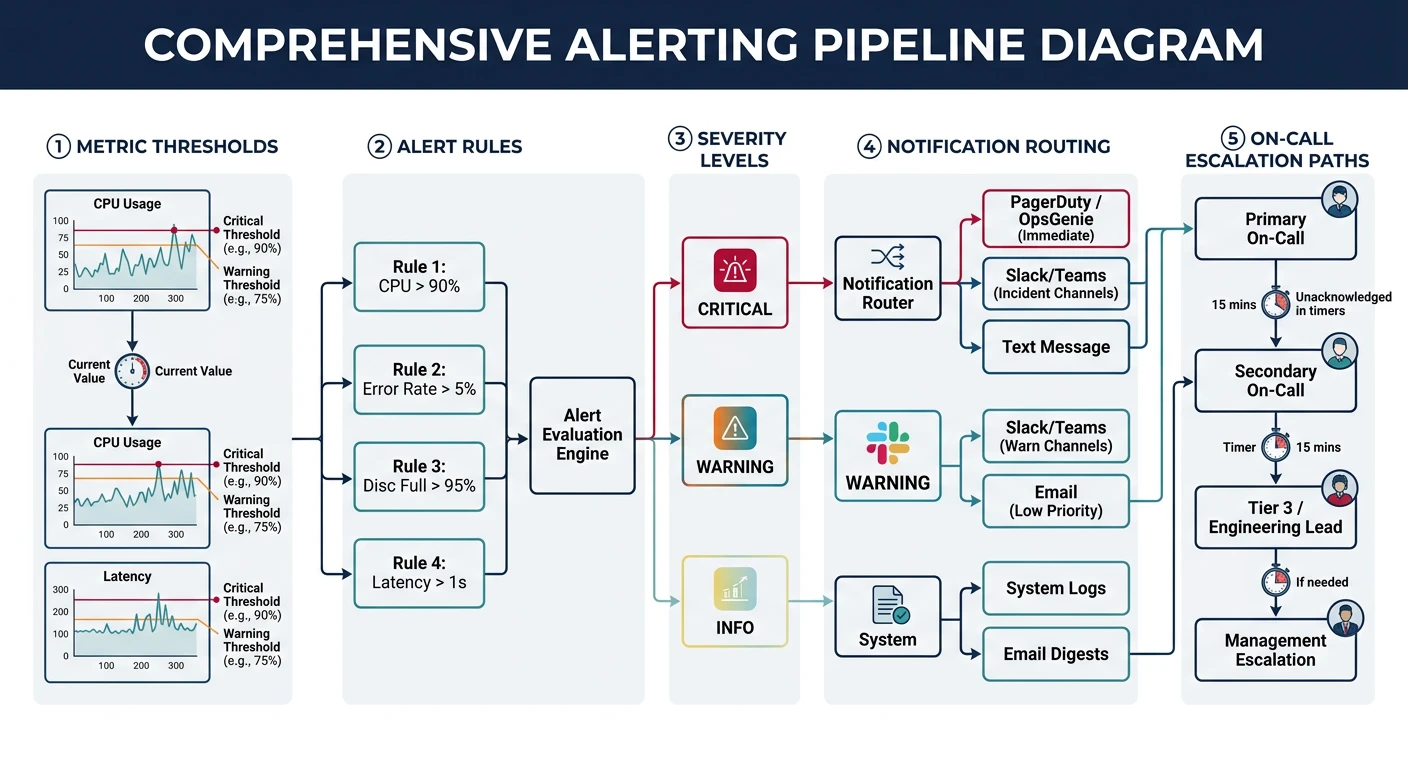

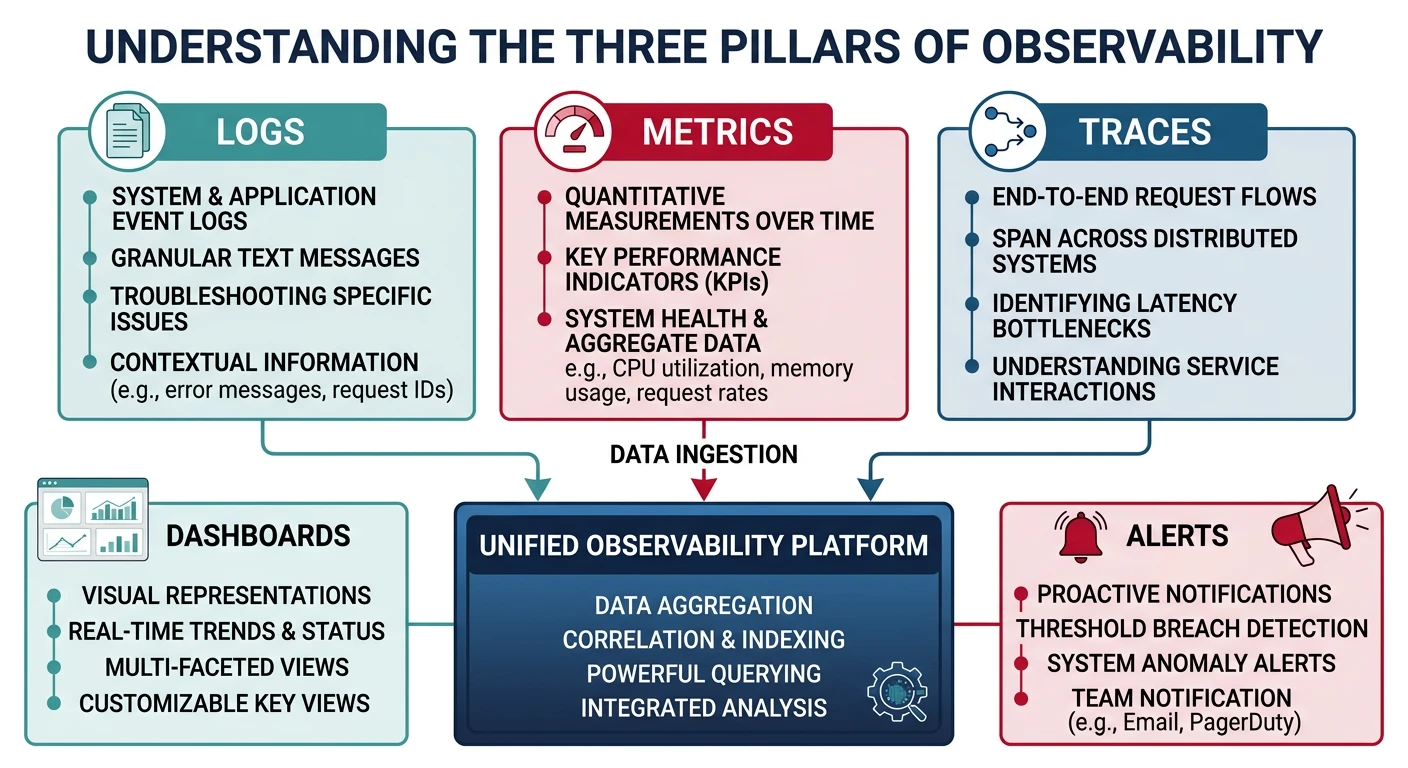

Observability is the ability to understand the internal state of your system by examining its outputs: logs, metrics, and traces. Unlike traditional monitoring, which checks for failure conditions you defined in advance, observability lets you ask questions you didn't anticipate.

The Three Pillars

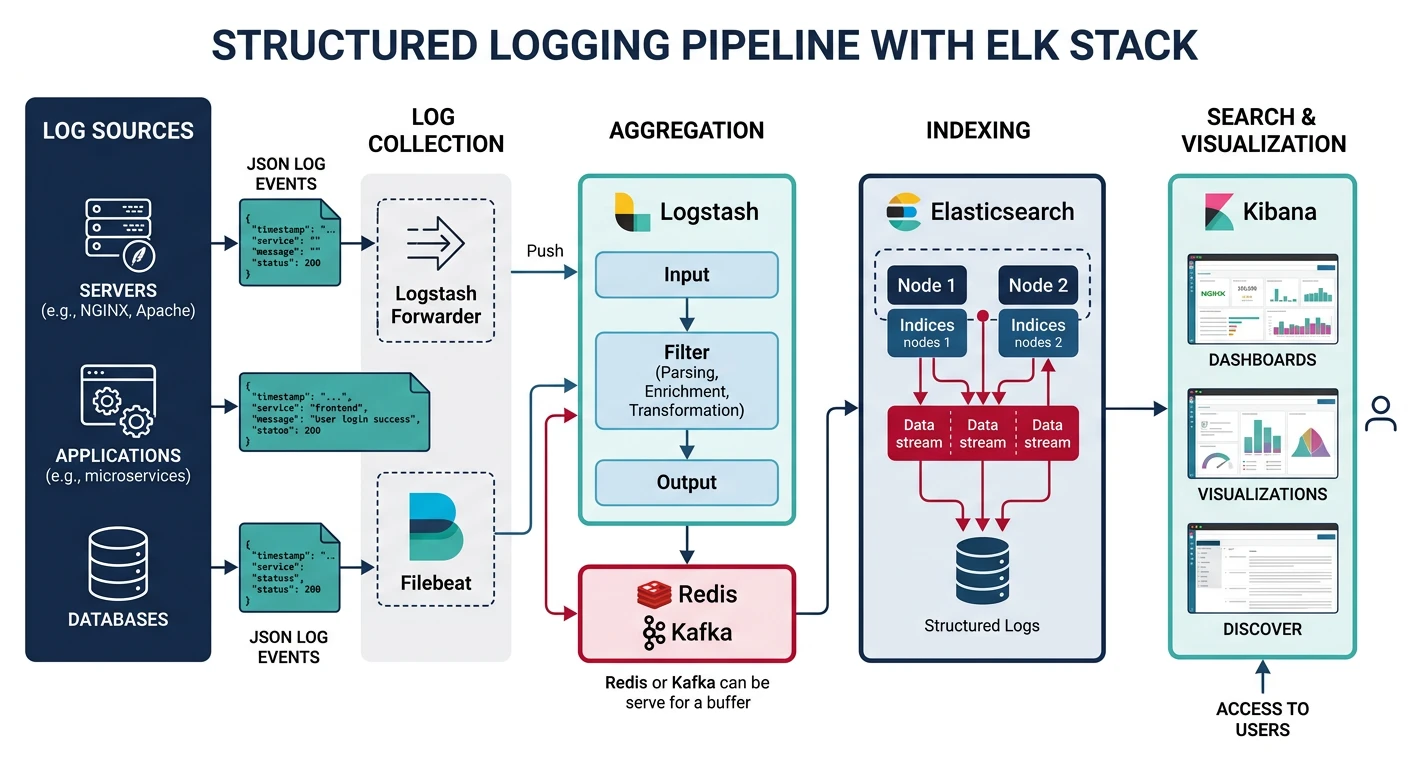

- Logs: Discrete events with context (what happened)

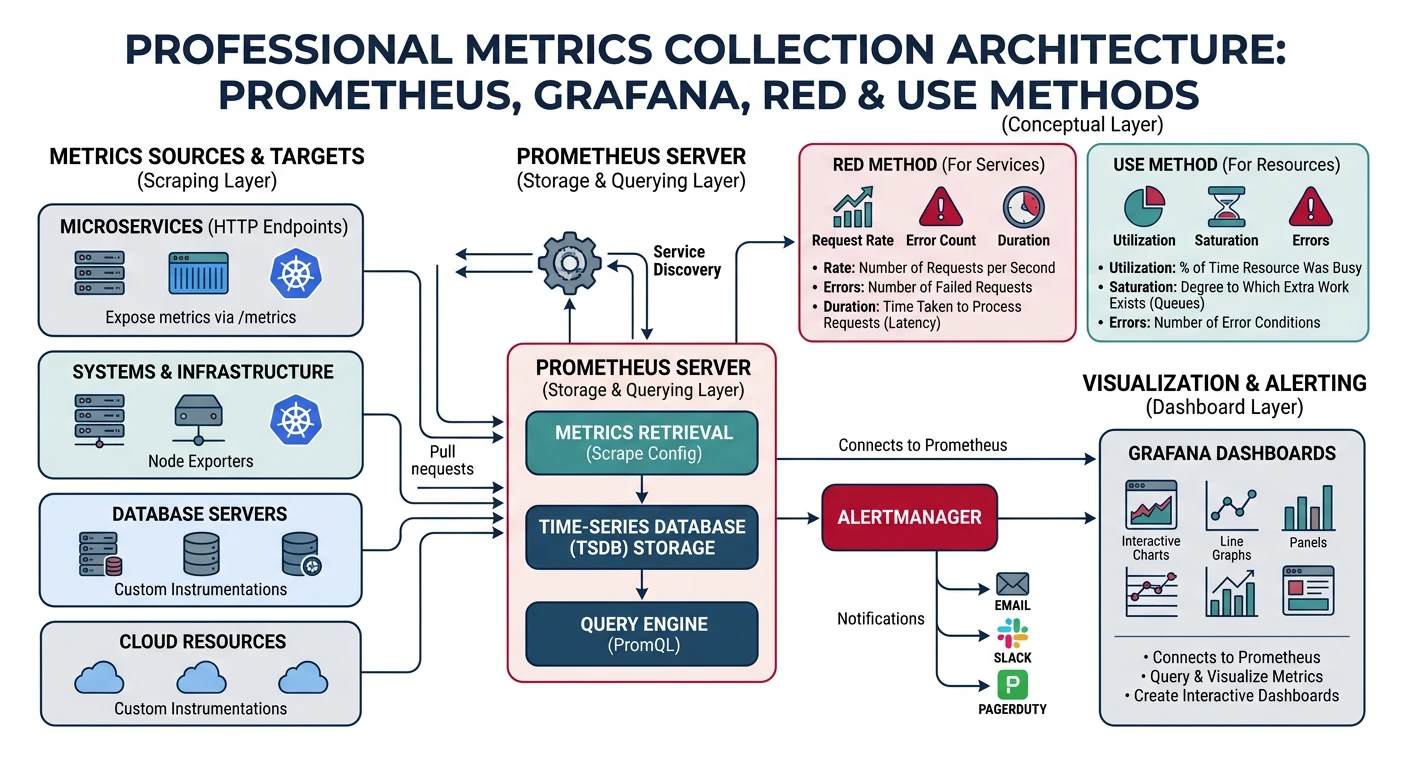

- Metrics: Numeric measurements over time (how much)

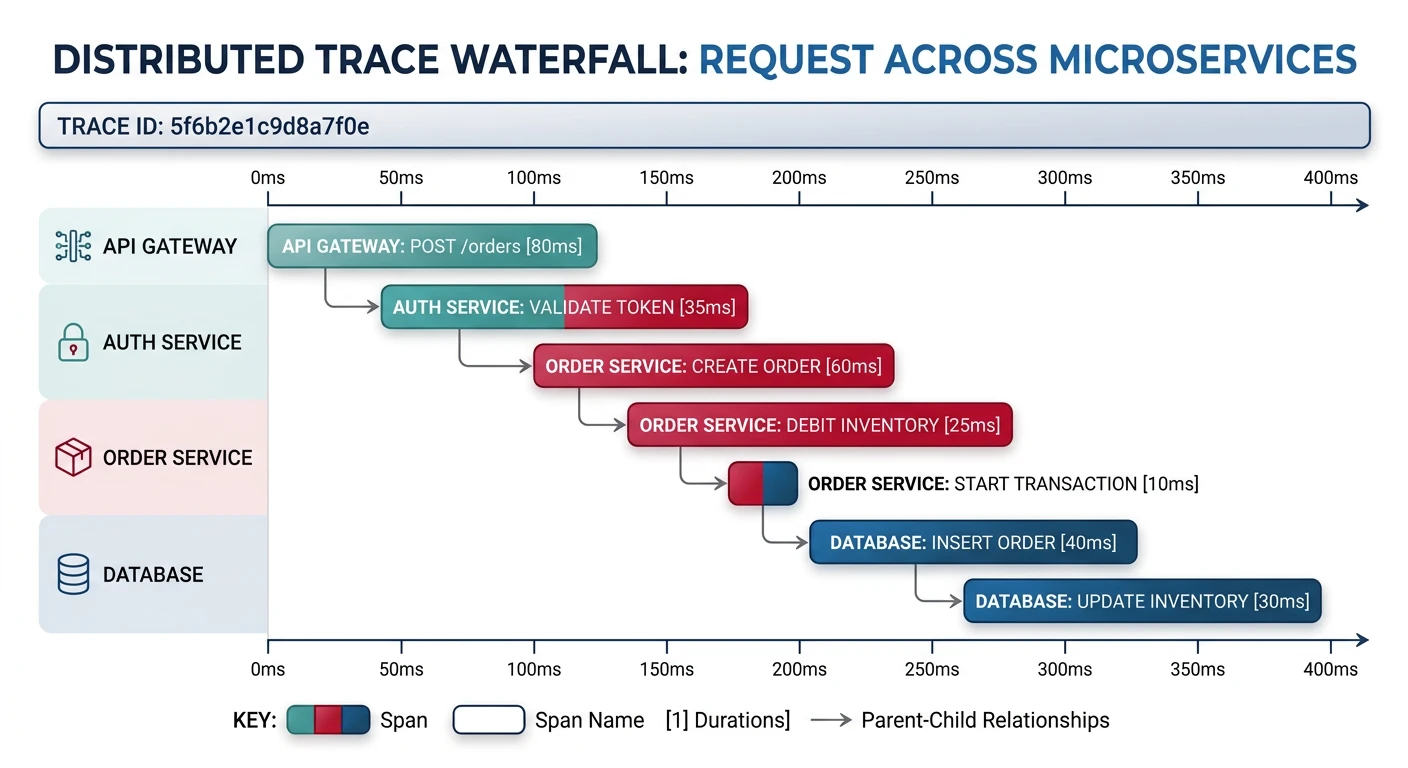

- Traces: Request journey across services (where/how long)
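
To make the three pillars concrete, here is a minimal sketch of a single request emitting one signal of each kind. It uses only the Python standard library and in-memory lists in place of a real backend. The names `log_event`, `increment`, `span`, `handle_checkout`, and the metric name `checkout_requests_total` are all hypothetical, chosen for illustration rather than taken from any particular observability library.

```python
import json
import time
import uuid
from collections import Counter
from contextlib import contextmanager

# Hypothetical in-memory sinks; a real system would ship these to a backend.
LOGS = []            # structured log events (JSON strings)
METRICS = Counter()  # counters keyed by metric name
SPANS = []           # finished trace spans


def log_event(message, **context):
    """Log: a discrete event with context (what happened)."""
    event = {"ts": time.time(), "message": message, **context}
    LOGS.append(json.dumps(event))


def increment(name, value=1):
    """Metric: a numeric measurement aggregated over time (how much)."""
    METRICS[name] += value


@contextmanager
def span(name, trace_id, service):
    """Trace: one timed step of a request's journey (where / how long)."""
    start = time.perf_counter()
    try:
        yield
    finally:
        # Record the span when the step ends, even if it raised.
        SPANS.append({
            "trace_id": trace_id,
            "service": service,
            "name": name,
            "duration_ms": (time.perf_counter() - start) * 1000,
        })


def handle_checkout(user_id):
    """Hypothetical request handler that emits all three signals."""
    trace_id = uuid.uuid4().hex  # shared ID that ties the signals together
    with span("checkout", trace_id, service="api"):
        increment("checkout_requests_total")
        with span("charge_card", trace_id, service="payments"):
            time.sleep(0.01)  # stand-in for a downstream call
        log_event("checkout completed", user_id=user_id, trace_id=trace_id)
    return trace_id


if __name__ == "__main__":
    tid = handle_checkout(user_id=42)
    print(LOGS[-1])
    print(dict(METRICS))
    for s in SPANS:
        if s["trace_id"] == tid:
            print(s["service"], s["name"], round(s["duration_ms"], 1), "ms")
```

Putting the same `trace_id` in the log event and in every span is what lets you move from a metric spike to the affected traces and then to the exact log lines for one request. In this sketch the inner `charge_card` span is recorded before the outer `checkout` span because spans are appended when they finish.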