URL Shortener (like bit.ly)

System Design Mastery

Introduction to System Design

Fundamentals, why it matters, key conceptsScalability Fundamentals

Horizontal vs vertical scaling, stateless designLoad Balancing & Caching

Algorithms, Redis, CDN patternsDatabase Design & Sharding

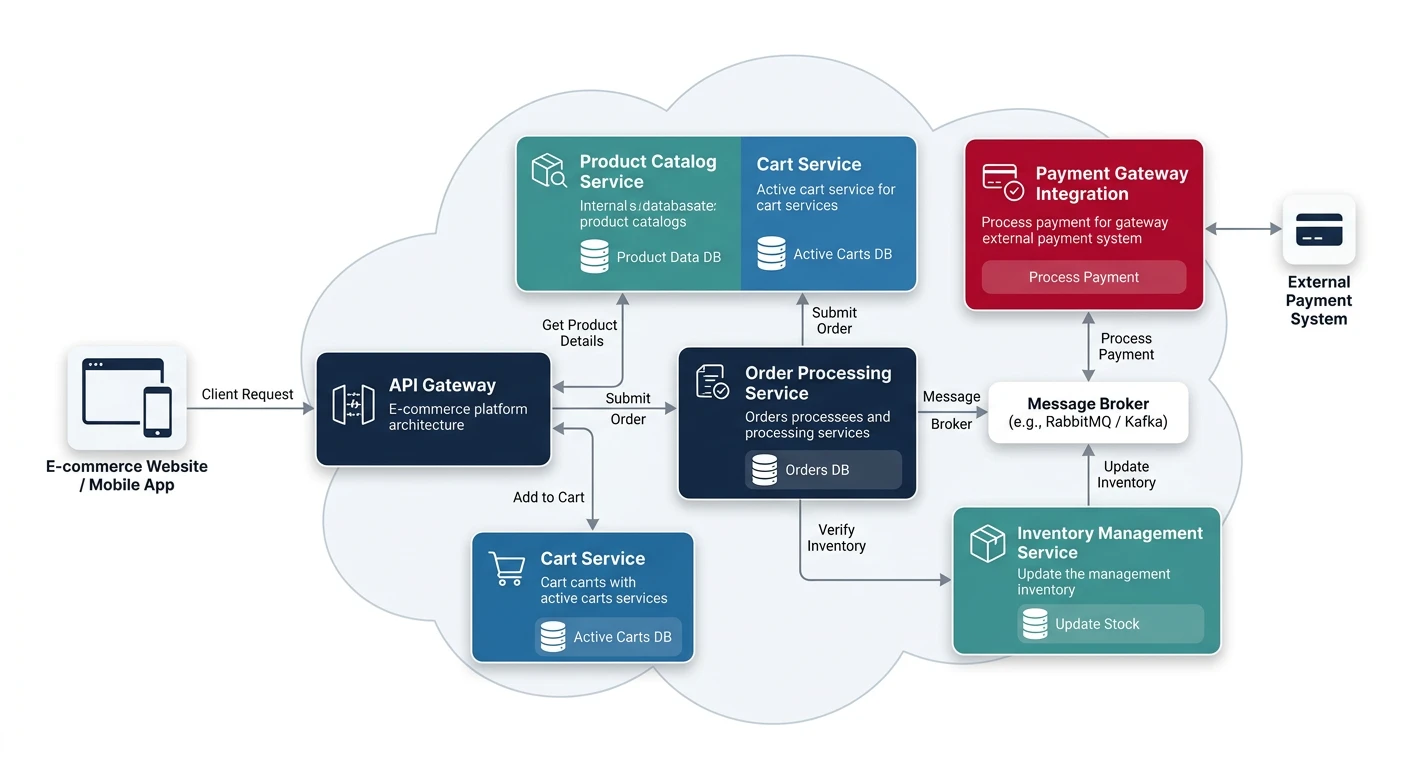

SQL vs NoSQL, replication, partitioningMicroservices Architecture

Decomposition, discovery, testing, resilienceAPI Design & REST/GraphQL

RESTful principles, GraphQL, gRPCMessage Queues & Event-Driven

Kafka, outbox, event sourcing, idempotent consumersCAP Theorem & Consistency

Distributed trade-offs, eventual consistencyRate Limiting & Security

Throttling algorithms, DDoS protectionMonitoring & Observability

Logging, metrics, distributed tracingReal-World Case Studies

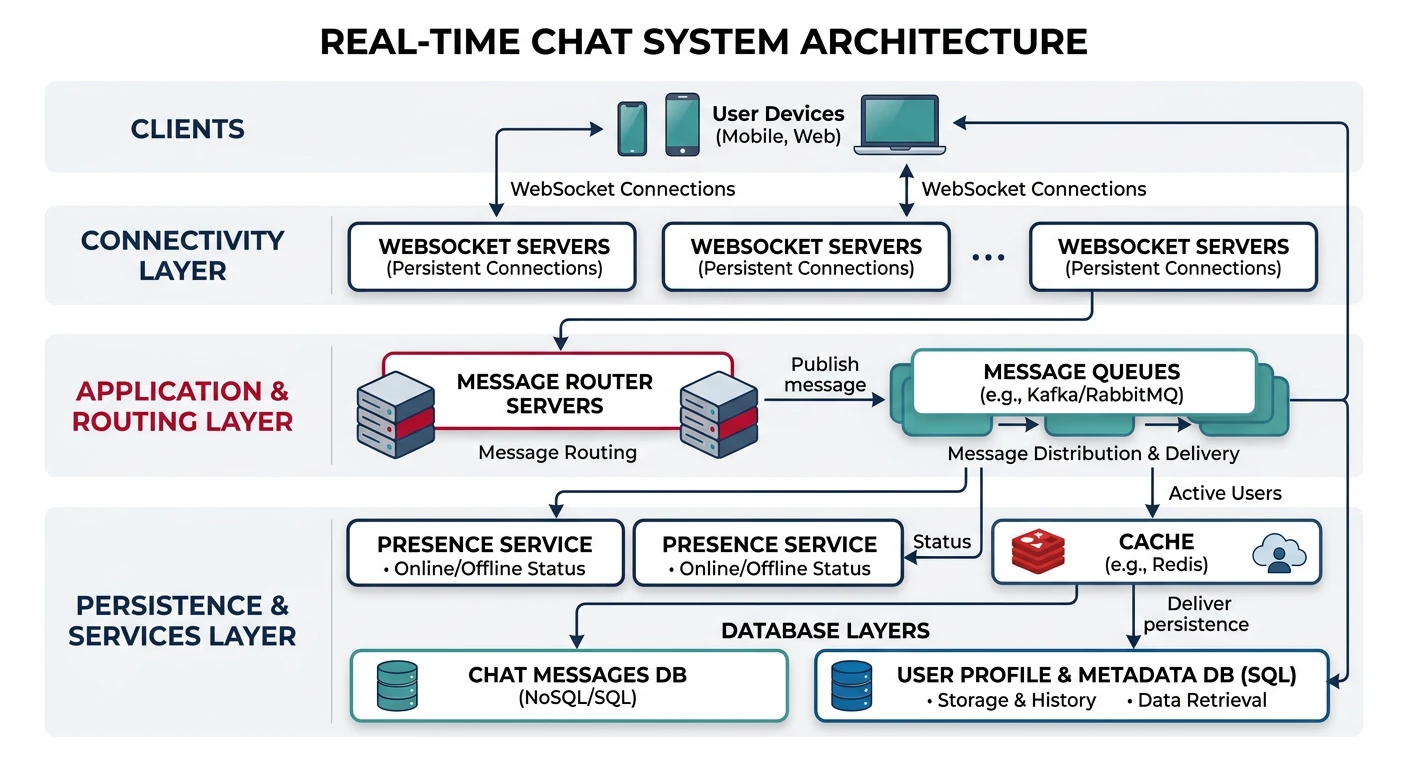

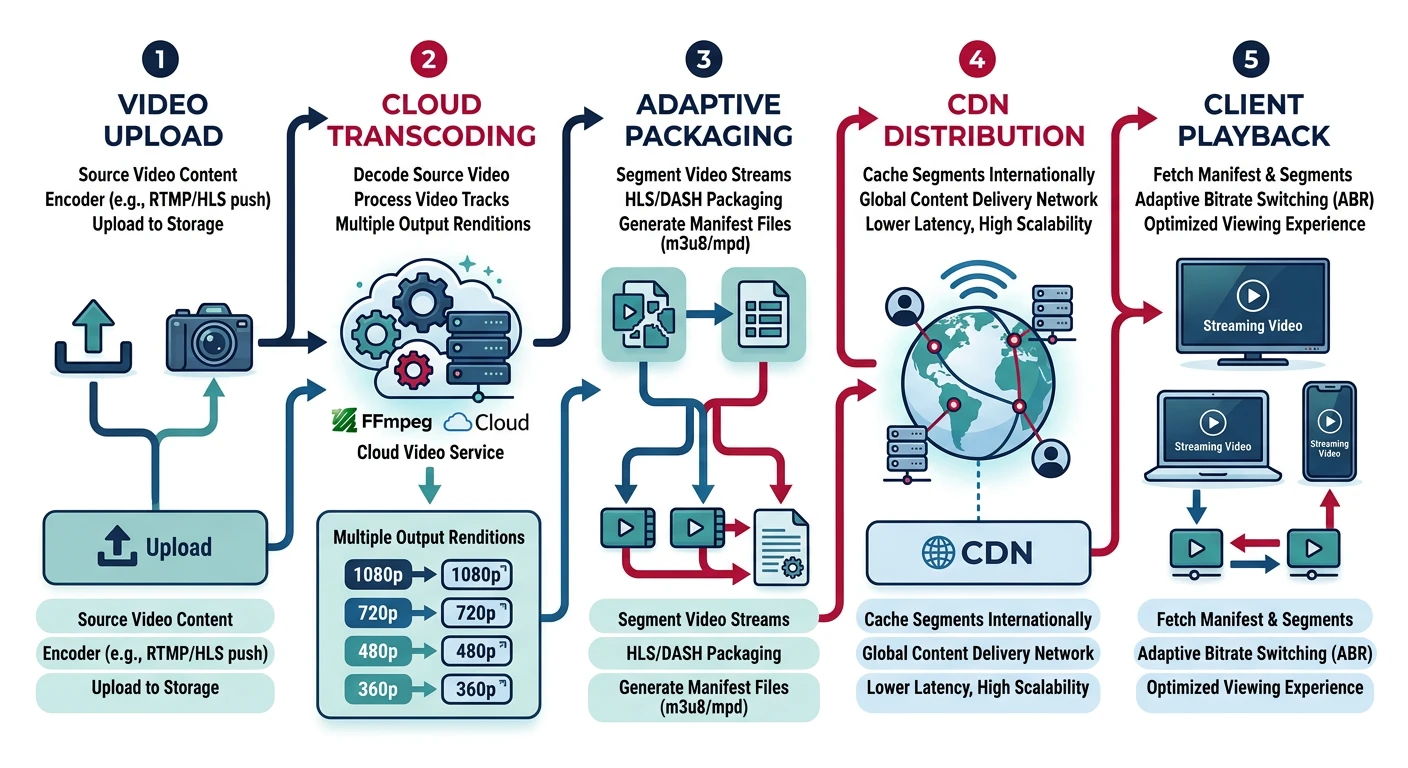

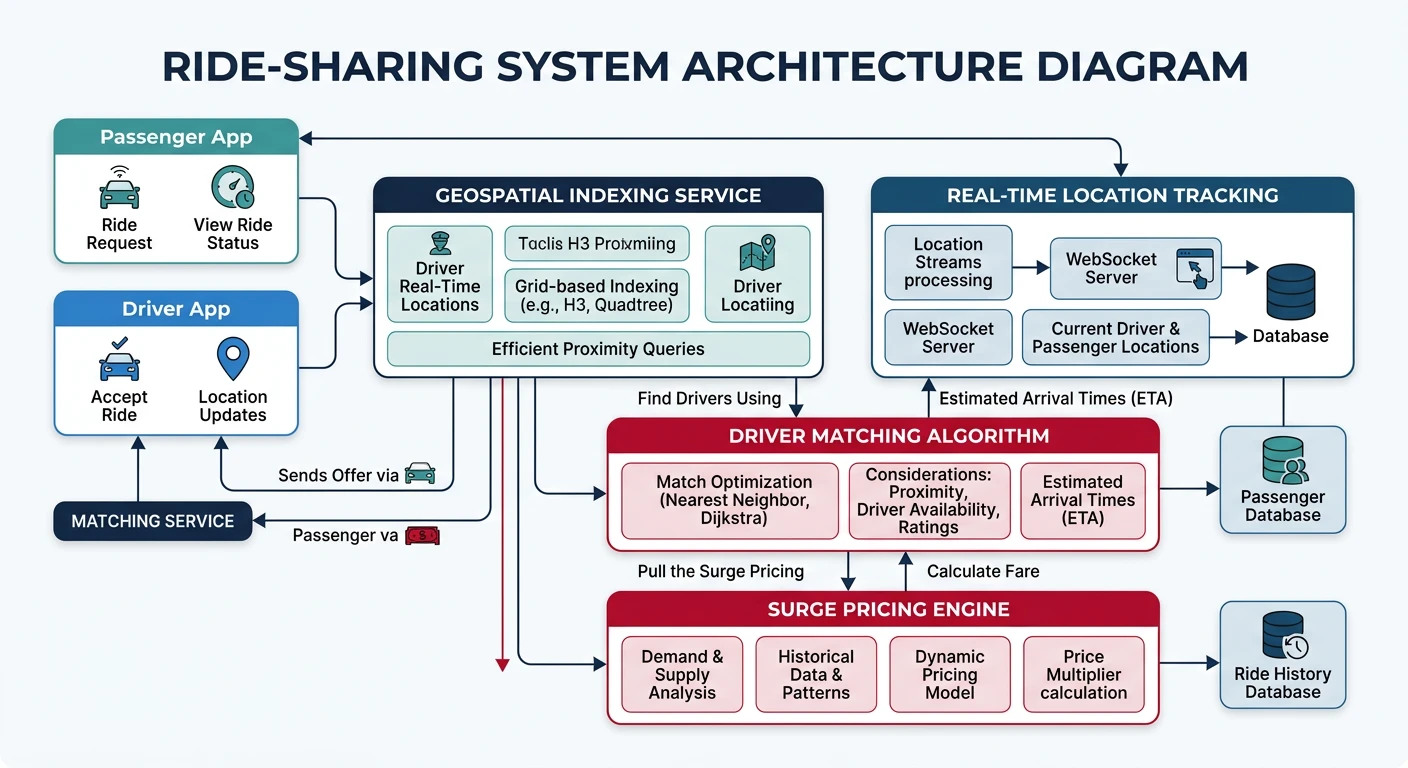

URL shortener, chat, feed, video streamingData Modeling & Schema Design

Data modeling, schema design, indexingDistributed Systems Deep Dive

Consensus, Paxos, Raft, coordinationAuthentication & Security

OAuth, JWT, zero trust, complianceQuestions & Trade-offs

A URL shortener is a classic system design interview question. It tests your understanding of hashing, databases, caching, and read-heavy workloads (a high read-to-write ratio).

Functional Requirements

- Given a long URL, return a short URL

- Given a short URL, redirect to original URL

- Custom short URLs (optional)

- Analytics: click counts, geographic data

- URL expiration (optional)
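To make the requirements above concrete, here is a minimal in-memory sketch in Python. It is illustrative only: the names `InMemoryShortener`, `Link`, and `base62_encode` are hypothetical, short codes are assumed to come from a Base62-encoded counter, and a plain dictionary stands in for the database. Geographic analytics are left out; only click counts are tracked.

```python
import string
import time
from dataclasses import dataclass
from typing import Dict, Optional

# 62 symbols: 0-9, a-z, A-Z (the ordering is an arbitrary choice for this sketch)
ALPHABET = string.digits + string.ascii_lowercase + string.ascii_uppercase


def base62_encode(n: int) -> str:
    """Encode a non-negative integer with the 62-character alphabet."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return ALPHABET[0]
    digits = []
    while n:
        n, rem = divmod(n, 62)
        digits.append(ALPHABET[rem])
    return "".join(reversed(digits))


@dataclass
class Link:
    long_url: str
    expires_at: Optional[float] = None  # Unix timestamp; None means no expiry
    clicks: int = 0


class InMemoryShortener:
    """Hypothetical single-process store; a real service would use a database."""

    def __init__(self) -> None:
        self._links: Dict[str, Link] = {}
        self._next_id = 1

    def shorten(self, long_url: str, alias: Optional[str] = None,
                ttl_seconds: Optional[float] = None,
                now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        if alias is not None:
            # Custom short URL: reject if the code is already in use.
            if alias in self._links:
                raise ValueError("alias already taken")
            code = alias
        else:
            # Generated short URL: skip any code that a custom alias claimed.
            while True:
                code = base62_encode(self._next_id)
                self._next_id += 1
                if code not in self._links:
                    break
        expires_at = None if ttl_seconds is None else now + ttl_seconds
        self._links[code] = Link(long_url, expires_at)
        return code

    def resolve(self, code: str, now: Optional[float] = None) -> Optional[str]:
        """Return the original URL, or None if the code is unknown or expired."""
        now = time.time() if now is None else now
        link = self._links.get(code)
        if link is None:
            return None
        if link.expires_at is not None and now >= link.expires_at:
            return None
        link.clicks += 1  # analytics: count each successful redirect
        return link.long_url

    def clicks(self, code: str) -> int:
        link = self._links.get(code)
        return 0 if link is None else link.clicks
```

In a real deployment `resolve` would sit behind an HTTP redirect handler, and the counter would need to be coordinated across servers; both are outside the scope of this sketch.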

Scale Estimation

# Back-of-envelope estimation

# Assumptions:

# - 100M new URLs per month

# - 100:1 read/write ratio (10B redirects/month)

# Writes: 100M / (30 * 24 * 3600) ≈ 40 URLs/second

# Reads: 40 * 100 = 4,000 redirects/second (peak: 40,000, i.e. 10x the average)

# Storage (5 years):

# - 100M * 12 * 5 = 6B URLs

# - Each URL: ~500 bytes (original URL + metadata)

# - Total: 6B * 500 = 3TB

# Short URL length:

# - Base62 (a-z, A-Z, 0-9) = 62 characters

# - 6 characters: 62^6 = 56.8B combinations (enough for 6B URLs)

# - 7 characters: 62^7 = 3.5T combinations (future-proof)
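The arithmetic above can be checked with a few lines of Python. This is a sketch under the same assumptions as the estimate (100M new URLs per month, a 100:1 read/write ratio, 5 years of retention, roughly 500 bytes per record); the variable names and the `min_code_length` helper are hypothetical. The unrounded write rate is closer to 39 per second, which the estimate rounds up to 40.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # 2,592,000 seconds in a 30-day month

# Assumptions taken from the estimate above
new_urls_per_month = 100_000_000
read_write_ratio = 100
retention_years = 5
bytes_per_url = 500

writes_per_sec = new_urls_per_month / SECONDS_PER_MONTH
reads_per_sec = writes_per_sec * read_write_ratio
total_urls = new_urls_per_month * 12 * retention_years
storage_tb = total_urls * bytes_per_url / 1e12  # decimal terabytes


def min_code_length(n_urls: int, alphabet_size: int = 62) -> int:
    """Smallest code length whose key space holds at least n_urls codes."""
    length = 1
    while alphabet_size ** length < n_urls:
        length += 1
    return length


print(f"writes/sec: {writes_per_sec:.1f}")
print(f"reads/sec:  {reads_per_sec:.0f}")
print(f"total URLs: {total_urls:,}")
print(f"storage:    {storage_tb:.1f} TB")
print(f"62^6 = {62 ** 6:,}")
print(f"62^7 = {62 ** 7:,}")
print(f"minimum code length: {min_code_length(total_urls)}")
```

Six characters already cover the 5-year volume; choosing seven trades one extra character for about 62 times more headroom.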

Social Media Feed (like Twitter)

Functional Requirements

The Fan-out Problem