Series Navigation: This is Part 12 of the 17-part API Development Series. Review Part 11: Versioning & Governance first.

API Development Mastery: your 17-step learning path (currently on Step 12)

1. Backend API Fundamentals: REST, HTTP, status codes, URI design
2. Data Layer & Persistence: Database integration, CRUD, transactions, Redis
3. OpenAPI Specification: Contract-first design, OpenAPI 3.0/3.1
4. Documentation & DX: Swagger UI, Redoc, developer portals
5. Authentication & Authorization: OAuth 2.0, JWT, RBAC, ABAC
6. Security Hardening: OWASP Top 10, input validation, CORS
7. AWS API Gateway: REST/HTTP APIs, Lambda integration, WAF
8. Azure API Management: Policies, products, developer portal
9. GCP Apigee: API proxies, monetization, analytics
10. Architecture Patterns: Gateway, BFF, microservices, DDD
11. Versioning & Governance: SemVer, deprecation, lifecycle
12. Monitoring & Analytics: Observability, tracing, SLIs/SLOs (this part)
13. Performance & Rate Limiting: Caching, throttling, load testing
14. GraphQL & gRPC: Alternative API styles, Protocol Buffers
15. Testing & Contracts: Contract testing, Pact, Postman/Newman
16. CI/CD & Automation: Spectral, GitHub Actions, Terraform
17. API Product Management: API as Product, monetization, ecosystems

API Observability

The Three Pillars of Observability

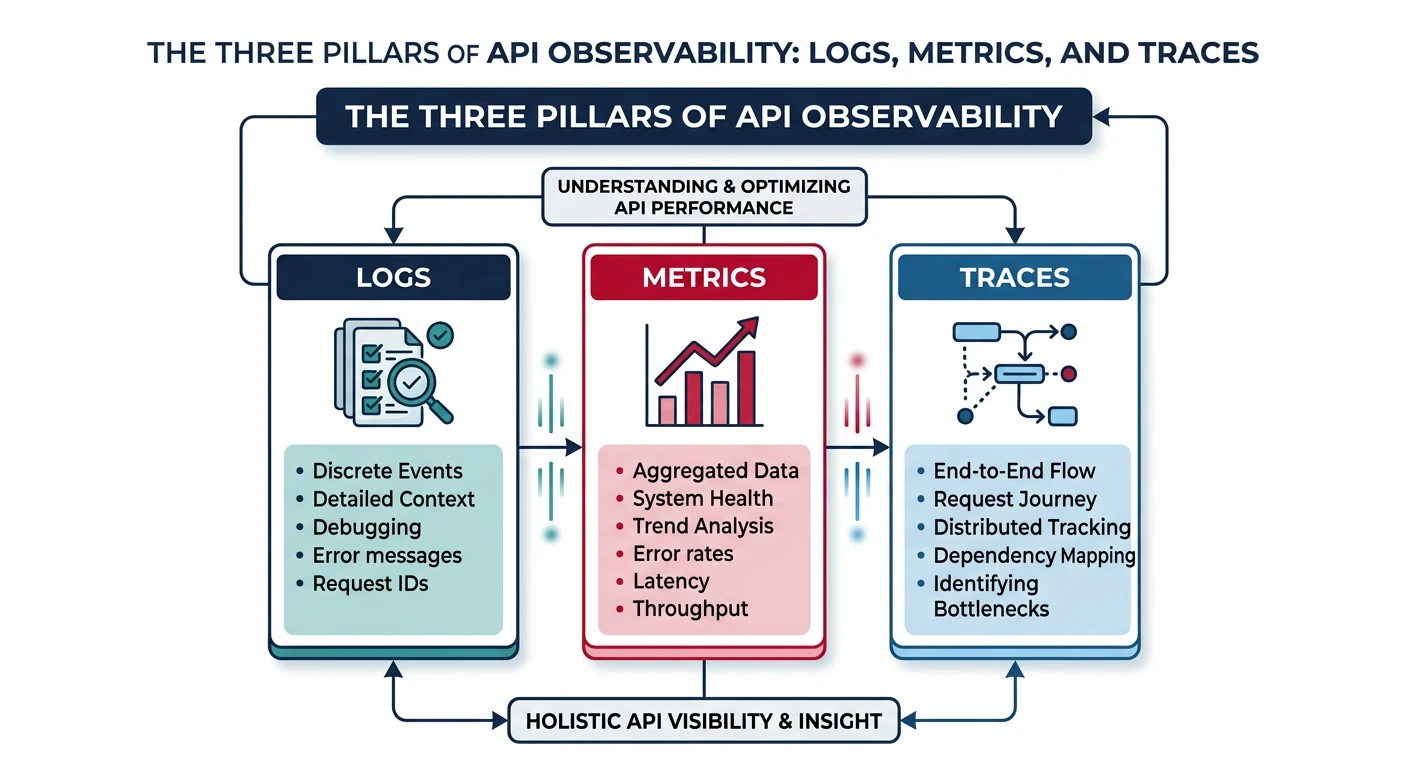

Observability is the ability to understand your API's internal state from its external outputs: the logs, metrics, and traces it emits.

API Observability Architecture

```mermaid
graph TD
    API["API Service"]
    API --> L["Logs<br/>Structured Events"]
    API --> M["Metrics<br/>Counters and Gauges"]
    API --> T["Traces<br/>Request Flow"]
    L --> AGG["Aggregation Layer"]
    M --> AGG
    T --> AGG
    AGG --> DASH["Dashboard and Alerting"]
    style API fill:#f0f4f8,stroke:#16476A
    style AGG fill:#e8f4f4,stroke:#3B9797
    style DASH fill:#e8f4f4,stroke:#3B9797
```

Observability Pillars

| Pillar | Purpose | Tools |
|---|---|---|
| Logs | Discrete events with context | ELK Stack, CloudWatch, Loki |
| Metrics | Numeric measurements over time | Prometheus, CloudWatch, Datadog |
| Traces | Request flow across services | Jaeger, Zipkin, X-Ray, Tempo |
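To make the "Logs" row concrete without any dependencies, here is a minimal sketch of a structured logger. It writes one JSON object per line and supports child loggers bound to a request ID, which is the same correlation idea the Winston middleware below relies on. `createJsonLogger` and its `write` parameter are hypothetical names chosen for illustration. In practice a library such as Winston or Pino fills this role.

```javascript
// Minimal structured (JSON-lines) logger sketch; not a replacement for Winston/Pino.
// `write` receives one serialized log line; it defaults to stdout.
function createJsonLogger(bindings = {}, write = (line) => process.stdout.write(line + '\n')) {
  const log = (level, message, fields = {}) => {
    // Every entry carries a timestamp, level, message, the bound context,
    // and any per-call fields, so a log backend can filter on any key.
    const entry = {
      timestamp: new Date().toISOString(),
      level,
      message,
      ...bindings,
      ...fields
    };
    write(JSON.stringify(entry));
    return entry;
  };

  return {
    info: (message, fields) => log('info', message, fields),
    error: (message, fields) => log('error', message, fields),
    // A child logger inherits the parent's bindings and adds its own,
    // e.g. a request ID that then appears on every line for that request.
    child: (extra) => createJsonLogger({ ...bindings, ...extra }, write)
  };
}

// Usage: collect lines in memory instead of printing them.
const lines = [];
const root = createJsonLogger({ service: 'task-api' }, (line) => lines.push(line));
const reqLogger = root.child({ requestId: 'req-123' });
reqLogger.info('Request received', { method: 'GET', path: '/tasks' });
reqLogger.info('Response sent', { statusCode: 200, durationMs: 12 });
```

Every line emitted for a request shares the same `requestId`. Filtering on that field in a log backend therefore reconstructs the request's full lifecycle.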

// Structured logging middleware

const winston = require('winston');

const { v4: uuidv4 } = require('uuid');

const logger = winston.createLogger({

format: winston.format.combine(

winston.format.timestamp(),

winston.format.json()

),

transports: [new winston.transports.Console()]

});

const loggingMiddleware = (req, res, next) => {

req.requestId = req.get('X-Request-Id') || uuidv4();

const startTime = Date.now();

// Log request

logger.info('Request received', {

requestId: req.requestId,

method: req.method,

path: req.path,

query: req.query,

userAgent: req.get('User-Agent'),

clientIp: req.ip

});

// Log response

res.on('finish', () => {

const duration = Date.now() - startTime;

logger.info('Response sent', {

requestId: req.requestId,

statusCode: res.statusCode,

duration,

contentLength: res.get('Content-Length')

});

});

next();

};

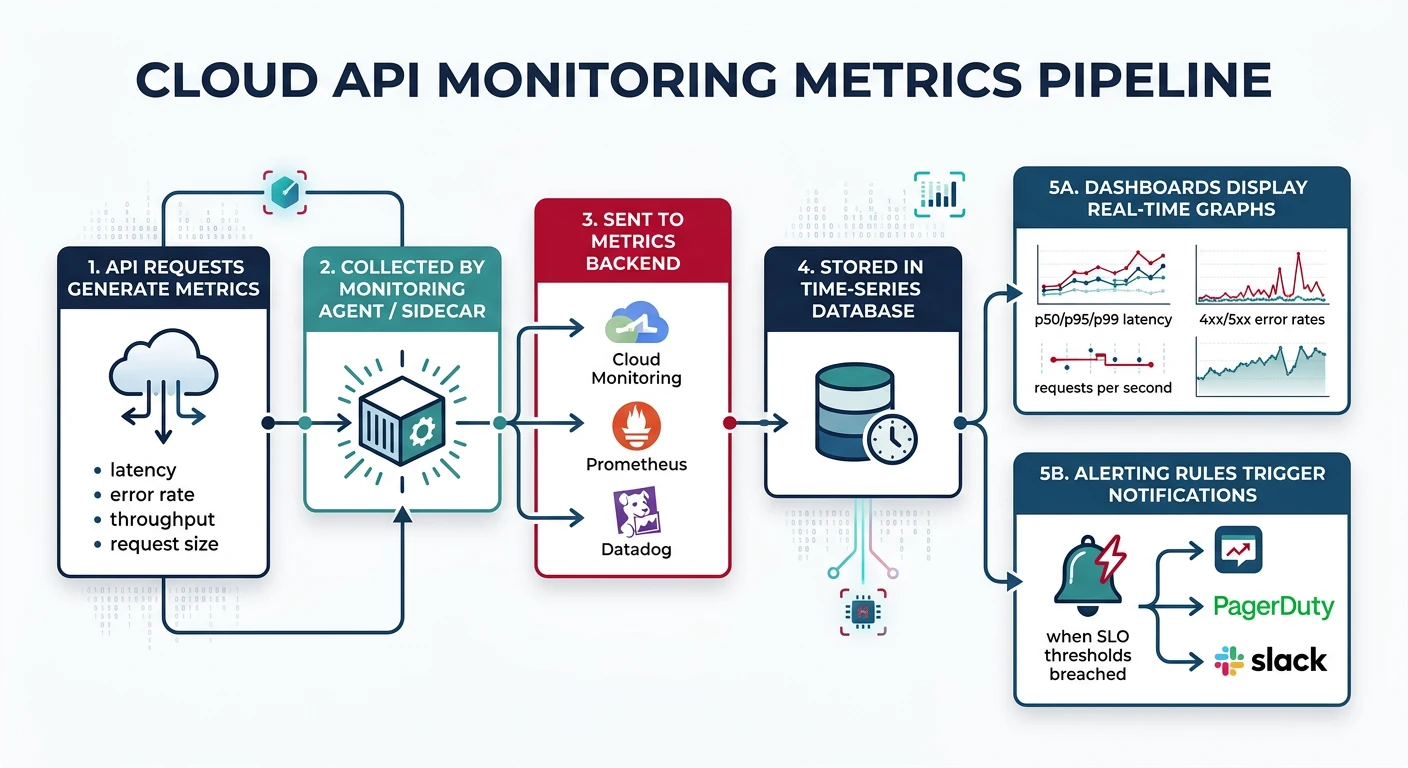

module.exports = { logger, loggingMiddleware };Cloud Monitoring Tools

AWS CloudWatch

// AWS CloudWatch custom metrics

const AWS = require('aws-sdk');

const cloudwatch = new AWS.CloudWatch();

async function publishApiMetrics(metrics) {

const params = {

Namespace: 'TaskAPI',

MetricData: [

{

MetricName: 'RequestCount',

Dimensions: [

{ Name: 'Endpoint', Value: metrics.endpoint },

{ Name: 'Method', Value: metrics.method }

],

Value: 1,

Unit: 'Count'

},

{

MetricName: 'Latency',

Dimensions: [

{ Name: 'Endpoint', Value: metrics.endpoint }

],

Value: metrics.duration,

Unit: 'Milliseconds'

},

{

MetricName: 'ErrorCount',

Dimensions: [

{ Name: 'StatusCode', Value: String(metrics.statusCode) }

],

Value: metrics.statusCode >= 400 ? 1 : 0,

Unit: 'Count'

}

]

};

await cloudwatch.putMetricData(params).promise();

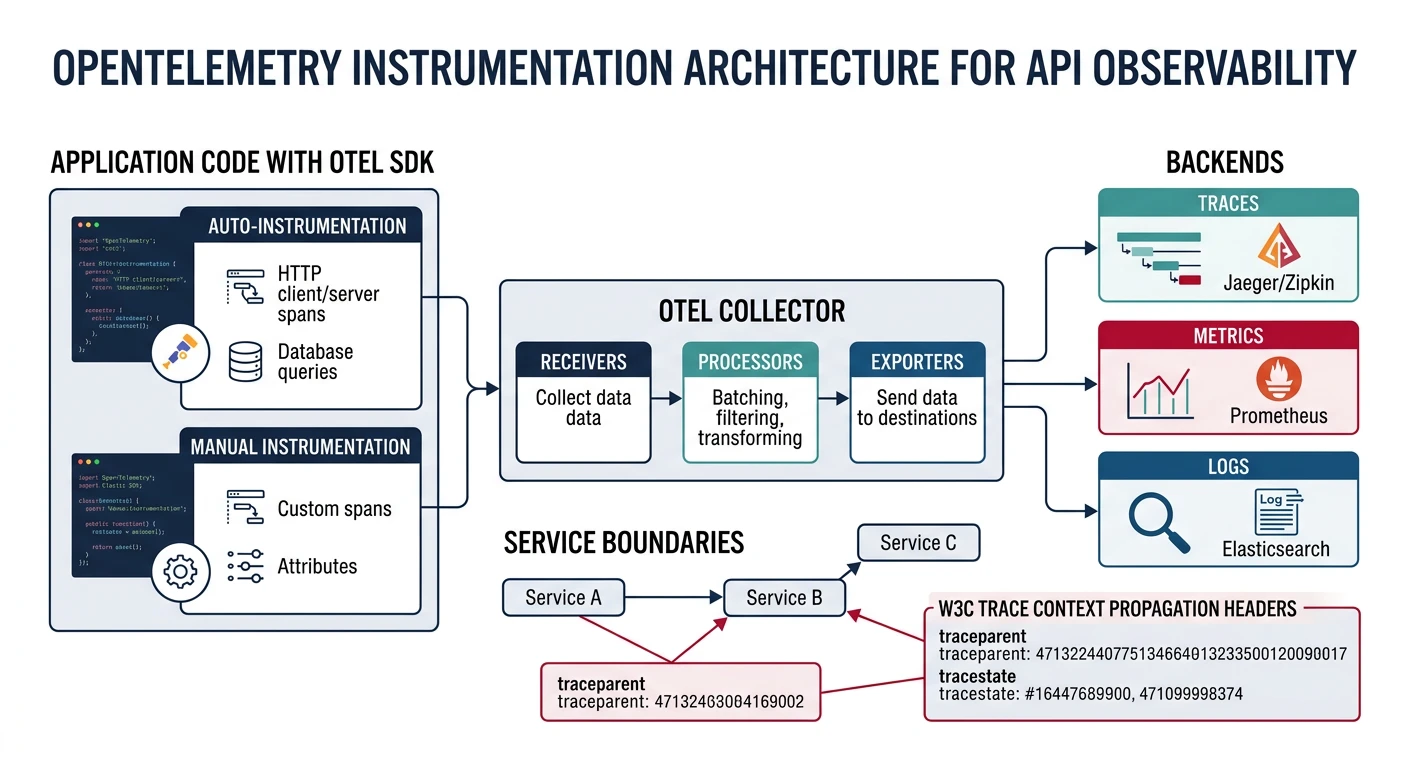

}OpenTelemetry

OpenTelemetry Setup

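OpenTelemetry (OTel) is the vendor-neutral, CNCF-hosted standard for observability instrumentation. One set of APIs and SDKs produces traces, metrics, and logs, and the OTLP protocol exports them to any compatible backend.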

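```javascript
// tracing.js - OpenTelemetry setup
// Load this before any other module, e.g. `node --require ./tracing.js app.js`,
// so the instrumentations can patch http, express, and pg as they are loaded.
const { NodeSDK } = require('@opentelemetry/sdk-node');
const { getNodeAutoInstrumentations } = require('@opentelemetry/auto-instrumentations-node');
const { OTLPTraceExporter } = require('@opentelemetry/exporter-trace-otlp-http');
const { OTLPMetricExporter } = require('@opentelemetry/exporter-metrics-otlp-http');
const { PeriodicExportingMetricReader } = require('@opentelemetry/sdk-metrics');

const sdk = new NodeSDK({
  serviceName: 'task-api',
  // OTLP over HTTP to a collector (4318 is the standard OTLP/HTTP port)
  traceExporter: new OTLPTraceExporter({
    url: 'http://otel-collector:4318/v1/traces'
  }),
  metricReader: new PeriodicExportingMetricReader({
    exporter: new OTLPMetricExporter({
      url: 'http://otel-collector:4318/v1/metrics'
    }),
    exportIntervalMillis: 10000
  }),
  instrumentations: [
    getNodeAutoInstrumentations({
      '@opentelemetry/instrumentation-http': {
        requestHook: (span, request) => {
          // The hook also runs for outgoing requests (ClientRequest), which
          // have no `headers` object, so guard before reading it.
          const requestId = request.headers && request.headers['x-request-id'];
          if (requestId) {
            span.setAttribute('http.request_id', requestId);
          }
        }
      },
      '@opentelemetry/instrumentation-express': {},
      '@opentelemetry/instrumentation-pg': {}
    })
  ]
});

sdk.start();

// Flush pending telemetry before the process exits
process.on('SIGTERM', () => {
  sdk.shutdown()
    .catch((err) => console.error('Error shutting down OpenTelemetry SDK', err))
    .finally(() => process.exit(0));
});
```

Custom Spans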

const { trace, SpanStatusCode } = require('@opentelemetry/api');

const tracer = trace.getTracer('task-api');

async function getTaskWithDetails(taskId) {

return tracer.startActiveSpan('getTaskWithDetails', async (span) => {

try {

span.setAttribute('task.id', taskId);

// Child span for database query

const task = await tracer.startActiveSpan('db.getTask', async (dbSpan) => {

dbSpan.setAttribute('db.operation', 'SELECT');

dbSpan.setAttribute('db.table', 'tasks');

const result = await db.query('SELECT * FROM tasks WHERE id = $1', [taskId]);

dbSpan.end();

return result.rows[0];

});

// Child span for external API call

const owner = await tracer.startActiveSpan('api.getUser', async (apiSpan) => {

apiSpan.setAttribute('http.url', `${USER_SERVICE}/users/${task.userId}`);

const response = await axios.get(`${USER_SERVICE}/users/${task.userId}`);

apiSpan.end();

return response.data;

});

span.setStatus({ code: SpanStatusCode.OK });

return { ...task, owner };

} catch (error) {

span.setStatus({ code: SpanStatusCode.ERROR, message: error.message });

span.recordException(error);

throw error;

} finally {

span.end();

}

});

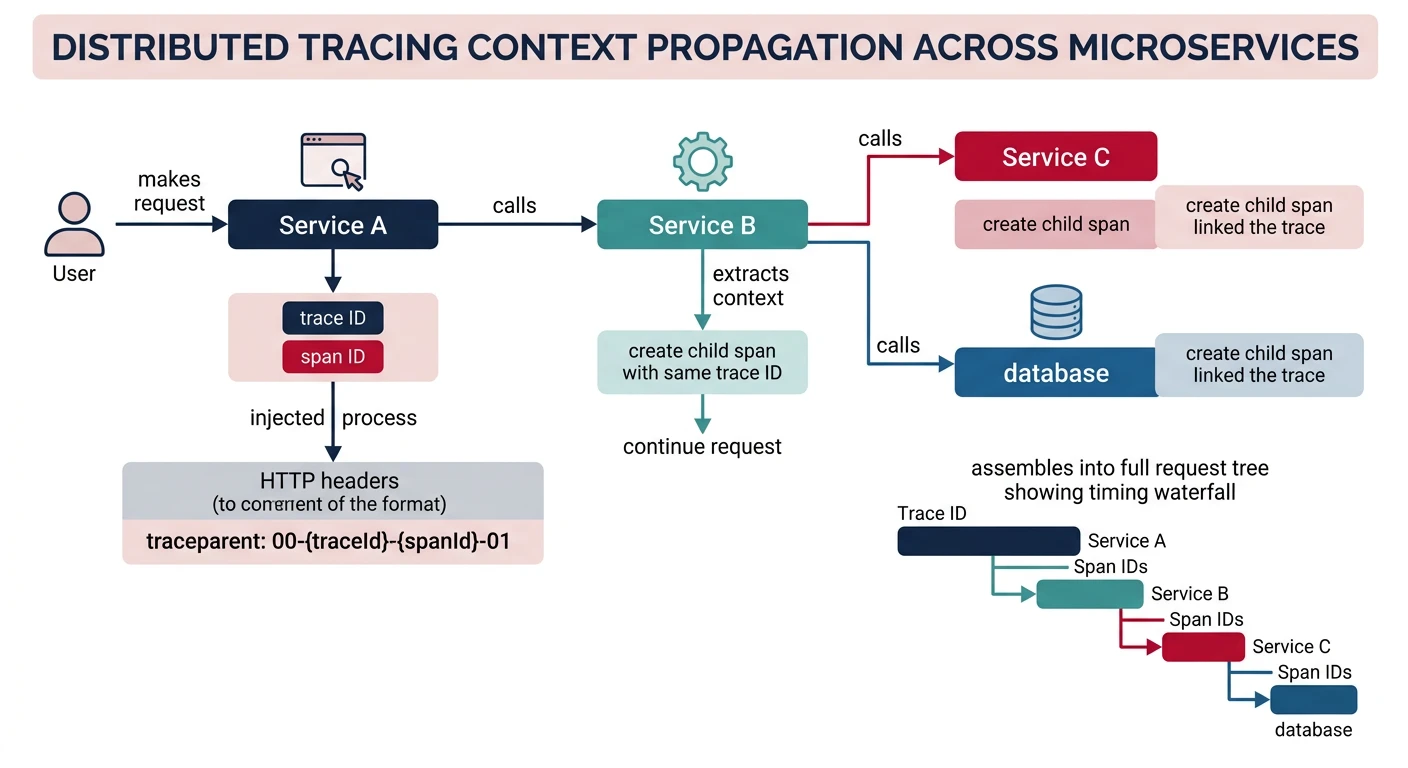

}Distributed Tracing

Trace Context Propagation

W3C Trace Context headers:

- traceparent: version, trace ID, parent span ID, and trace flags (e.g. sampled)
- tracestate: vendor-specific trace information
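The header format is easier to see in code. Below is a dependency-free sketch that parses a version-00 `traceparent` value. It then derives the header for a downstream call by keeping the trace ID and substituting a new span ID. `parseTraceparent` and `childTraceparent` are hypothetical helpers. The sketch ignores `tracestate` and future header versions. In a real service, OpenTelemetry's `W3CTraceContextPropagator` handles all of this for you.

```javascript
const crypto = require('crypto');

// version-traceId-parentId-traceFlags, all lowercase hex (version 00 layout)
const TRACEPARENT_RE = /^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

function parseTraceparent(header) {
  const match = TRACEPARENT_RE.exec(String(header || '').trim());
  if (!match) return null;
  const [, version, traceId, parentId, flags] = match;
  // Only version 00 is handled here; all-zero IDs are invalid per the spec.
  if (version !== '00') return null;
  if (/^0+$/.test(traceId) || /^0+$/.test(parentId)) return null;
  return {
    version,
    traceId,
    parentId,
    sampled: (parseInt(flags, 16) & 0x01) === 0x01 // bit 0 is the sampled flag
  };
}

// Header for an outgoing call: same trace ID, a fresh 8-byte span ID for the
// current span, and the sampled flag carried over from the parent.
function childTraceparent(parent) {
  let spanId;
  do {
    spanId = crypto.randomBytes(8).toString('hex');
  } while (/^0+$/.test(spanId)); // an all-zero span ID would be invalid
  const flags = parent.sampled ? '01' : '00';
  return `00-${parent.traceId}-${spanId}-${flags}`;
}

const incoming = parseTraceparent('00-0af7651916cd43dd8448eb211c80319c-b7ad6b7169203331-01');
const outgoing = childTraceparent(incoming);
```

Every service in the call chain repeats this step. The trace ID therefore stays constant end to end, while the parent ID changes at each hop, which is what lets a tracing backend stitch the spans into one tree.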

```javascript
// Propagate trace context in HTTP calls
const axios = require('axios');
const { propagation, context } = require('@opentelemetry/api');

async function callDownstreamService(url, data) {
  const headers = {};
  // Inject the active context as traceparent/tracestate headers.
  // With the HTTP auto-instrumentation enabled, requests made through Node's
  // http/https modules are already propagated; manual injection matters for
  // other transports or uninstrumented clients.
  propagation.inject(context.active(), headers);
  const response = await axios.post(url, data, { headers });
  return response.data;
}

// Example traceparent header:
// traceparent: 00-0af7651916cd43dd8448eb211c80319c-b7ad6b7169203331-01
//              version-traceId-parentId-traceFlags
```

SLIs & SLOs

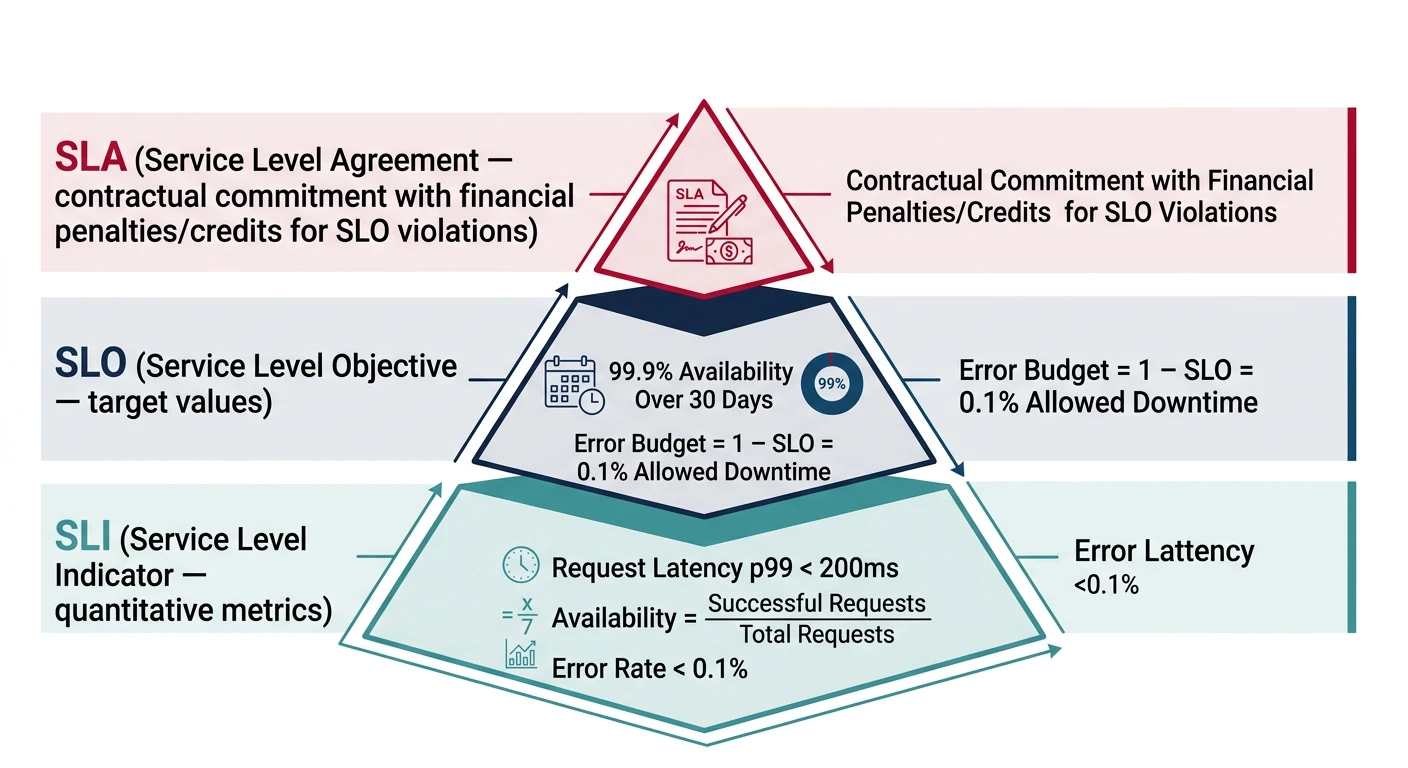

Defining Service Level Objectives

SLI/SLO/SLA Hierarchy

| Term | Definition | Example |
|---|---|---|
| SLI | Service Level Indicator: a measured metric | 99th percentile (P99) latency |
| SLO | Service Level Objective: a target for an SLI | P99 latency < 200 ms |
| SLA | Service Level Agreement: a contract with consequences | 99.9% uptime, or service credits are issued |
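The SLI and SLO rows can be made concrete with request counts. The sketch below assumes you already count "good" and total requests over the SLO window; the numbers are invented for illustration. The SLI is the good/total ratio. The error budget is the number of bad requests the objective allows. `evaluateRatioSlo` is a hypothetical helper, not part of any library.

```javascript
// Sketch: request-based SLI and error budget for a ratio SLO.
function evaluateRatioSlo({ good, total, objective }) {
  // Fraction of good events; treat an empty window as fully compliant (an assumption)
  const sli = total === 0 ? 1 : good / total;
  const allowedBad = total * (1 - objective); // error budget, in requests
  const actualBad = total - good;
  return {
    sli,
    meetsObjective: sli >= objective,
    allowedBad,
    actualBad,
    budgetRemaining: allowedBad - actualBad // negative means overspent
  };
}

// Availability SLI: non-5xx responses / all responses, against a 99.9% SLO.
const availability = evaluateRatioSlo({ good: 999_400, total: 1_000_000, objective: 0.999 });

// Latency SLI: requests faster than 200 ms / all requests, against a 99% SLO.
const latency = evaluateRatioSlo({ good: 985_000, total: 1_000_000, objective: 0.99 });
```

With these sample counts, the availability SLO still has roughly 400 requests of budget left. The latency SLO has overspent its budget by roughly 5,000 requests.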

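```yaml
# slo.yaml - SLO definitions (illustrative schema, not a specific tool's format)
service: task-api
slos:
  - name: availability
    description: Requests should not fail with server errors
    sli:
      type: ratio
      good: response.status_code < 500
      total: all requests
    objective: 99.9%
    window: 30d

  - name: latency
    description: Requests should complete within 200 ms
    sli:
      type: histogram
      metric: http_request_duration_seconds
      threshold: 0.2  # seconds (200 ms)
    objective: 99%    # 99% of requests faster than the threshold
    window: 30d

  - name: error_rate
    description: Requests should not return 4xx or 5xx errors
    sli:
      type: ratio
      # Counts 4xx as bad; many teams exclude client errors (e.g. 401, 404)
      # because the API is not at fault for them
      good: response.status_code < 400
      total: all requests
    objective: 99%
    window: 7d
```

Error Budget Calculation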

```javascript
// Error budget calculator (time-based: budget expressed as minutes of downtime)
// slo and actualAvailability are fractions, e.g. 0.999 for 99.9%
function calculateErrorBudget(slo, windowDays, actualAvailability) {
  const totalMinutes = windowDays * 24 * 60;
  const allowedDowntimeMinutes = totalMinutes * (1 - slo);
  const actualDowntimeMinutes = totalMinutes * (1 - actualAvailability);
  // Negative when the budget is overspent
  const remainingBudget = allowedDowntimeMinutes - actualDowntimeMinutes;

  return {
    slo: `${(slo * 100).toFixed(2)}%`,
    allowedDowntime: `${allowedDowntimeMinutes.toFixed(1)} minutes`,
    actualDowntime: `${actualDowntimeMinutes.toFixed(1)} minutes`,
    remainingBudget: `${remainingBudget.toFixed(1)} minutes`,
    budgetConsumed: `${((actualDowntimeMinutes / allowedDowntimeMinutes) * 100).toFixed(1)}%`
  };
}

// Example: 99.9% SLO over 30 days with 99.95% actual availability
console.log(calculateErrorBudget(0.999, 30, 0.9995));
// {
//   slo: '99.90%',
//   allowedDowntime: '43.2 minutes',
//   actualDowntime: '21.6 minutes',
//   remainingBudget: '21.6 minutes',
//   budgetConsumed: '50.0%'
// }
```

API Analytics Dashboards

Key Metrics to Track

Essential API metrics (these are the "four golden signals" from Google's SRE book):

- Traffic: requests per second, by endpoint
- Latency: P50, P95, and P99 response times
- Errors: 4xx and 5xx rates, by endpoint
- Saturation: CPU, memory, and connection pool usage
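P50, P95, and P99 for the Latency signal are percentiles of the response-time distribution. The sketch below uses the nearest-rank definition over raw samples to show what those numbers mean. `percentile` and `latencySummary` are hypothetical helpers, and the sample data is synthetic. Prometheus instead estimates quantiles from histogram buckets (as in the `histogram_quantile` recording rule below), so its values are approximations whose accuracy depends on the bucket boundaries.

```javascript
// Nearest-rank percentile over raw latency samples (in ms).
// Sketch only: production systems usually estimate percentiles from histograms
// instead of keeping every sample.
function percentile(samples, p) {
  if (samples.length === 0) return NaN;
  const sorted = [...samples].sort((a, b) => a - b);
  // Nearest rank: the smallest value with at least p% of samples <= it.
  // Multiplying before dividing keeps integer inputs exact.
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(0, rank - 1)];
}

function latencySummary(samples) {
  return {
    p50: percentile(samples, 50),
    p95: percentile(samples, 95),
    p99: percentile(samples, 99)
  };
}

// 100 synthetic samples: 1..100 ms.
const samples = Array.from({ length: 100 }, (_, i) => i + 1);
const summary = latencySummary(samples);

// A slow tail: the median looks healthy while P99 exposes the outliers.
const withTail = [...Array(98).fill(20), 900, 1000];
const tailSummary = latencySummary(withTail);
```

In the second data set, P50 and P95 are both 20 ms while P99 is 900 ms. This is why latency SLOs are usually stated on a high percentile rather than the average or median.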

```yaml
# Prometheus recording rules for API metrics
# Assumes the app exports http_requests_total (labels: endpoint, method, status_code)
# and the http_request_duration_seconds histogram.
groups:
  - name: api_metrics
    rules:
      # Request rate (requests/second)
      - record: api:request_rate:5m
        expr: sum(rate(http_requests_total[5m])) by (endpoint, method)

      # Error rate (fraction of 5xx responses)
      - record: api:error_rate:5m
        expr: |
          sum(rate(http_requests_total{status_code=~"5.."}[5m])) by (endpoint)
          /
          sum(rate(http_requests_total[5m])) by (endpoint)

      # P99 latency (seconds), estimated from histogram buckets
      - record: api:latency_p99:5m
        expr: histogram_quantile(0.99, sum(rate(http_request_duration_seconds_bucket[5m])) by (le, endpoint))

      # Availability (fraction of non-5xx requests)
      # `or vector(0)` yields 1 instead of no data when no 5xx series exist yet
      - record: api:availability:5m
        expr: |
          1 - (
            (sum(rate(http_requests_total{status_code=~"5.."}[5m])) or vector(0))
            /
            sum(rate(http_requests_total[5m]))
          )
```

Practice Exercises

Exercise 1: Structured Logging

- Add a Winston logger with JSON output

- Include request ID correlation

- Log request/response with duration

Exercise 2: OpenTelemetry Integration

- Set up OpenTelemetry SDK

- Add auto-instrumentation for Express and Postgres

- Export traces to Jaeger

Exercise 3: SLO Dashboard

- Define SLIs for availability and latency

- Create a Grafana dashboard with SLO tracking

- Set up error budget alerts
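A common starting point for the error budget alerts in Exercise 3 is to alert on burn rate, as described in the Google SRE Workbook's chapter on alerting on SLOs. Burn rate is the current error ratio divided by the error ratio the SLO allows, so a burn rate of 1 spends exactly the whole budget over the SLO window. The sketch below computes it and applies a two-window check. The 14.4 threshold is the Workbook's example for paging on a 30-day SLO: it corresponds to spending 2% of the budget in one hour. The function names and default values are hypothetical.

```javascript
// Sketch: error budget burn rate for a ratio SLO.
function burnRate(errorRatio, slo) {
  return errorRatio / (1 - slo);
}

// Fraction of the total budget consumed if `rate` persists for `hours`,
// given an SLO window of `windowHours` (30 days = 720 h).
function budgetConsumed(rate, hours, windowHours = 720) {
  return (rate * hours) / windowHours;
}

// Multiwindow check: page only when both a long window (e.g. 1 h) and a short
// window (e.g. 5 min) burn faster than the threshold. The long window filters
// out brief spikes; the short one lets the alert clear soon after recovery.
function shouldPage({ longWindowErrorRatio, shortWindowErrorRatio, slo, threshold = 14.4 }) {
  return burnRate(longWindowErrorRatio, slo) > threshold &&
         burnRate(shortWindowErrorRatio, slo) > threshold;
}

// 2% errors against a 99.9% SLO burns budget roughly 20x faster than sustainable.
const rate = burnRate(0.02, 0.999);
```

In Prometheus, the same idea is typically expressed as an alerting rule that compares error-ratio recording rules over two windows against `threshold * (1 - SLO)`.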