Introduction

I/O systems form the bridge between software and hardware. Understanding how the OS manages devices is crucial for system programming and performance optimization.

Understand how operating systems communicate with hardware—from interrupts and DMA to device drivers and disk scheduling.

I/O systems form the bridge between software and hardware. Understanding how the OS manages devices is crucial for system programming and performance optimization.

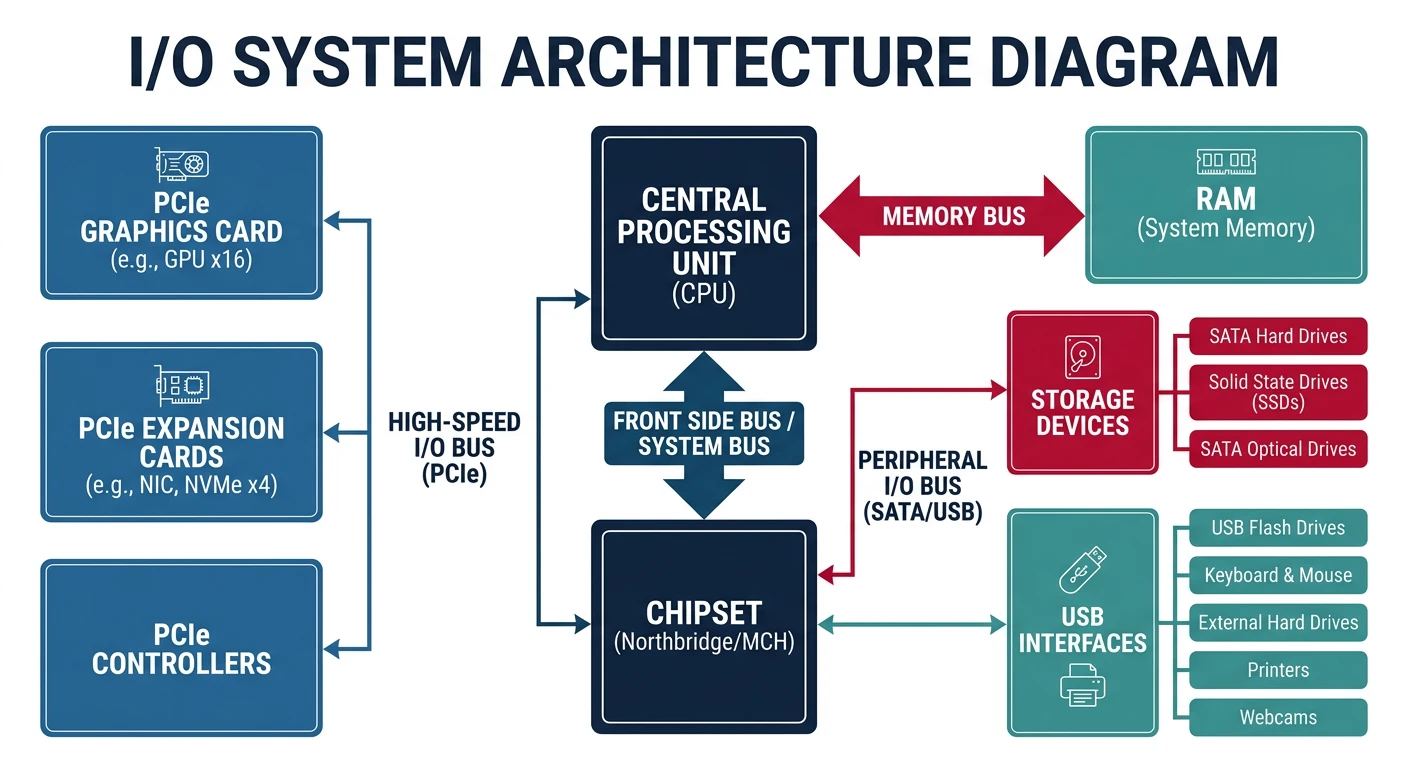

I/O devices communicate with the CPU through controllers, buses, and ports.

I/O Hardware Stack:

══════════════════════════════════════════════════════════════

┌─────────┐

│ CPU │

└────┬────┘

│

▼

┌────────────────────────────────────────────────┐

│ Memory Bus (FSB/QPI) │

└──────────────────┬─────────────────────────────┘

│

┌─────────────┴─────────────┐

▼ ▼

┌─────────┐ ┌───────────────┐

│ RAM │ │ Chipset/PCH │

└─────────┘ └───────┬───────┘

│

┌──────────────────┼──────────────────┐

│ │ │

▼ ▼ ▼

┌─────────┐ ┌─────────┐ ┌─────────┐

│PCIe x16 │ │ SATA │ │ USB │

│ (GPU) │ │ │ │ │

└─────────┘ └─────────┘ └─────────┘

Device Communication:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

1. PORT-MAPPED I/O (x86)

• Separate I/O address space

• Special instructions: IN, OUT

• Example: in al, 0x60 ; read keyboard

2. MEMORY-MAPPED I/O (modern)

• Device registers mapped to memory addresses

• Use regular MOV instructions

• Example: GPU framebuffer at 0xA0000Interrupts allow devices to signal the CPU asynchronously, avoiding wasteful polling.

Polling vs Interrupts:

══════════════════════════════════════════════════════════════

POLLING (Busy Waiting):

while (device_status != READY) {

// CPU spinning, wasting cycles!

}

read_data();

✗ Wastes CPU time

✗ Can't do other work while waiting

✓ Low latency for high-speed devices

INTERRUPTS:

1. CPU executes other work

2. Device raises interrupt signal (IRQ)

3. CPU saves state, jumps to handler

4. Handler processes device, returns

5. CPU resumes previous work

✓ CPU free to do other work

✓ Efficient for slow devices

✗ Context switch overhead

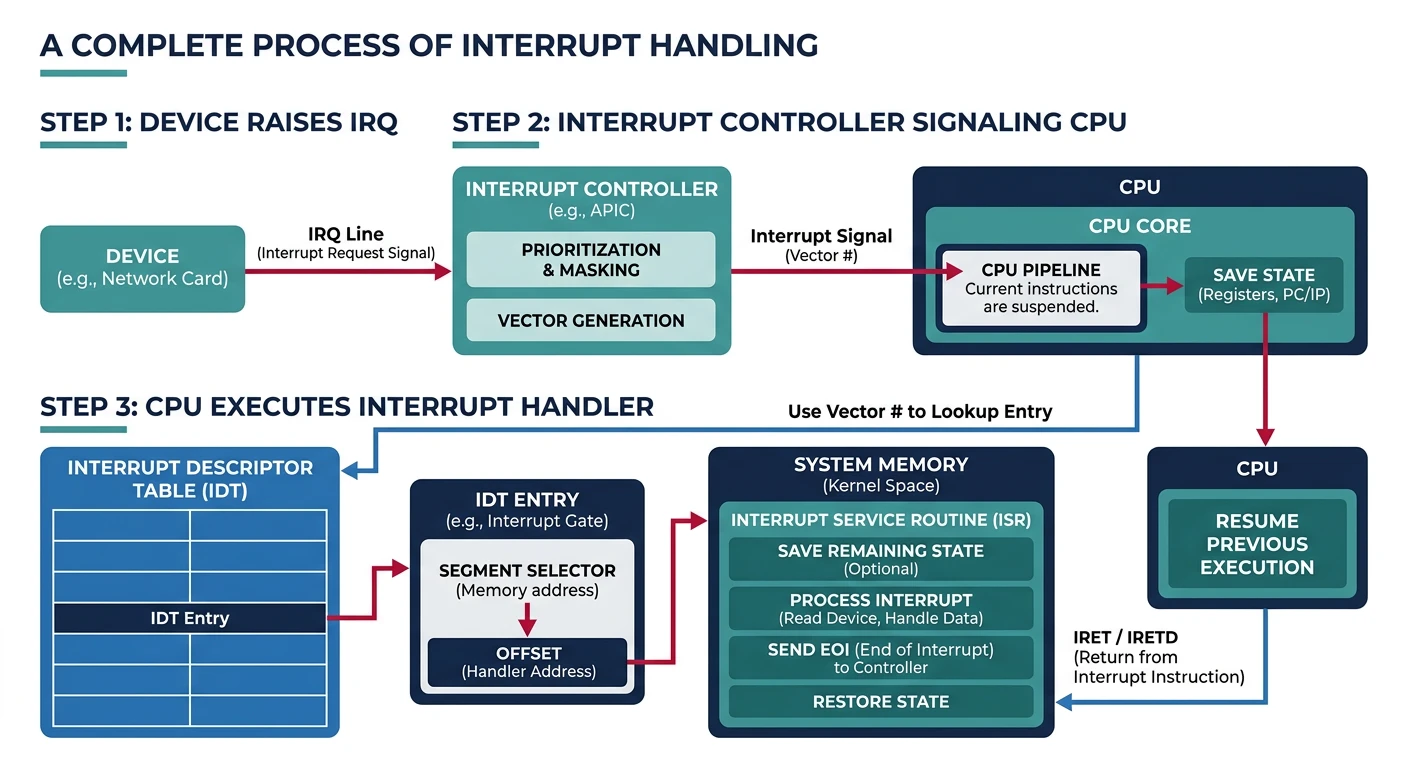

Interrupt Handling Steps:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

1. Device asserts interrupt line

2. Interrupt controller (APIC) signals CPU

3. CPU finishes current instruction

4. CPU pushes flags, CS, IP to stack

5. CPU looks up handler in IDT (Interrupt Descriptor Table)

6. Jump to interrupt handler

7. Handler:

a. Save registers

b. Service the device

c. Acknowledge interrupt to controller

d. Restore registers

8. IRET - return from interrupt# View interrupts on Linux

$ cat /proc/interrupts

CPU0 CPU1

0: 45 0 IO-APIC 2-edge timer

1: 1234 0 IO-APIC 1-edge i8042 (keyboard)

8: 1 0 IO-APIC 8-edge rtc0

12: 56789 0 IO-APIC 12-edge i8042 (mouse)

16: 123456 123456 IO-APIC 16-fasteoi ehci_hcd (USB)

# Monitor interrupt rate

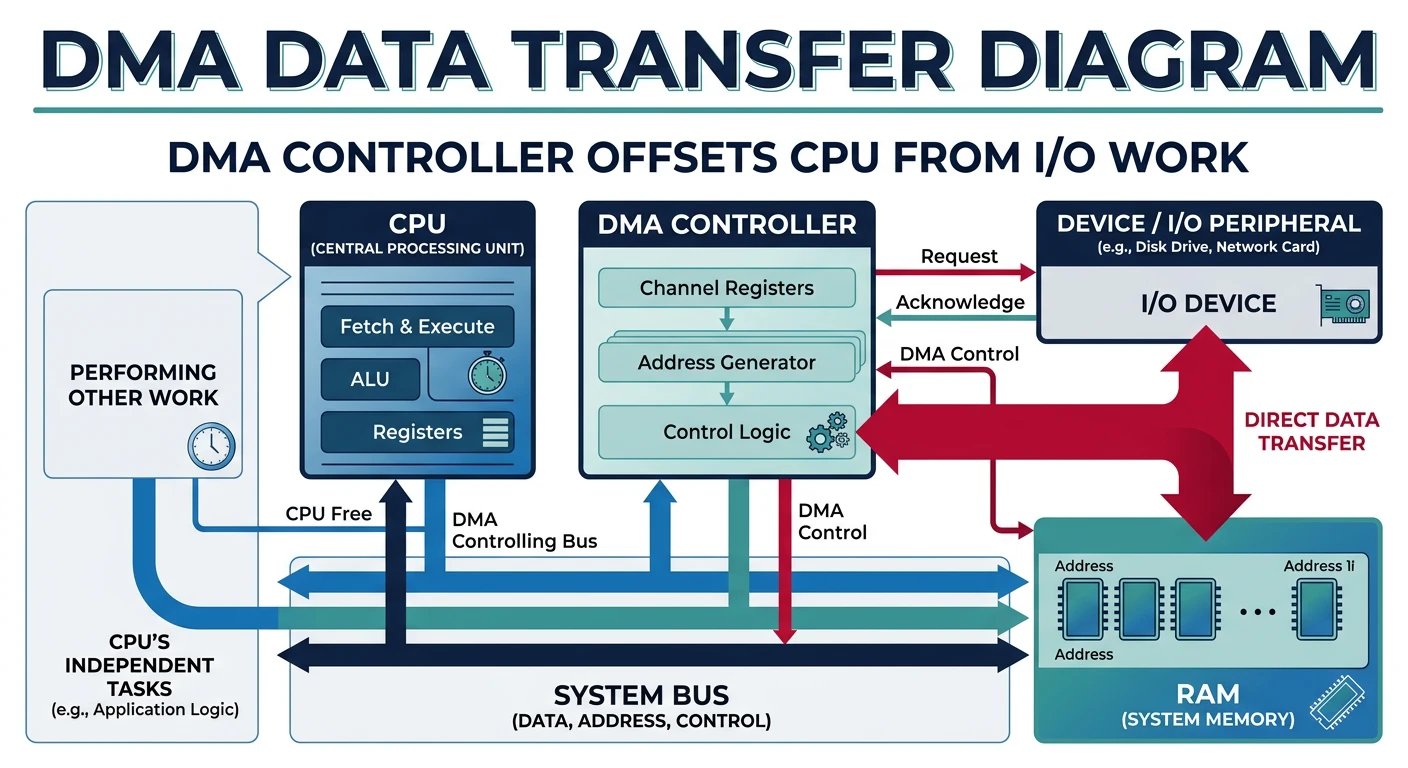

$ watch -n1 'cat /proc/interrupts'DMA allows devices to transfer data directly to/from memory without CPU involvement.

Without DMA (Programmed I/O):

══════════════════════════════════════════════════════════════

for each byte:

1. CPU reads byte from device

2. CPU writes byte to memory

4KB transfer = 4096 CPU read/write cycles!

With DMA:

══════════════════════════════════════════════════════════════

1. CPU programs DMA controller:

- Source address

- Destination address (memory buffer)

- Transfer count

- Direction (read/write)

2. DMA controller takes over bus:

- Device ↔ DMA ↔ Memory

- CPU is FREE during transfer!

3. DMA raises interrupt when complete

4. CPU processes data

┌─────────┐ ┌─────────┐

│ CPU │ (CPU does other work) │ RAM │

└─────────┘ └────┬────┘

│

┌──────────────┐ │

│ DMA │ ────────────┘

│ Controller │ (direct transfer)

└──────┬───────┘

│

┌──────┴───────┐

│ Device │

└──────────────┘dma_alloc_coherent().

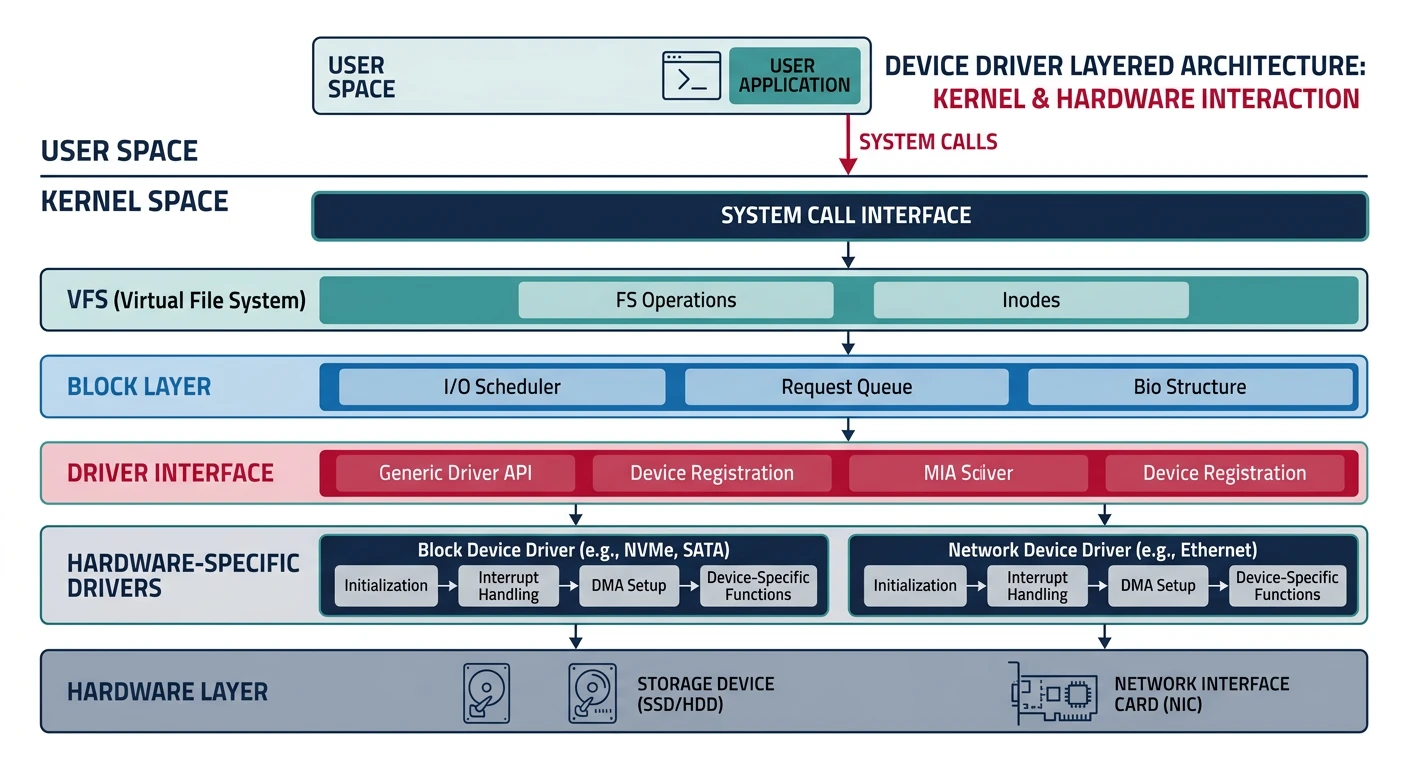

Device drivers are kernel modules that know how to communicate with specific hardware.

Driver Architecture:

══════════════════════════════════════════════════════════════

User Application

│

System Calls

│

┌─────────┴─────────┐

│ Kernel Core │

│ (VFS, block │

│ layer, etc.) │

└─────────┬─────────┘

│

Driver Interface

│

┌─────────────┼─────────────┐

│ │ │

┌───┴───┐ ┌────┴────┐ ┌───┴────┐

│ AHCI │ │ NVMe │ │ USB │

│driver│ │ driver │ │ driver │

└───┬───┘ └────┬────┘ └───┬────┘

│ │ │

▼ ▼ ▼

SATA NVMe USB

disk SSD HID# List loaded kernel modules (drivers)

$ lsmod

Module Size Used by

nvme 45056 3

nvme_core 114688 5 nvme

ahci 40960 5

libahci 36864 1 ahci

usb_storage 77824 1

# Load/unload driver

$ sudo modprobe nvme # Load

$ sudo modprobe -r nvme # Unload

# View driver messages

$ dmesg | tail -20Unix categorizes devices into block and character devices based on data access patterns.

Device Types:

══════════════════════════════════════════════════════════════

BLOCK DEVICES:

• Data accessed in fixed-size blocks (512B, 4KB)

• Random access supported (seek)

• Usually buffered through block layer

• Examples: hard drives, SSDs, USB drives

$ ls -l /dev/sda

brw-rw---- 1 root disk 8, 0 Jan 15 10:00 /dev/sda

↑

b = block device

CHARACTER DEVICES:

• Data accessed as stream of bytes

• Usually sequential access only

• No buffering (direct to/from device)

• Examples: terminals, keyboards, serial ports

$ ls -l /dev/tty

crw-rw-rw- 1 root tty 5, 0 Jan 15 10:00 /dev/tty

↑

c = character device

Special Devices:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

/dev/null - Discards all writes, EOF on read

/dev/zero - Infinite stream of zeros

/dev/random - Random bytes (may block)

/dev/urandom- Random bytes (never blocks)Understanding disk geometry is essential for disk scheduling algorithms.

Hard Disk Drive (HDD) Structure:

══════════════════════════════════════════════════════════════

Spindle

│

┌───────────┴───────────┐

│ ┌─────────────────┐ │

│ │ ┌───────────┐ │ │ ← Platters

│ │ │ Track │ │ │ (spinning disks)

│ │ │ [████] │ │ │ ← Sector

│ │ └───────────┘ │ │

│ └─────────────────┘ │

└───────────────────────┘

\_______________/

Actuator arm

with read/write

heads

Terminology:

• Platter: Spinning magnetic disk

• Track: Concentric circle on platter

• Sector: Smallest addressable unit (512B or 4KB)

• Cylinder: All tracks at same radius across platters

Access Time Components:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

1. Seek time: Move arm to correct track (~5-10 ms)

2. Rotational latency: Wait for sector (~4 ms @ 7200 RPM)

3. Transfer time: Read data (~0.01 ms per sector)

Total: ~10 ms per random access!

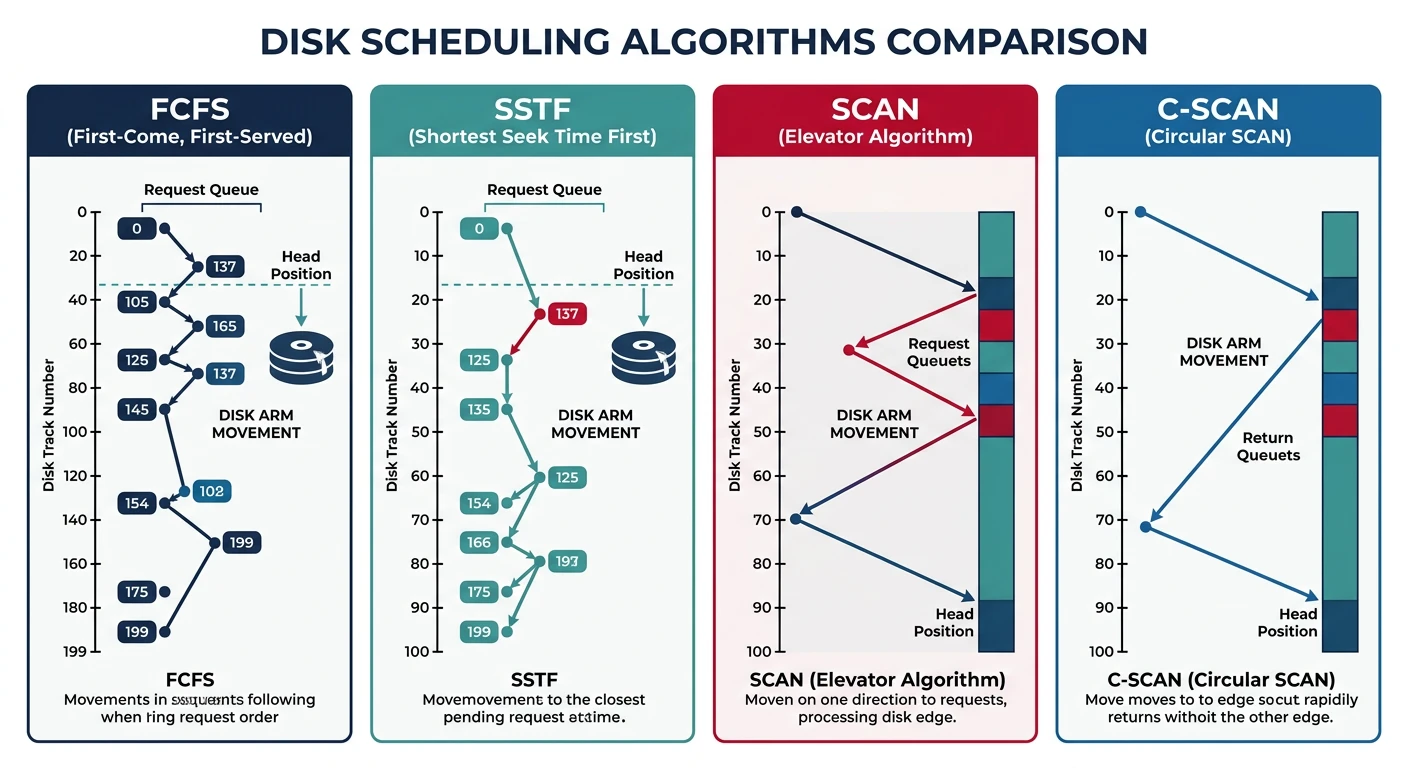

SSD: ~0.1 ms (no mechanical parts)Disk scheduling reorders I/O requests to minimize seek time. Critical for HDD performance.

Example: Head at track 50, Queue: [98, 37, 14, 122, 65, 67]

Disk tracks 0-199

1. FCFS (First-Come First-Served):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Service in arrival order.

50→98→37→14→122→65→67

48 + 61 + 23 + 108 + 57 + 2 = 299 tracks

✓ Fair, no starvation

✗ Poor performance (wild arm movement)

2. SSTF (Shortest Seek Time First):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Always go to nearest request.

50→65→67→37→14→98→122

15 + 2 + 30 + 23 + 84 + 24 = 178 tracks

✓ Better than FCFS

✗ Starvation possible (distant requests)

3. SCAN (Elevator):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Move in one direction, service all, reverse.

50→65→67→98→122→(end)→37→14

15 + 2 + 31 + 24 + 77 + 85 + 23 = 157 tracks

✓ No starvation, fair

✓ Good throughput

4. C-SCAN (Circular SCAN):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Only service in one direction, jump back.

50→65→67→98→122→(end)─jump→0→14→37

✓ More uniform wait time than SCAN

✓ No requests wait too long# View current I/O scheduler

$ cat /sys/block/sda/queue/scheduler

noop deadline [cfq]

# Change scheduler

$ echo deadline | sudo tee /sys/block/sda/queue/scheduler

# For NVMe (usually uses none/noop)

$ cat /sys/block/nvme0n1/queue/scheduler

[none] mq-deadlineI/O systems are the critical bridge between software and hardware. We've covered: