Introduction

Memory management is a core OS responsibility. The operating system must efficiently allocate memory to processes while minimizing waste and ensuring isolation.

Understand how operating systems manage physical memory—from allocation strategies to fragmentation handling.

Memory management is a core OS responsibility. The operating system must efficiently allocate memory to processes while minimizing waste and ensuring isolation.

An address space is the range of addresses a program can use. Modern systems use separate address spaces for each process.

Address Types:

══════════════════════════════════════════════════════════════

PHYSICAL ADDRESS:

• Actual location in hardware RAM chips

• What memory controller sees on the bus

• Range: 0 to (RAM size - 1)

• Example: 0x0000_7FFF_ABCD_1234 (physical location)

LOGICAL (VIRTUAL) ADDRESS:

• Address used by program/process

• What CPU generates during execution

• Each process has its own address space

• Example: 0x0040_0000 (process's view)

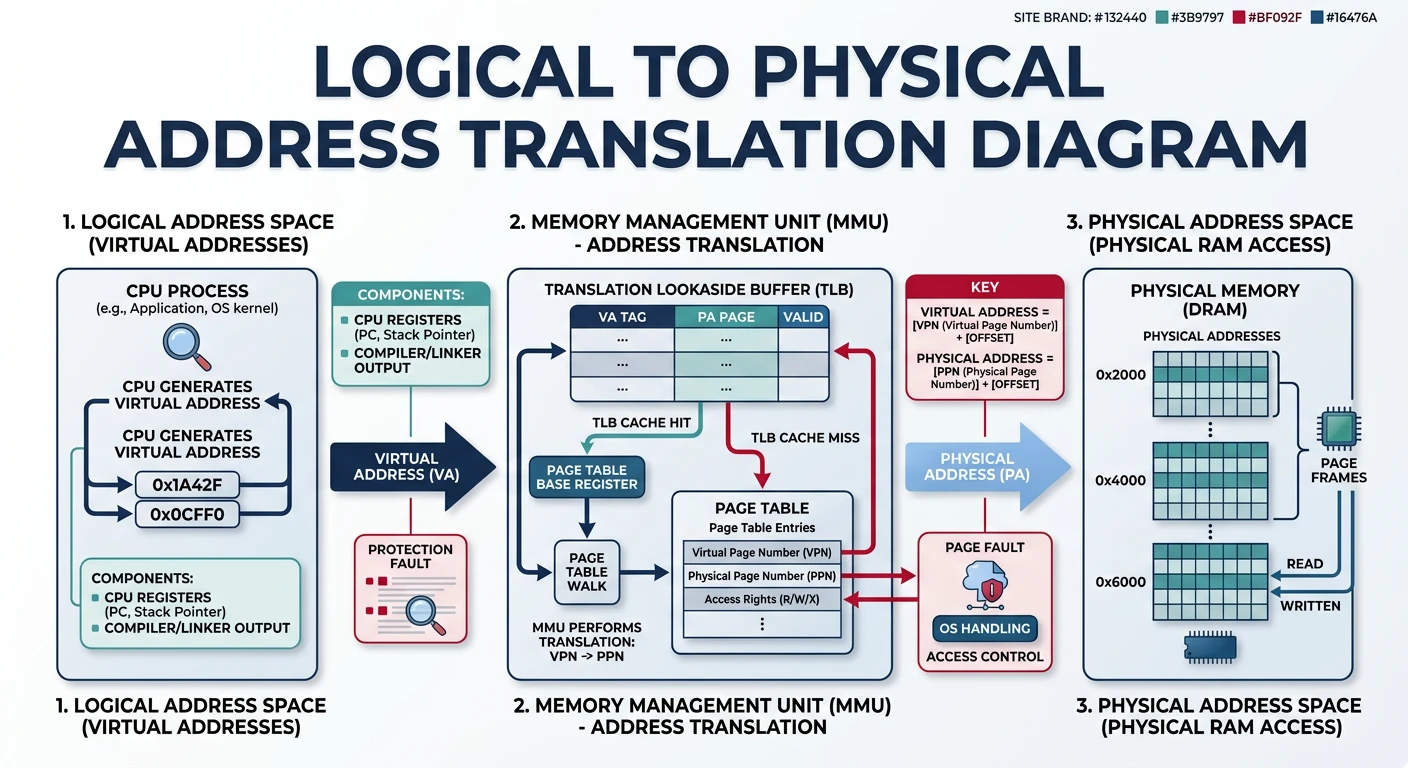

Address Translation:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

┌─────────┐ Logical ┌─────────────┐ Physical ┌────────┐

│ CPU │ ───────────→ │ MMU │ ──────────→ │ RAM │

│ │ Address │ (Memory │ Address │ │

└─────────┘ │ Mgmt Unit) │ └────────┘

└─────────────┘

Process A: 0x1000 → MMU → Physical 0x50000

Process B: 0x1000 → MMU → Physical 0x80000

Same virtual address → Different physical locations!

This enables ISOLATION between processes.# View process memory map on Linux

$ cat /proc/self/maps

00400000-00401000 r-xp 00000000 08:01 1234 /bin/cat # Code

00600000-00601000 r--p 00000000 08:01 1234 /bin/cat # Read-only data

00601000-00602000 rw-p 00001000 08:01 1234 /bin/cat # Data

7f8a12340000-7f8a12500000 r-xp ... /lib/libc.so

7ffd45670000-7ffd45691000 rw-p ... [stack]

7ffd456fe000-7ffd45700000 r-xp ... [vdso]

# Permissions: r=read, w=write, x=execute, p=private, s=sharedEarly systems allocated each process a single contiguous block of memory. Simple but problematic.

Contiguous Memory Allocation:

══════════════════════════════════════════════════════════════

Memory State After Loading Processes:

┌─────────────────────────────────────┐ 0x00000000

│ OS Kernel │

├─────────────────────────────────────┤ 0x00100000

│ Process A (2 MB) │

├─────────────────────────────────────┤ 0x00300000

│ Process B (4 MB) │

├─────────────────────────────────────┤ 0x00700000

│ Process C (1 MB) │

├─────────────────────────────────────┤ 0x00800000

│ FREE │

│ (8 MB hole) │

└─────────────────────────────────────┘ 0x01000000

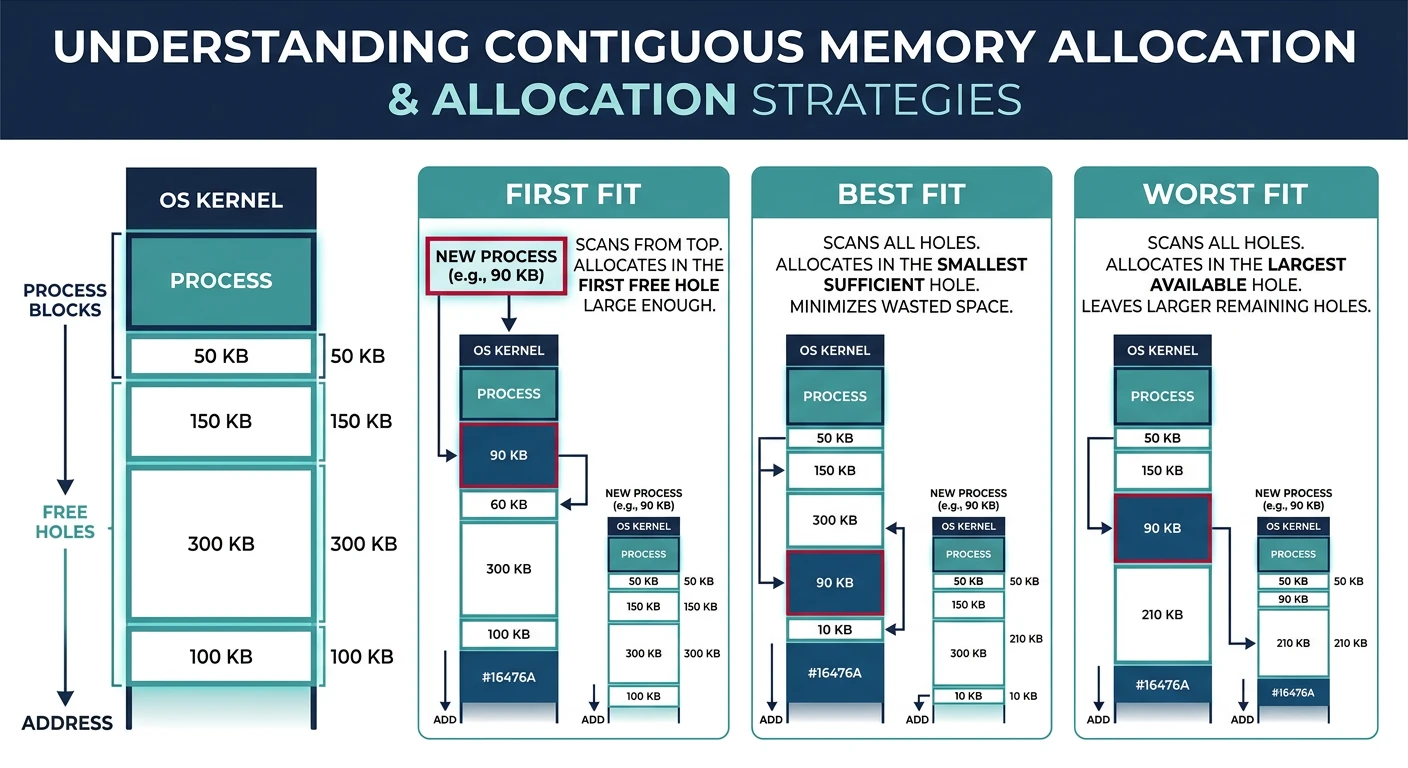

Allocation Strategies (for choosing which hole):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

1. FIRST FIT

• Allocate first hole big enough

• Fast - stop searching once found

• Tends to leave small holes at start

2. BEST FIT

• Allocate smallest hole that fits

• Leaves smallest leftover fragments

• Must search entire list (slow)

• Creates many tiny unusable holes

3. WORST FIT

• Allocate largest hole

• Leaves largest leftover (may be useful)

• Must search entire list (slow)

• Poor in practice

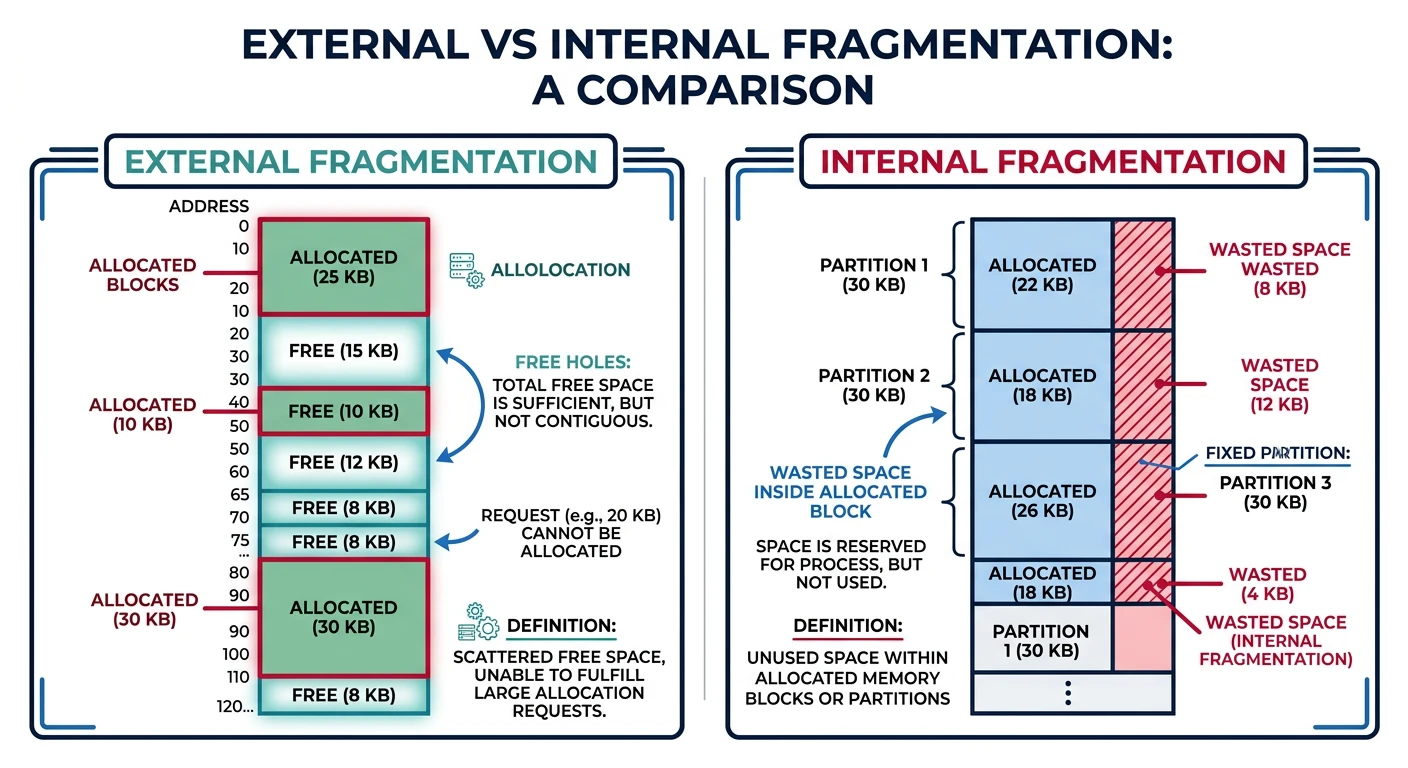

Empirical results: First Fit ≈ Best Fit > Worst FitFragmentation is wasted memory that can't be used. Two types plague memory managers.

EXTERNAL FRAGMENTATION:

══════════════════════════════════════════════════════════════

Free memory exists but is scattered in small non-contiguous holes.

After Process B exits:

┌─────────────────┐

│ OS Kernel │

├─────────────────┤

│ Process A │ 2 MB

├─────────────────┤

│ FREE HOLE │ 4 MB ←─┐

├─────────────────┤ │ Total free: 12 MB

│ Process C │ 1 MB │ But can't fit 8 MB process!

├─────────────────┤ │

│ FREE HOLE │ 8 MB ←──┘

└─────────────────┘

Solution: COMPACTION

• Move processes to create one large free block

• Expensive - must copy all process memory

• Only possible if relocation is dynamic

INTERNAL FRAGMENTATION:

══════════════════════════════════════════════════════════════

Allocated memory is larger than requested (wasted inside block).

Process requests 1000 bytes, system allocates 4096 (one page):

┌─────────────────────────────────────┐

│ Used: 1000 bytes │ Wasted: 3096 │

│ ████████████████ │ │

└─────────────────────────────────────┘

↑

Internal fragmentation

Causes:

• Fixed-size allocation units (pages, blocks)

• Alignment requirements (8-byte, cache-line)

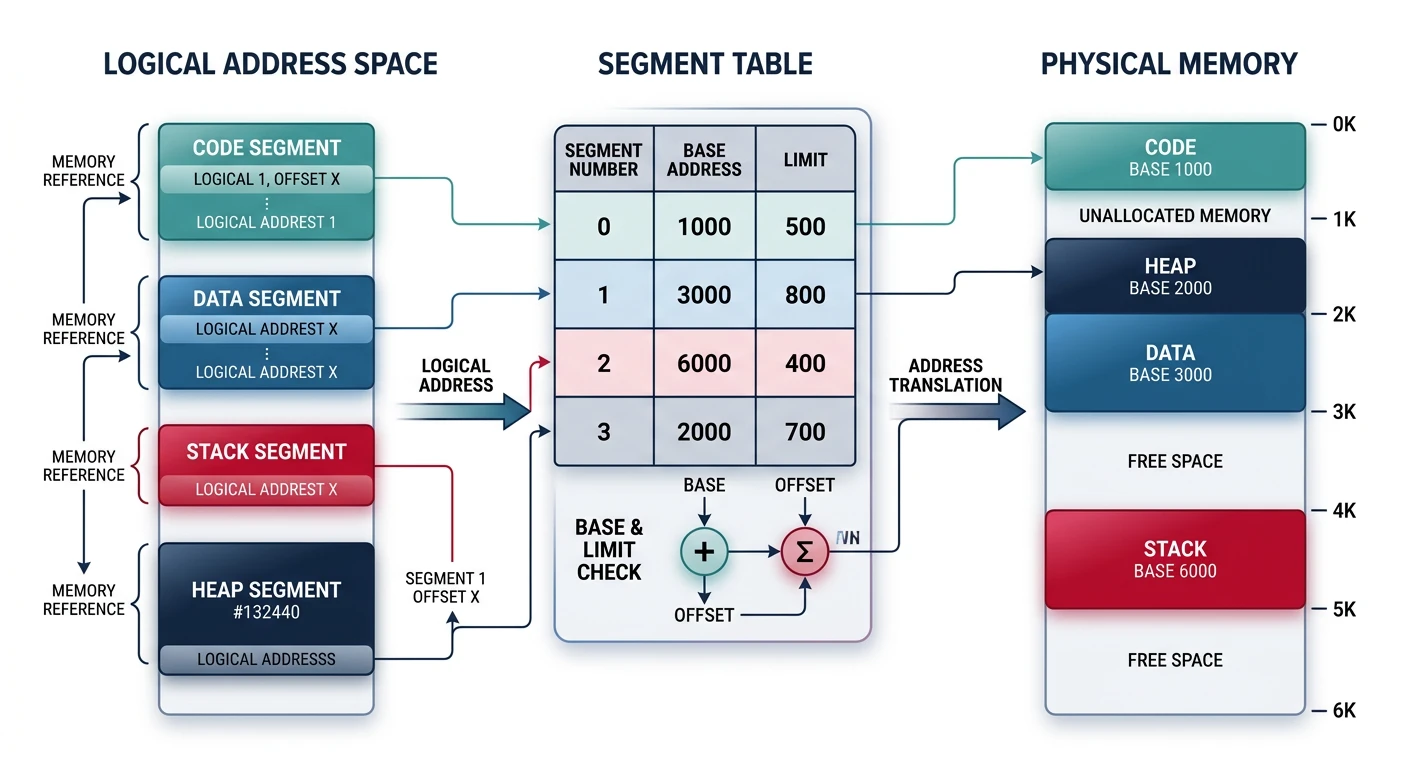

• Power-of-2 allocatorsSegmentation divides a process into logical segments (code, data, stack, heap). Each segment can be placed anywhere in memory.

Segmentation Model:

══════════════════════════════════════════════════════════════

Logical View (Process): Physical Memory:

┌─────────────────────┐ ┌─────────────────────┐

│ Segment 0: Code │ ─────────→│ │

├─────────────────────┤ │ Segment 2 (Stack) │

│ Segment 1: Data │ ───┐ ├─────────────────────┤

├─────────────────────┤ │ │ Process B code │

│ Segment 2: Stack │ ─┐ │ ├─────────────────────┤

├─────────────────────┤ │ └─────→│ Segment 1 (Data) │

│ Segment 3: Heap │ │ ├─────────────────────┤

└─────────────────────┘ │ │ Segment 0 (Code) │

│ ├─────────────────────┤

└───────→│ │

└─────────────────────┘

Segments don't need to be contiguous!

Segment Table:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Segment# Base Limit Permissions

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

0 0x00400000 0x10000 R-X (code)

1 0x00600000 0x05000 RW- (data)

2 0x7FFF0000 0x10000 RW- (stack)

3 0x00800000 0x20000 RW- (heap)

Address Translation:

Logical: (segment#, offset) → Physical: base[segment#] + offset

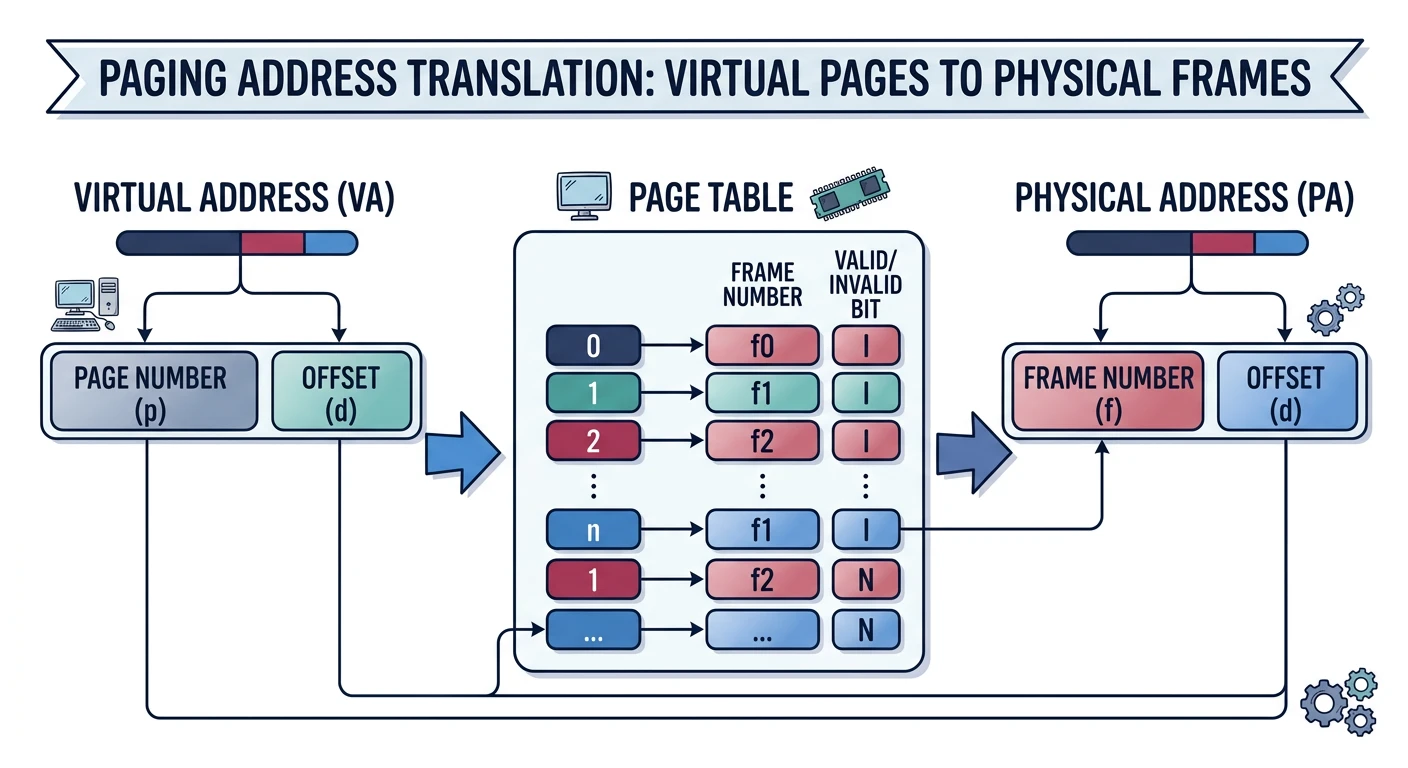

Example: (1, 0x1234) → 0x00600000 + 0x1234 = 0x00601234Paging divides memory into fixed-size blocks. Virtual memory uses pages; physical memory uses frames. No external fragmentation!

Paging Basics:

══════════════════════════════════════════════════════════════

Page: Fixed-size block of virtual memory (typically 4 KB)

Frame: Fixed-size block of physical memory (same size as page)

Virtual Address Space: Physical Memory (Frames):

┌─────────┐ Page 0 ┌─────────┐ Frame 0

├─────────┤ Page 1 ├─────────┤ Frame 1 ←── Page 3

├─────────┤ Page 2 ├─────────┤ Frame 2 ←── Page 0

├─────────┤ Page 3 ├─────────┤ Frame 3 ←── Page 5

├─────────┤ Page 4 ├─────────┤ Frame 4

├─────────┤ Page 5 ├─────────┤ Frame 5 ←── Page 2

└─────────┘ └─────────┘

Pages can map to ANY frame - no contiguity required!

Address Translation:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Virtual Address (32-bit, 4KB pages):

│◄────────── 32 bits ──────────►│

┌────────────────────┬──────────┐

│ Page Number │ Offset │

│ 20 bits │ 12 bits │

└────────────────────┴──────────┘

• Offset: 12 bits = 4096 bytes = 4 KB page size

• Page Number: Index into page table

• Page table maps page# → frame#

Example: Virtual 0x00001234 with 4KB pages

Page# = 0x00001 = 1

Offset = 0x234

If page 1 → frame 7: Physical = 0x00007234# Check page size on Linux

$ getconf PAGE_SIZE

4096

# View huge page support

$ cat /proc/meminfo | grep Huge

HugePages_Total: 0

HugePages_Free: 0

Hugepagesize: 2048 kB # 2 MB huge pages availableThe buddy system allocates memory in power-of-2 sizes. Used by Linux for page-level allocation.

Buddy System Algorithm:

══════════════════════════════════════════════════════════════

Rules:

• Memory blocks are powers of 2 (1, 2, 4, 8... pages)

• Split larger blocks when needed

• Merge adjacent "buddies" when freed

Example: Allocate 3 pages from 16-page pool

1. Start: One 16-page block

[████████████████] 16

2. Need 3 pages → round up to 4. Split 16 → 8+8

[████████][████████] 8 | 8

3. Split one 8 → 4+4

[████][████][████████] 4 | 4 | 8

4. Allocate one 4-page block

[USED][████][████████] 4 | 4 | 8

Free lists by order:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Order 0 (1 page): [empty]

Order 1 (2 pages): [empty]

Order 2 (4 pages): [0x1004000]

Order 3 (8 pages): [0x1008000]

Order 4 (16 pages): [empty]

Buddy Address Calculation:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

For block at address A with size 2^k pages:

Buddy address = A XOR (2^k × page_size)

Example: Block at 0x1000, size 4KB (2^0 = 1 page)

Buddy = 0x1000 XOR 0x1000 = 0x0000 or 0x2000

If buddy is also free → MERGE into larger blockcat /proc/buddyinfo. Shows free blocks at each order (0-10) for each memory zone.

The slab allocator efficiently allocates small, frequently-used kernel objects. Built on top of the buddy system.

Slab Allocator Hierarchy:

══════════════════════════════════════════════════════════════

┌─────────────────┐

│ Kernel Object │ e.g., task_struct

│ Request │

└────────┬────────┘

↓

┌─────────────────────────────────────────────────────────────┐

│ CACHE │

│ (One per object type: task_struct cache, inode cache...) │

├─────────────────────────────────────────────────────────────┤

│ ┌─────────┐ ┌─────────┐ ┌─────────┐ │

│ │ SLAB │ │ SLAB │ │ SLAB │ ... │

│ │ (full) │ │(partial)│ │ (empty) │ │

│ └─────────┘ └─────────┘ └─────────┘ │

└─────────────────────────────────────────────────────────────┘

Each SLAB contains:

┌─────────────────────────────────────────────────────────────┐

│ [obj][obj][obj][obj][obj][obj][obj][obj] │

│ ↑ ↑ ↑ │

│ Used Used Free (linked together in free list) │

└─────────────────────────────────────────────────────────────┘

Benefits:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

1. No fragmentation - objects are same size within cache

2. Fast allocation - grab from free list, O(1)

3. Cache coloring - spread objects across cache lines

4. Object reuse - freed objects can be reused without init# View slab caches on Linux

$ cat /proc/slabinfo | head -20

slabinfo - version: 2.1

# name <active_objs> <num_objs> <objsize> <objperslab>

task_struct 312 330 6784 4

inode_cache 1847 1890 768 21

dentry 4521 4830 192 21

# Better view with slabtop

$ sudo slabtop -o

Active / Total Objects (% used) : 89234 / 95420 (93.5%)

Active / Total Slabs (% used) : 3456 / 3456 (100.0%)

OBJS ACTIVE USE OBJ SIZE SLABS OBJ/SLAB CACHE SIZE NAME

12345 11234 91% 0.19K 588 21 2352K dentry

8901 8500 95% 6.67K 2225 4 8900K task_structMemory management is foundational to OS design. We've covered: