Introduction

Modern systems feature multiple CPU cores and processors. Operating systems must efficiently schedule work across these resources while maintaining cache coherence and minimizing contention.

Explore how operating systems manage multiple processors—SMP architectures, NUMA, cache coherence, and parallel scheduling.

Modern systems feature multiple CPU cores and processors. Operating systems must efficiently schedule work across these resources while maintaining cache coherence and minimizing contention.

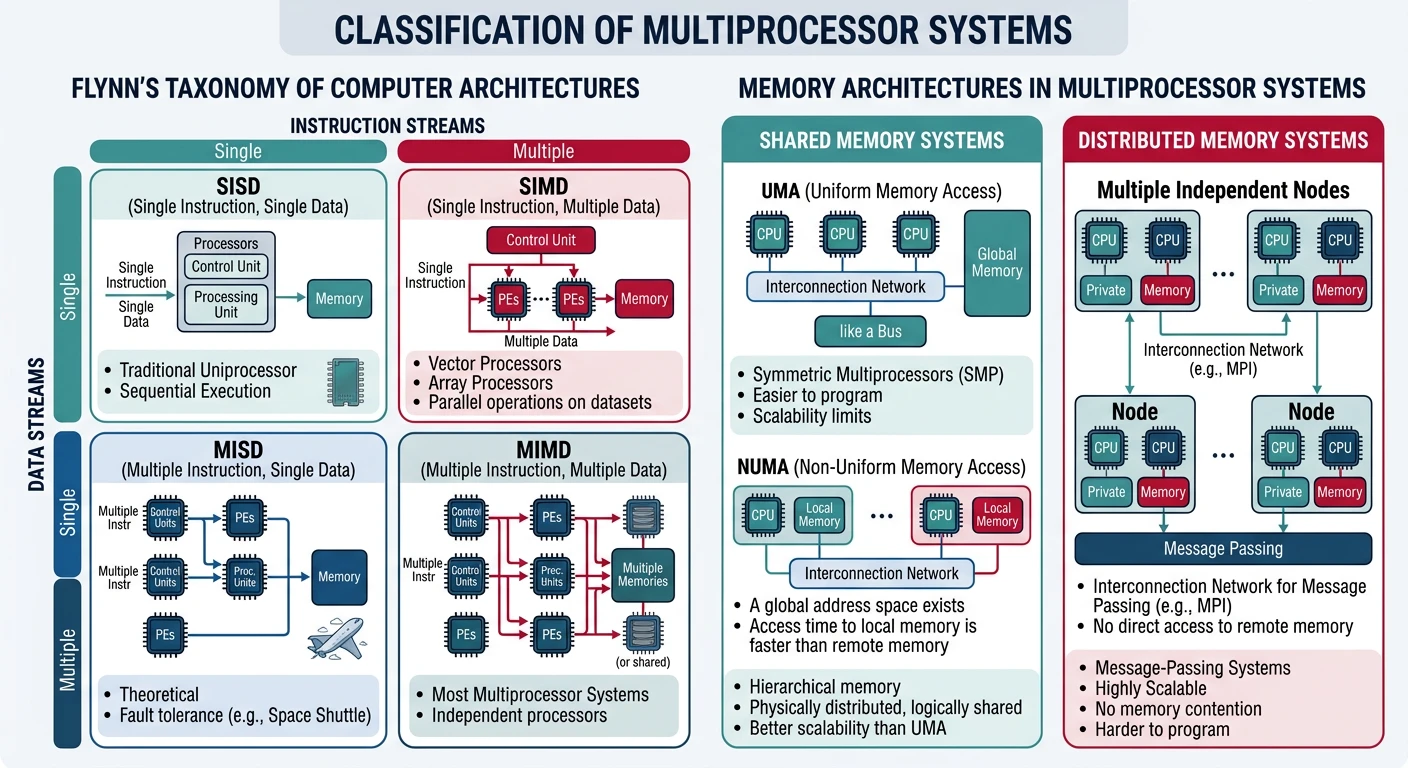

Multiprocessor systems vary dramatically in how CPUs share memory and communicate.

Flynn's Taxonomy:

══════════════════════════════════════════════════════════════

SISD - Single Instruction, Single Data

• Traditional uniprocessor

• One CPU, one data stream

SIMD - Single Instruction, Multiple Data

• GPUs, vector processors

• Same operation on many data elements

• Great for graphics, ML

MIMD - Multiple Instruction, Multiple Data

• General-purpose multiprocessors

• Each CPU runs independent program

• This is what we focus on!

Memory Architecture:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

1. SHARED MEMORY (UMA/NUMA)

• All CPUs access same physical memory

• Communication via shared variables

• Examples: Desktop, server CPUs

2. DISTRIBUTED MEMORY

• Each node has private memory

• Communication via message passing

• Examples: Supercomputers, clustersSMP (also called UMA - Uniform Memory Access) treats all processors equally with uniform memory access times.

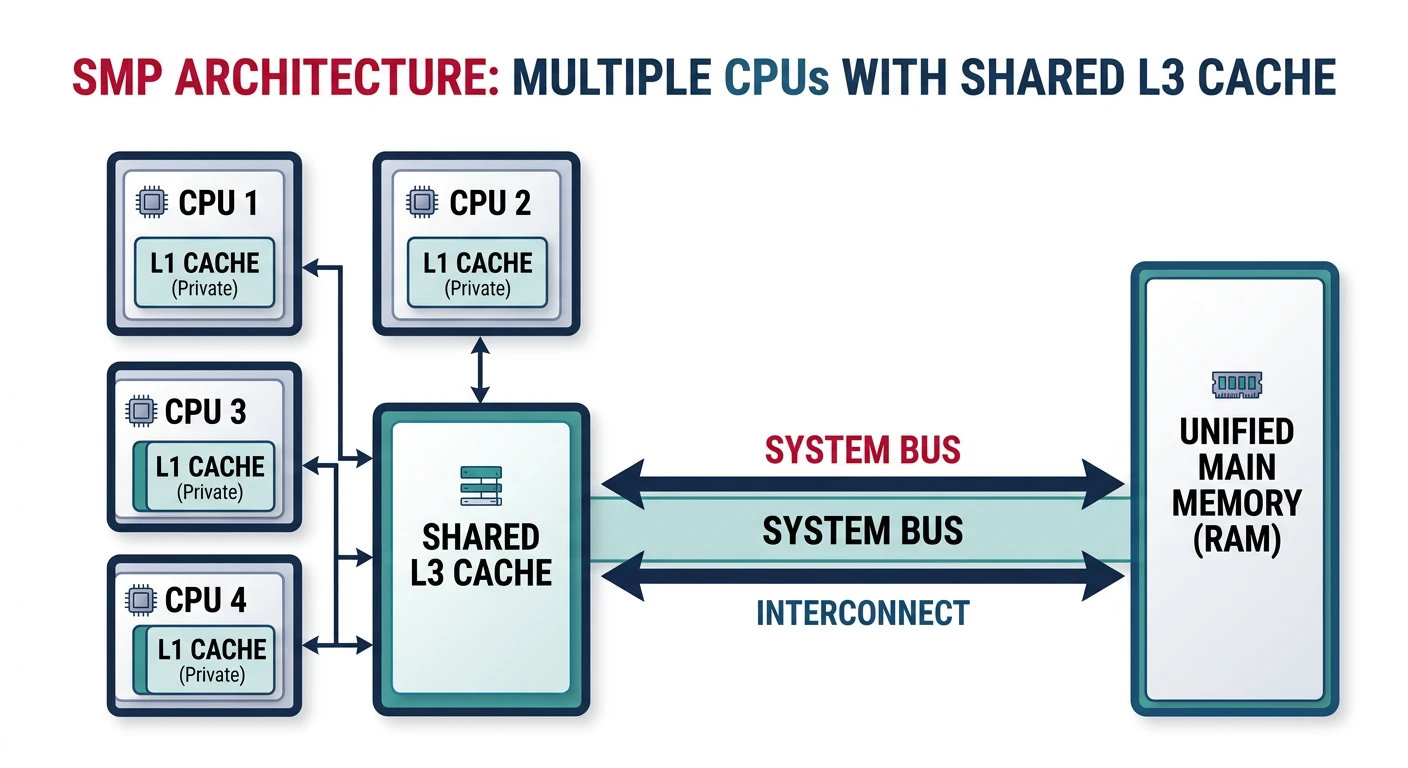

SMP Architecture:

══════════════════════════════════════════════════════════════

┌───────┐ ┌───────┐ ┌───────┐ ┌───────┐

│ CPU 0 │ │ CPU 1 │ │ CPU 2 │ │ CPU 3 │

│ L1 │ │ L1 │ │ L1 │ │ L1 │

└───┬───┘ └───┬───┘ └───┬───┘ └───┬───┘

│ │ │ │

└──────────┼──────────┼──────────┘

│

┌──────┴──────┐

│ L3 Cache │ (shared)

└──────┬──────┘

│

┌──────┴──────┐

│ System Bus │

└──────┬──────┘

│

┌──────┴──────┐

│ Memory │

└─────────────┘

• All CPUs equal - any can run any process

• Uniform access time to all memory

• Single OS instance manages all CPUs

• Shared bus becomes bottleneck beyond ~8 CPUs# View CPU topology on Linux

$ lscpu

Architecture: x86_64

CPU(s): 8

Thread(s) per core: 2

Core(s) per socket: 4

Socket(s): 1

NUMA node(s): 1

# Detailed topology

$ cat /sys/devices/system/cpu/cpu0/topology/core_id

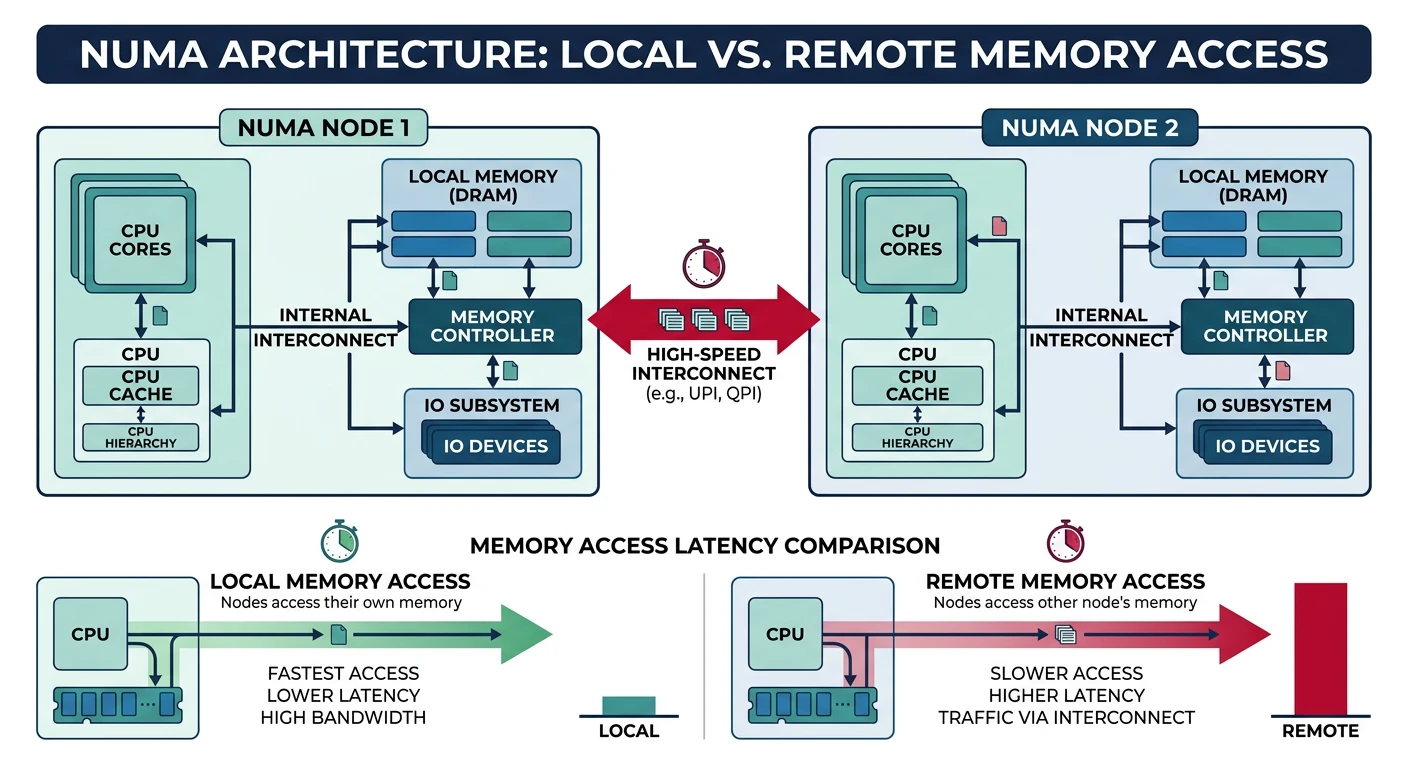

0NUMA (Non-Uniform Memory Access) scales beyond SMP by giving each CPU group its own local memory.

NUMA Architecture (2-node example):

══════════════════════════════════════════════════════════════

NUMA Node 0 NUMA Node 1

┌────────────────────┐ ┌────────────────────┐

│ CPU0 CPU1 CPU2 │ │ CPU4 CPU5 CPU6 │

│ CPU3 │ │ CPU7 │

└─────────┬──────────┘ └─────────┬──────────┘

│ │

┌─────────┴──────────┐ ┌─────────┴──────────┐

│ Local Memory │ │ Local Memory │

│ (64 GB) │ │ (64 GB) │

└─────────┬──────────┘ └─────────┬──────────┘

│ │

└───────────┬───────────┘

│

┌─────────┴─────────┐

│ Interconnect │ (QPI, UPI, etc.)

└───────────────────┘

Memory Access Latencies:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

CPU0 accessing Node 0 memory: ~80 ns (local)

CPU0 accessing Node 1 memory: ~150 ns (remote, 2x!)

NUMA ratio = remote/local latency

Typical: 1.5x to 2x penalty for remote access# View NUMA topology

$ numactl --hardware

available: 2 nodes (0-1)

node 0 cpus: 0 1 2 3

node 0 size: 65536 MB

node 1 cpus: 4 5 6 7

node 1 size: 65536 MB

node distances:

node 0 1

0: 10 21 # Local=10, remote=21 (2.1x penalty)

1: 21 10

# Run program on specific node

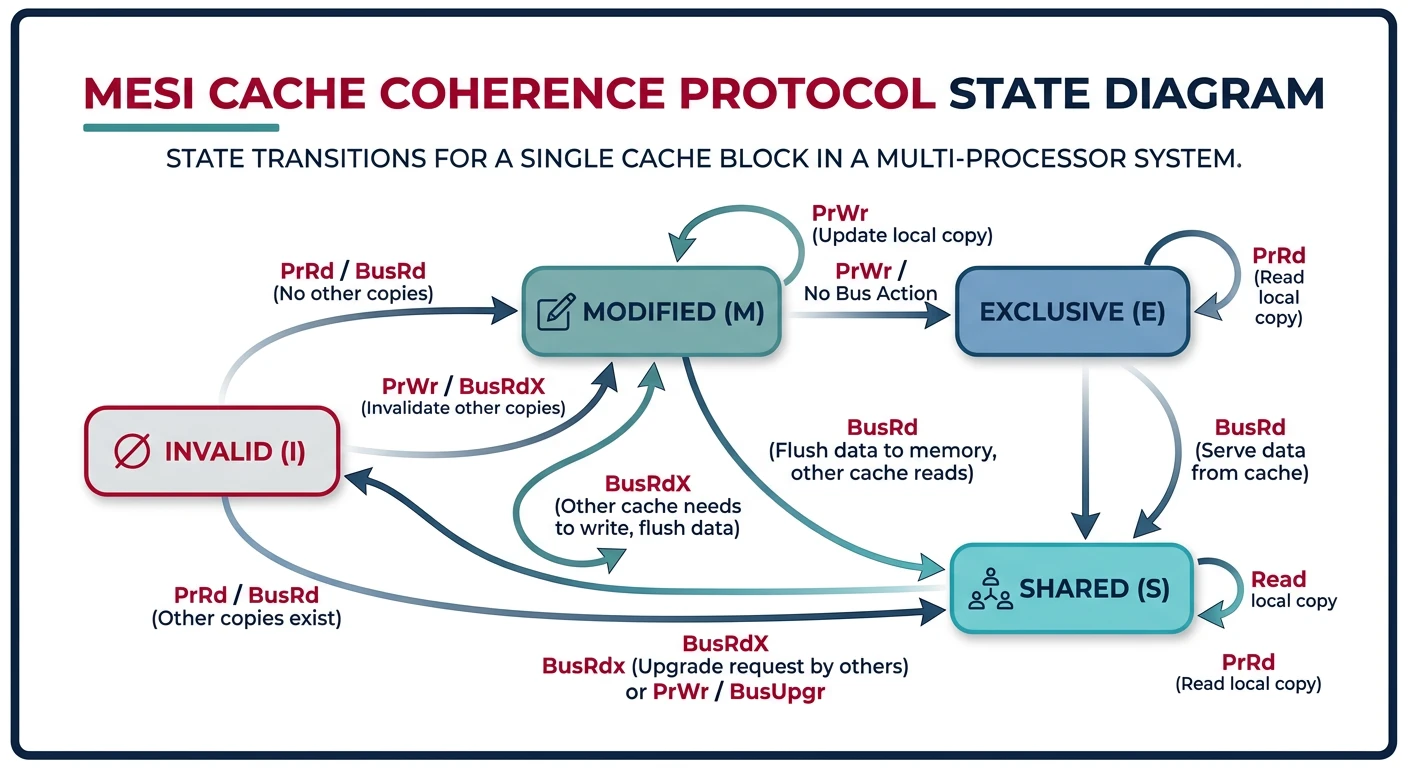

$ numactl --cpunodebind=0 --membind=0 ./my_programWhen multiple CPUs cache the same data, we need cache coherence to ensure consistency.

The Cache Coherence Problem:

══════════════════════════════════════════════════════════════

1. CPU0 reads X=5 into cache

2. CPU1 reads X=5 into cache

3. CPU0 writes X=10 (updates its cache)

4. CPU1 reads X... sees stale value 5!

CPU0 Cache CPU1 Cache

┌─────────┐ ┌─────────┐

│ X = 10 │ │ X = 5 │ ← Incoherent!

└─────────┘ └─────────┘

│ │

┌────┴───────────────┴────┐

│ Memory: X = 5 │

└───────────────────────────┘

MESI Protocol (common solution):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Modified: This cache has only valid copy (dirty)

Exclusive: This cache has only valid copy (clean)

Shared: Multiple caches have copy (read-only)

Invalid: Cache line is stale/empty

When CPU0 writes:

1. Invalidate all other caches holding that line

2. Transition to Modified state

3. Other CPUs must fetch from CPU0 or memoryScheduling for multiple CPUs introduces new challenges beyond single-CPU scheduling.

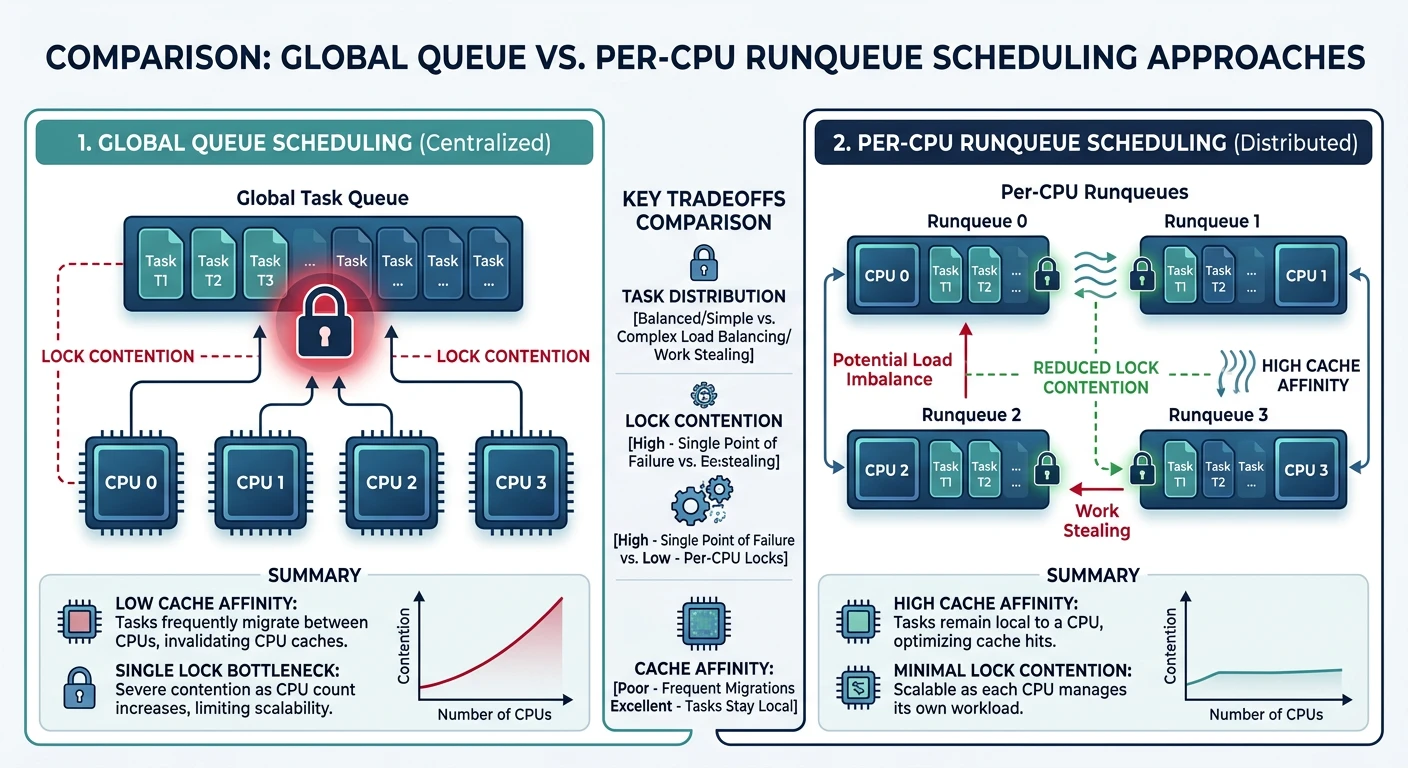

Scheduling Approaches:

══════════════════════════════════════════════════════════════

1. GLOBAL QUEUE (Single shared queue)

┌───────────────────────────┐

│ Task Queue: [T1][T2][T3]... │

└───────┬─────┬──────┬───────┘

│ │ │

CPU0 CPU1 CPU2

✓ Automatic load balancing

✗ Queue lock is bottleneck

✗ Poor cache locality

2. PER-CPU QUEUES (Linux approach)

CPU0 Queue CPU1 Queue CPU2 Queue

[T1][T4] [T2][T5] [T3][T6]

│ │ │

CPU0 CPU1 CPU2

✓ No lock contention

✓ Better cache affinity

✗ Can become unbalanced

→ Need load balancing between queuesProcessor affinity keeps threads on the same CPU to maintain cache warmth.

# View process affinity

$ taskset -p $$

pid 1234's current affinity mask: ff # Can run on CPUs 0-7

# Set affinity - run on CPUs 0 and 1 only

$ taskset -c 0,1 ./my_program

# Change running process

$ taskset -p -c 0-3 1234

# In C code:

#include <sched.h>

cpu_set_t cpuset;

CPU_ZERO(&cpuset);

CPU_SET(0, &cpuset); // Run on CPU 0

sched_setaffinity(0, sizeof(cpuset), &cpuset);Per-CPU queues need load balancing to prevent idle CPUs while others are overloaded.

Linux Load Balancing:

══════════════════════════════════════════════════════════════

Scheduling Domains (hierarchical):

┌─────────────┐

│ System │ Balance every 64ms

└──────┬──────┘

┌───────┴───────┐

┌─────┴─────┐ ┌─────┴─────┐

│ NUMA 0 │ │ NUMA 1 │ Balance every 16ms

└────┬─────┘ └────┬─────┘

┌───┴───┐ ┌───┴───┐

┌─┴─┐ ┌─┴─┐ ┌─┴─┐ ┌─┴─┐

│C0│ │C1│ │C2│ │C3│ Core level

└──┘ └──┘ └──┘ └──┘ Balance every 4ms

Balancing prefers:

1. Same core (shared L2) - cheapest

2. Same NUMA node - moderate cost

3. Different NUMA node - most expensive

Push vs Pull Migration:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Push: Busy CPU pushes tasks to idle CPUs (periodic)

Pull: Idle CPU steals tasks from busy CPUs (immediate)Multiprocessor systems are now the norm. We've covered: